A different premise

Most AI image reviews focus on outputs—how realistic they look, how fast they render, or which model “wins.” That approach is useful, but it overlooks a more revealing question: what actually happens as you increase the number of images a model has to work with?

Instead of comparing results, this article looks at something deeper—control under image load.

As you move from zero to four images, something subtle but important begins to shift. The model doesn’t simply gain more context; it starts to change how it handles images altogether.

At a certain point, the model is no longer “using” images.

It is reconstructing them.

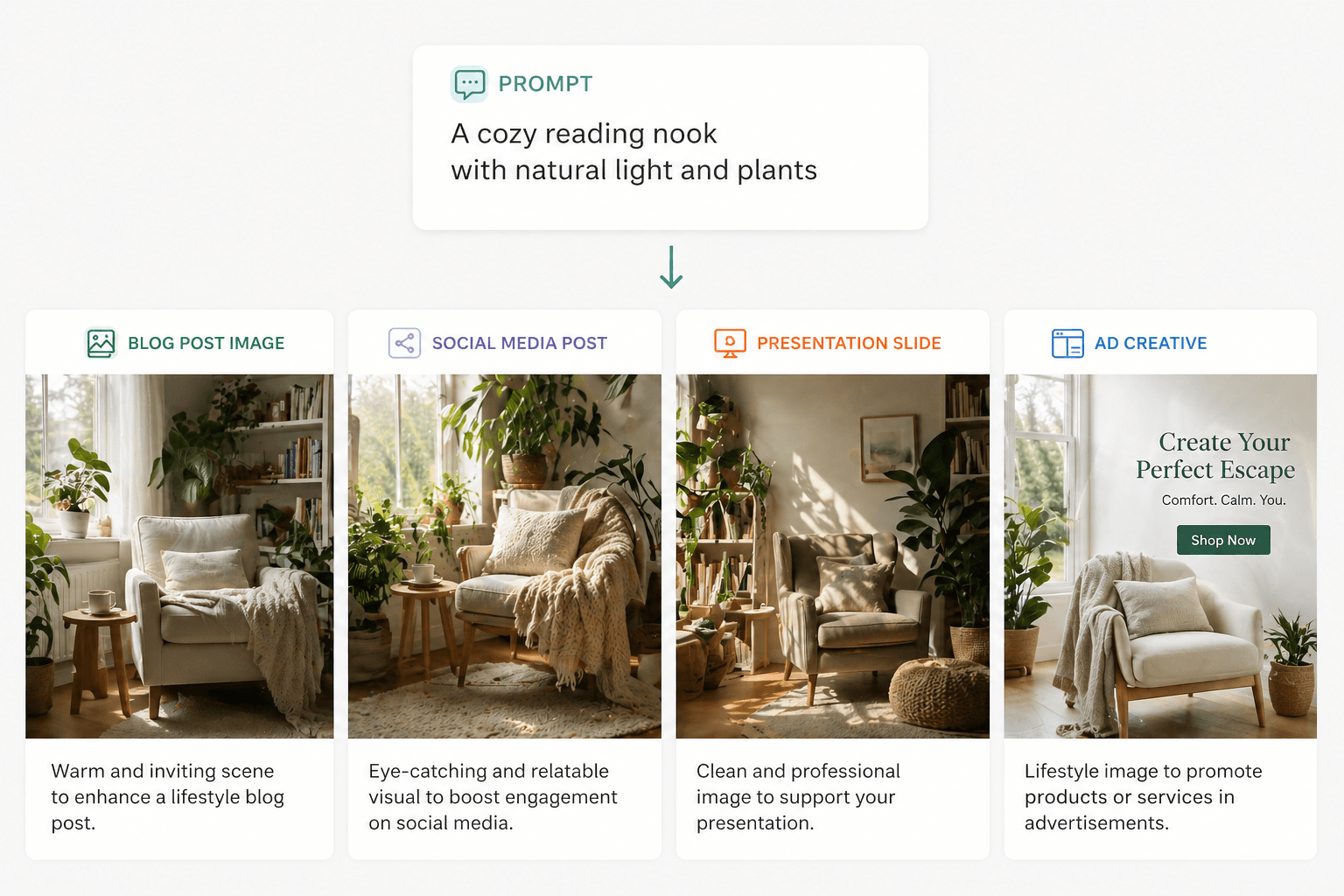

0 Images

The illusion of understanding

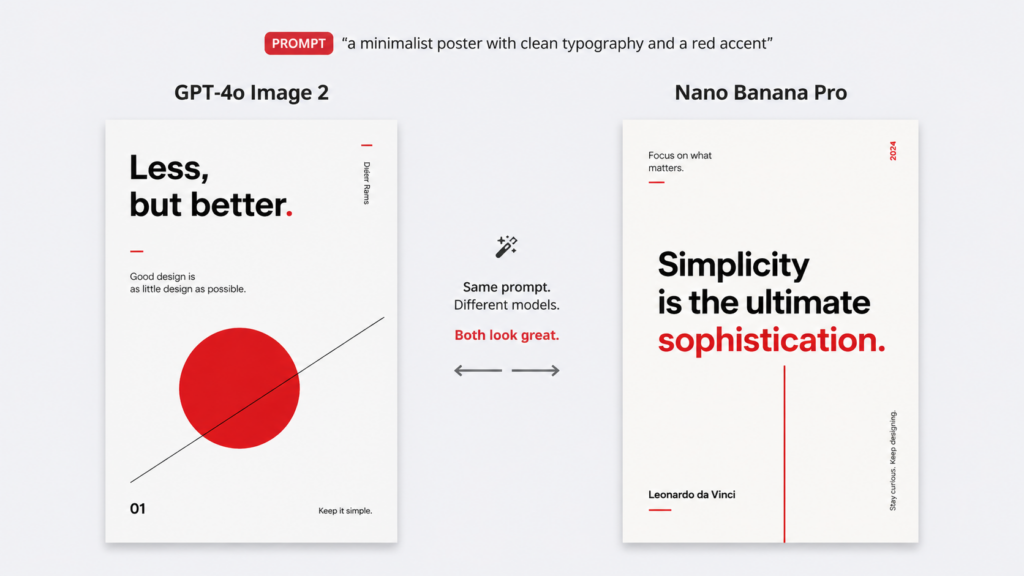

When no images are provided, everything appears to work smoothly. The model seems capable of understanding your prompt and turning it into a coherent visual output.

However, what’s really happening is more limited than it seems. The model is not interpreting reality—it is constructing a plausible visual scene based entirely on language.

This is why, at this stage, both GPT Image 2 and Nano Banana Pro perform well. OpenAI emphasizes layout, text rendering, and instruction-following, while Google highlights precision and control. With no visual constraints, both models can fully express their strengths.

At the same time, this also means something important is missing:

There is nothing pushing back against the model yet.

What users actually notice

“The quality jump is ridiculous.”

— Reddit user, reacting to GPT Image 2

People talk about sharpness, realism, and style. Very few mention control, consistency, or fidelity—because none of those are being meaningfully tested yet.

This is a useful clue, because it shows how people naturally evaluate image models before they become technically demanding. At zero images, users reward confidence. They want the image to feel coherent, polished, and visually complete. In other words, they are judging whether the model can create the impression of understanding before there is any real constraint to challenge it.

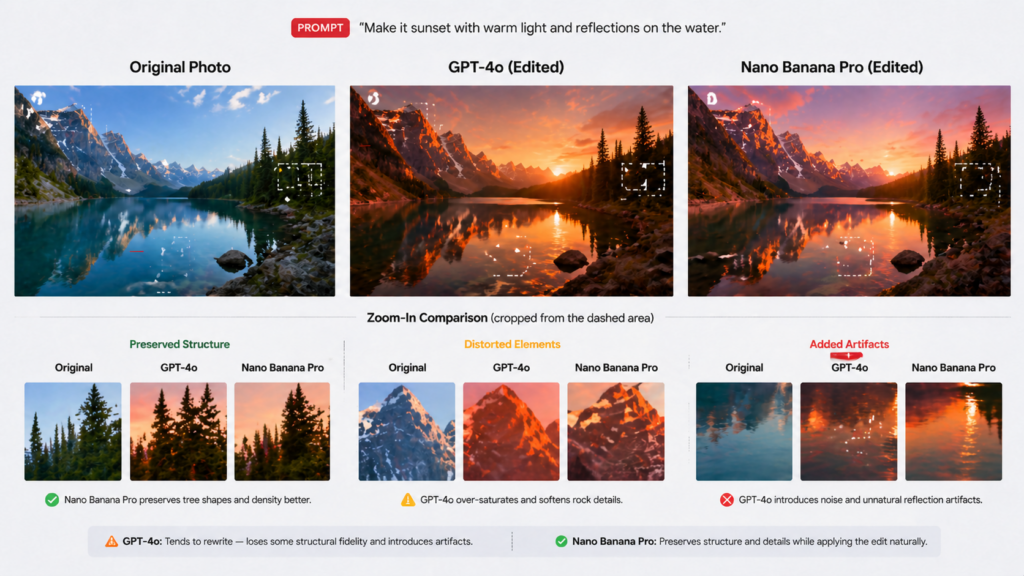

1 Image

The first real conflict

Once a single image is introduced, the task changes completely.

Now the model must decide how to treat that image. Should it preserve the original structure, or reinterpret it according to the prompt? In practice, most models do not simply “edit” images—they negotiate between two competing forces: the input image and the instruction.

This is where things start to break in subtle ways.

What users report

“It kind of overlays over the reference image… you can see it shimmer through.”

— OpenAI Community

“I attached more reference photos of myself.”

— Reddit user

What this reveals

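Taken together, these observations point to the same issue. In the first, the source bleeds through a newly generated layer; in the second, the user appears to compensate by supplying more references. Either way, the model is not truly modifying the image; it is generating a new image around it.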

That is why one-image workflows are often more fragile than they look. They expose whether the model is capable of subtle control or whether it tends to replace the source with a newly generated approximation. For users, that difference is not cosmetic. It decides whether the model feels like a real editing tool or just a generator that happens to accept images.
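One rough way to make the "edit versus regenerate" distinction measurable is to count how much of the source survives in the output. The sketch below is only an illustration under simplifying assumptions: images are same-sized grids of grayscale values, and the helper name `preserved_fraction` and the `tolerance` parameter are hypothetical, not part of any model's API.

```python
from typing import List

# Toy representation: a grayscale image as rows of 0-255 values (an assumption).
Image = List[List[int]]


def preserved_fraction(source: Image, output: Image, tolerance: int = 8) -> float:
    """Fraction of pixels whose value stayed within `tolerance` of the source."""
    if len(source) != len(output) or any(
        len(s_row) != len(o_row) for s_row, o_row in zip(source, output)
    ):
        raise ValueError("images must have the same dimensions")
    total = sum(len(row) for row in source)
    if total == 0:
        raise ValueError("images must not be empty")
    kept = sum(
        1
        for s_row, o_row in zip(source, output)
        for s, o in zip(s_row, o_row)
        if abs(s - o) <= tolerance
    )
    return kept / total


# Synthetic examples, not real model outputs.
source = [[10, 10, 200], [10, 10, 200]]
local_edit = [[10, 10, 90], [10, 10, 200]]    # a single pixel was changed
regenerated = [[40, 60, 180], [35, 70, 150]]  # every pixel drifted

print(preserved_fraction(source, local_edit))
print(preserved_fraction(source, regenerated))
```

A true local edit should leave this score high everywhere outside the edited region, while a regenerated approximation tends to pull it down across the whole frame, even when the result looks similar at a glance.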

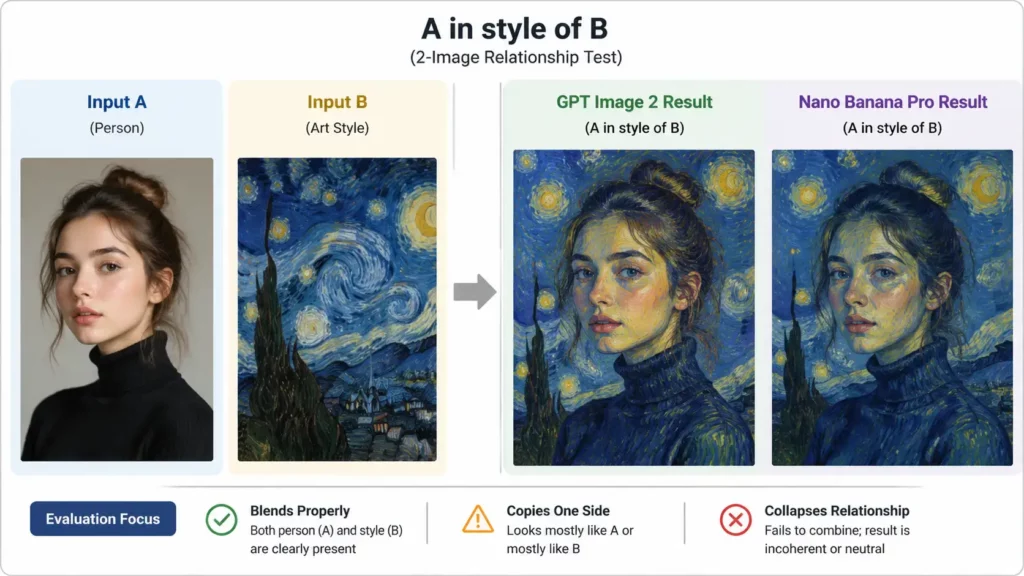

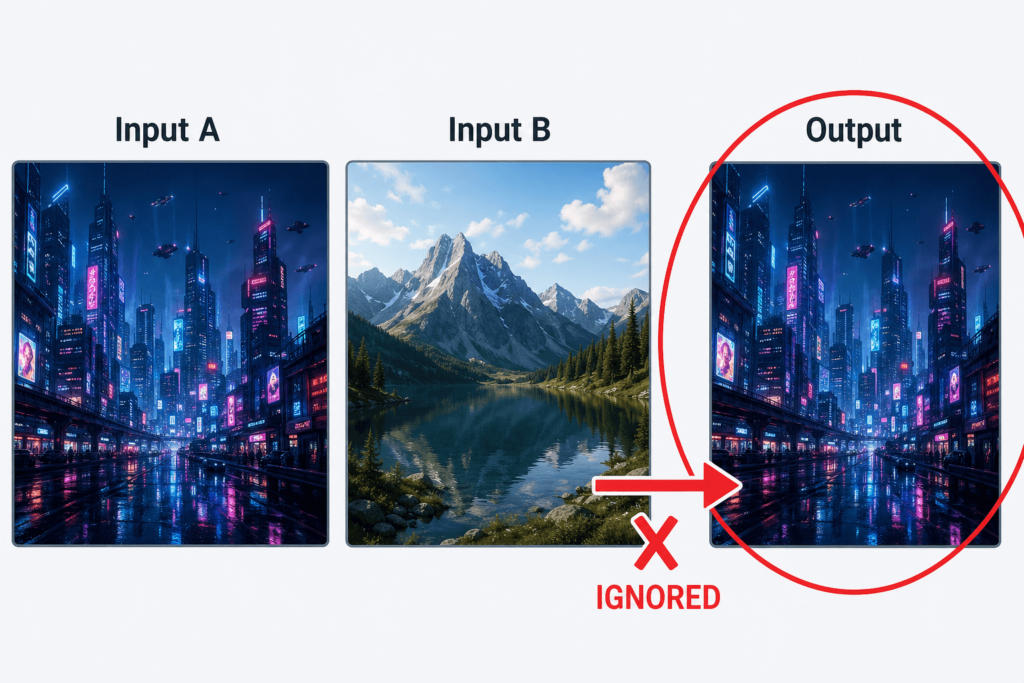

2 Images

Where things start to break

With two images, the model is no longer dealing with a single source of truth. Instead, it must understand and resolve a relationship.

A common failure pattern

“It just spits out a duplicate of one of the references.”

— Reddit user testing multi-image prompts

Key insight

Multi-image capability is often described as “fusion.”

In reality, it is a test of conflict resolution.

That is why the word “fusion” can be misleading. Fusion sounds like a creative blend, but in many cases the model is not blending at all. It is simplifying. It removes friction by choosing the easier path, which is often to let one source dominate. The output may look complete, but the logic behind it is thinner than it appears.
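The "one source dominates" pattern can be checked in a similarly rough way: compare the output against each reference and flag the case where it is nearly a copy of one of them. This is a minimal sketch, assuming flattened grayscale pixel lists of equal length; `similarity`, `dominant_reference`, and the `duplicate_threshold` value are hypothetical choices made for illustration.

```python
from typing import List, Optional, Sequence


def similarity(a: Sequence[int], b: Sequence[int]) -> float:
    """1.0 for identical pixel lists, 0.0 for maximally different ones."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and the same length")
    mean_abs_diff = sum(abs(x - y) for x, y in zip(a, b)) / len(a)
    return 1.0 - mean_abs_diff / 255.0


def dominant_reference(
    output: Sequence[int],
    references: List[Sequence[int]],
    duplicate_threshold: float = 0.98,  # assumed cutoff, tune per use case
) -> Optional[int]:
    """Index of a reference the output nearly duplicates, or None."""
    scores = [similarity(output, ref) for ref in references]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best if scores[best] >= duplicate_threshold else None


# Synthetic examples, not real model outputs.
ref_a = [0, 0, 255, 255]
ref_b = [255, 255, 0, 0]
near_copy = [0, 2, 255, 253]    # almost identical to ref_a
blend = [128, 128, 128, 128]    # equally far from both references

print(dominant_reference(near_copy, [ref_a, ref_b]))
print(dominant_reference(blend, [ref_a, ref_b]))
```

A pixel-level comparison like this only catches near-verbatim duplication. Softer forms of dominance, where one reference sets the composition and the other contributes almost nothing, would need a perceptual or embedding-based similarity instead.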

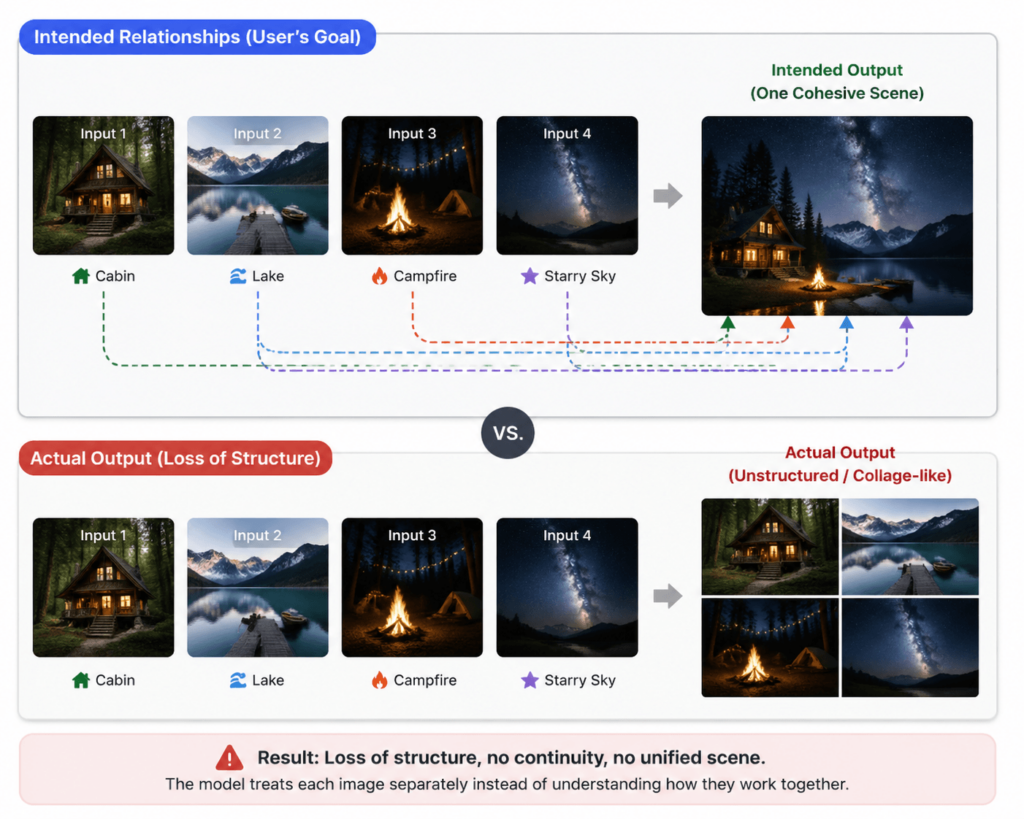

3–4 Images

The point where control starts to slip

When the number of input images reaches three or four, the problem changes once again.

What users are actually asking for

“Multi-image continuity (n=8)”

— Reddit discussion

“How do I get individual outputs instead of a collage?”

— Nano Banana user

Key insight

Beyond three images, the challenge is no longer creativity.

It is stability under complexity.

Once the input reaches three or four images, the task becomes less forgiving. Now the model has to preserve multiple relationships at once, and every additional image increases the chance that something important will be lost. Some outputs begin to feel over-combined, while others feel as if the model has merged everything into a single generic structure.

At this stage, the best results are not necessarily the most impressive-looking ones. They are the ones that still preserve boundaries. If the model can keep separate inputs recognizable while still producing a coherent whole, then it is doing something genuinely useful. If not, the output may be visually rich but structurally weak.

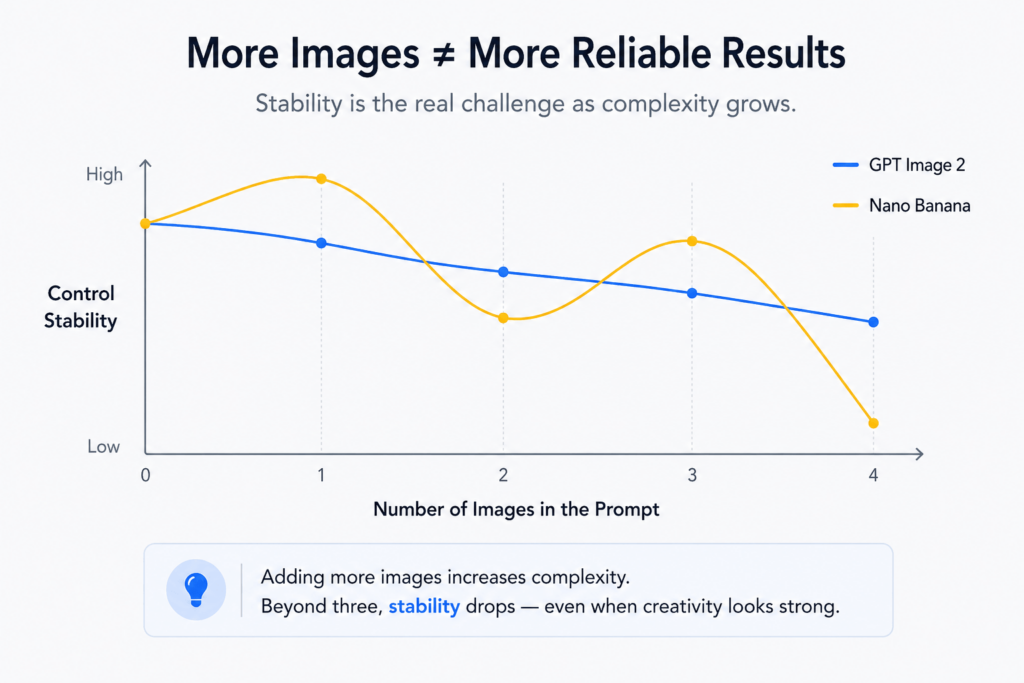

A hidden curve

Final thought

It is tempting to assume that giving a model more images will make it more accurate. In practice, the opposite often happens. More input can mean more ambiguity, more conflict, and more chances for the model to simplify the task in ways that reduce control.

That is why the real question is not whether the model can generate something impressive. It is whether it can keep the structure intact as the visual load increases. At that point, the model is no longer just making an image. It is trying to manage a system of relationships. And that is where its real limits begin to show.
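If you score fidelity at each image count, for instance with a preservation metric of your own choosing, the point where control slips can be located as the step with the steepest decline. The sketch below assumes such scores already exist; `steepest_drop` is a hypothetical helper, and the numbers in the example are placeholders rather than measurements.

```python
from typing import Dict, Optional, Tuple


def steepest_drop(scores_by_image_count: Dict[int, float]) -> Optional[Tuple[int, float]]:
    """Return (image_count, drop) for the step where the score falls the most.

    Returns None when the score never decreases between consecutive counts.
    """
    counts = sorted(scores_by_image_count)
    worst: Optional[Tuple[int, float]] = None
    for prev, curr in zip(counts, counts[1:]):
        drop = scores_by_image_count[prev] - scores_by_image_count[curr]
        if drop > 0 and (worst is None or drop > worst[1]):
            worst = (curr, drop)
    return worst


# Placeholder values for illustration only, not measured results.
placeholder_scores = {1: 0.90, 2: 0.85, 3: 0.50, 4: 0.45}
print(steepest_drop(placeholder_scores))
```

Zero images is left out on purpose: with no input image there is nothing to stay faithful to, so a fidelity score only becomes meaningful from one image onward.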
