This Is Not Just About Better Images

If you frame GPT Image 2 as just another step forward in image quality, the argument quickly becomes forgettable. Yes, the outputs are cleaner, more coherent, and easier to control—but that alone is not what’s changing the landscape.

What’s actually shifting is more subtle, and far more structural. This model doesn’t just improve how images look; it changes how style behaves, how it moves, and how easily it can be reused.

Style Used to Live With the Creator

It was built, not specified

Before models like this, style was something you accumulated over time. It came from repetition, from taste, from hundreds of small decisions that were difficult to articulate but easy to recognize. You could try to imitate someone else’s work, but reproducing it consistently was another matter entirely.

There was always friction between what you imagined and what you could execute. That gap is where a lot of authorship lived.

Tools helped you execute, not define

Software such as Adobe Photoshop expanded what skilled users could do, but it didn’t fundamentally relocate style. The tool responded to the user; it didn’t absorb or formalize their visual language.

So style remained embodied. It stayed with the person.

Now Style Moves Upstream

It becomes part of the instruction

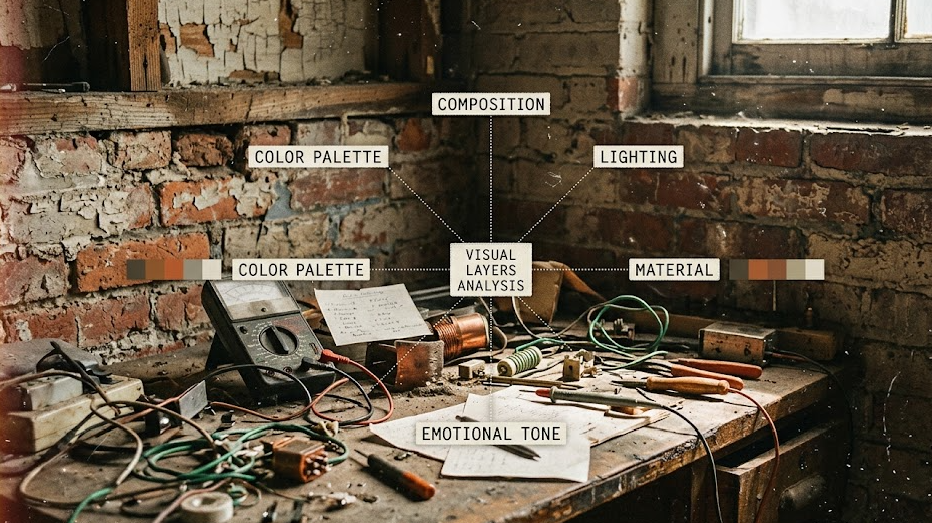

With GPT Image 2, that relationship starts to invert. Instead of discovering a style through making, you begin by describing it. The more precisely you can define lighting, composition, material, and tone, the closer the output aligns with your intent.

Style, in other words, is no longer just an outcome—it becomes part of the input.

It starts to behave like a system

Once you work this way for a while, something becomes clear. Style is no longer a single, indivisible quality; it breaks into components that can be adjusted independently. You might keep the composition but change the lighting, or preserve the mood while altering texture and color.

The process feels less like crafting something from scratch and more like assembling a set of controlled variables.
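One way to picture style as a set of controlled variables is a small data structure whose fields can be changed one at a time. The sketch below is illustrative only: `StyleSpec`, its field names, and `to_prompt` are hypothetical and are not part of GPT Image 2 or any real API. It only assembles a text prompt and does not call a model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class StyleSpec:
    """Hypothetical container for the style components named in the text."""
    lighting: str
    composition: str
    material: str
    tone: str

    def to_prompt(self, subject: str) -> str:
        # Join the subject and each style component into one plain-text instruction.
        parts = [subject, self.lighting, self.composition, self.material, self.tone]
        return ", ".join(parts)


base = StyleSpec(
    lighting="soft window light",
    composition="centered subject with wide negative space",
    material="matte ceramic",
    tone="calm and muted",
)

# Keep composition, material, and tone; change only the lighting.
variant = replace(base, lighting="hard noon sunlight")

print(base.to_prompt("a teapot on a table"))
print(variant.to_prompt("a teapot on a table"))
```

Because the spec is immutable and each variant is derived with `replace`, the unchanged components stay identical across versions, which is the "keep the composition but change the lighting" pattern described above.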

The Bottleneck Has Quietly Shifted

Execution is no longer the hard part

When a model can follow detailed instructions with reasonable consistency, technical execution stops being the main constraint. Most users can now generate something “good enough” with relatively little effort.

The difficulty moves upstream.

Clarity becomes the new constraint

What matters more is whether you know what you’re asking for. Vague prompts produce vague outputs; precise intent produces direction. That sounds obvious, but in practice it changes who stands out.

The advantage no longer lies with the person who can render an image, but with the person who can define it.

Real Usage Already Reflects This

The conversation has changed

If you look at how people discuss these models, the tone is noticeably different from earlier waves of image generation. Instead of reacting with pure amazement, users focus on control: whether the prompt was followed, whether the style holds across variations, and where the model starts to drift.

This is less about spectacle and more about reliability.

Failures reveal more than successes

Interestingly, the most revealing outputs are often the imperfect ones. Slight inconsistencies, broken details, or subtle mismatches between instruction and result expose where the system still struggles.

Those moments are not just glitches; they map the edges of what a described style can and cannot yet control.

Style Ownership Becomes Less Clear

From signature to reusable logic

When a style can be described with enough precision, it can also be reproduced by others. That doesn’t erase authorship, but it does complicate it.

Style begins to function less like a personal signature and more like a reusable logic.

What can be described can be shared

Unlike a signature, which is tied to a single person, a described style can be reused, modified, and scaled. It can move across teams, across projects, and across contexts without losing its core structure.

That changes how value is distributed. The creator still matters, but so does the person who knows how to apply, adapt, and refine the style in practice.

Taste Doesn’t Disappear — It Relocates

Selection becomes the real work

As generation becomes easier, decision-making becomes harder. Instead of struggling to produce one viable image, you are faced with many acceptable ones, each slightly different.

The question shifts from “Can I make this?” to “Which version actually works?”

Judgment becomes visible again

Taste now shows up in selection, in restraint, and in timing. It appears in knowing when to stop iterating, when something feels over-designed, or when a result lacks tension.

These are quieter skills, but they are harder to automate.

Creation Turns Into a Process

Not a single act, but a loop

The workflow is no longer linear. It cycles through intent, prompting, variation, selection, and refinement. Each step informs the next, and the final result emerges from that loop rather than from a single decisive moment.
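The loop can be sketched in a few lines, assuming each stage is a plain function. Everything here is a placeholder: `creative_loop`, `fake_generate`, `fake_score`, and `fake_refine` are hypothetical names, no image model is called, and the scoring function merely stands in for the human judgment the essay describes.

```python
from typing import Callable, List


def creative_loop(
    intent: str,
    generate: Callable[[str, int], List[str]],
    score: Callable[[str], float],
    refine: Callable[[str, str], str],
    rounds: int = 3,
    variations: int = 4,
) -> str:
    """Cycle through prompting, variation, selection, and refinement."""
    prompt = intent  # the first prompt is the stated intent
    best = ""
    best_score = float("-inf")
    for _ in range(rounds):
        candidates = generate(prompt, variations)  # variation
        pick = max(candidates, key=score)          # selection
        pick_score = score(pick)
        if pick_score > best_score:
            best, best_score = pick, pick_score
        prompt = refine(prompt, pick)              # refinement feeds the next round
    return best


# Stand-ins so the sketch runs without any image model.
def fake_generate(prompt: str, n: int) -> List[str]:
    return [f"{prompt} | v{i}" for i in range(n)]


def fake_score(candidate: str) -> float:
    # Placeholder for taste: in practice a person makes this call.
    return float(len(candidate))


def fake_refine(prompt: str, chosen: str) -> str:
    return prompt + " (refined)"


result = creative_loop("a quiet harbor at dawn", fake_generate, fake_score, fake_refine)
print(result)
```

The point of the structure is that the result is whatever survives repeated selection, not the output of any single call.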

What Actually Becomes Rare

Not style, but direction

As tools like Midjourney and GPT Image 2 make style easier to access, the scarcity shifts elsewhere. Style itself becomes more available, more flexible, and less tied to any one individual.

What remains difficult is direction—knowing what to pursue, what to ignore, and what to finalize.

Closing Thought

Style is no longer only something you develop over time. It is increasingly something you can define, call upon, and reuse across contexts.

That shift does not remove creativity. It relocates it—away from execution and toward judgment, structure, and intent.
