I did not expect to stop scrolling.

For the past few weeks, most conversations around GPT Image 2 have sounded familiar: job anxiety, copyright concerns, image misuse, and the sense that AI is moving faster than people can emotionally process. That story is real. It deserves to be taken seriously. But it is also incomplete.

Because somewhere in the middle of all that noise, another kind of image started appearing in the feed.

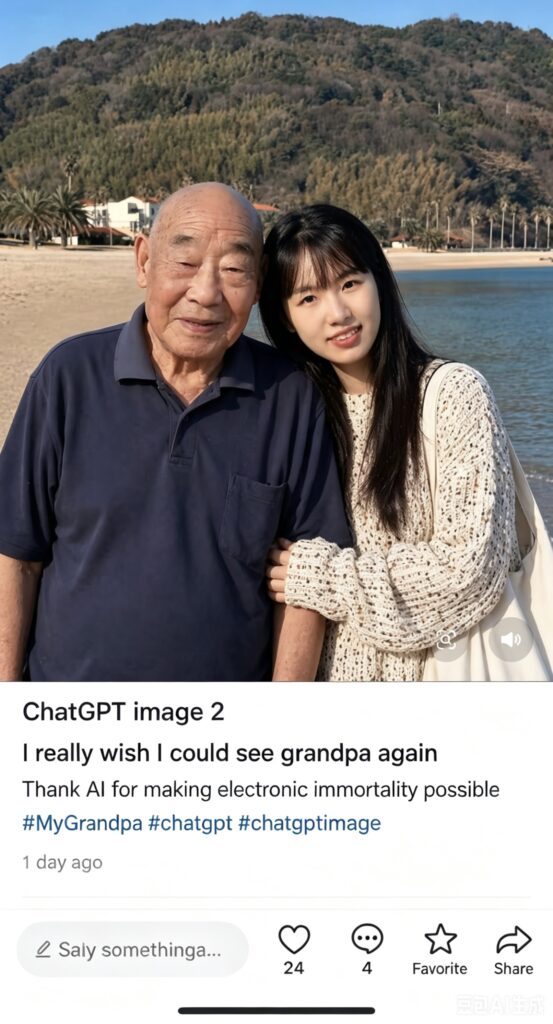

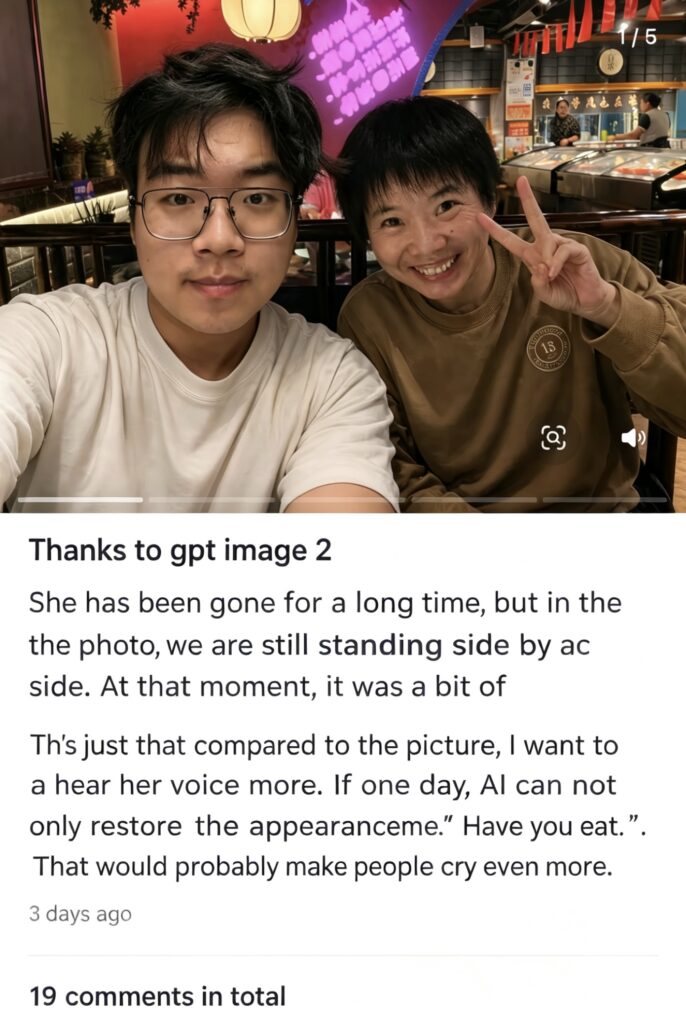

A young person standing beside a grandfather who passed away years ago. A woman “meeting” her grandmother for the first time through a generated photo. A restored family portrait that had been damaged, faded, or almost lost.

Under one of those posts, the caption was simple: “This photo is made with love.”

It was not a technical flex. There was no talk of prompts, resolution, or model performance. It felt more like a wish than a request:

“Let me stand next to them.”

That is what stayed with me.

When photos stop being proof

We used to think of photographs as evidence.

Something happened. The camera caught it. The image proved it.

That was the basic contract of photography: a photo pointed backward toward a real moment in time.

But GPT Image 2 complicates that contract. It can now generate scenes that never took place, restore scenes that barely survived, and rebuild faces that memory has almost erased. These images are not records in the traditional sense. They are reconstructions. Sometimes they are even imaginations of what we wish had happened.

Oddly enough, that does not seem to bother many of the people making them.

What they want is not factual certainty. They already know the image is generated. They know the reunion did not occur in the physical world. What they are searching for is something else: emotional completion.

From a communication perspective, that shift matters. When the cost of generating an image becomes almost nothing, the image itself becomes less precious. What becomes precious is the intention behind it.

Why this image? Why this person? Why now?

Those questions tell us far more than the pixels do.

Grief has always looked for a medium

There is something deeply human about trying to give shape to absence.

People write letters to the dead. They keep voice messages they never delete. They return to old photos, even when the faces in them are blurry or incomplete. Grief has never been purely private; it always looks for some form of expression.

That is why these generated images feel so powerful. They are not just visual outputs. They are containers for longing.

A blurry photograph is never only about blur. A missing face is never only about missing detail. Over time, memory itself frays. Names become harder to say. Stories lose their edges. A person who once shaped an entire family can slowly become a faint outline.

That is where GPT Image 2 enters the story—not as a perfect solution, and not as a substitute for presence, but as a kind of memory repair tool.

It does not bring anyone back. It does not undo loss. But it can give form to a feeling many people have carried silently for years:

I wish I had one picture with them.

Or even more simply:

I wish I had seen them this way.

One creator wrote after sharing a generated photo with her late grandmother: “AI exists so I can finally have a photo with you.”

It is hard to read a sentence like that as just “content.” It reads more like a confession.

Fake image, real tears

Of course, there is an ethical question here.

If an image never existed, what does it mean to feel moved by it? If the scene is synthetic, are we blurring the line between truth and fabrication too easily?

That concern is valid.

I do not think we should brush it aside. Once generated images become easy to make, they can also be easy to weaponize. People can use them to deceive, distort, or manipulate. The same technology that helps someone imagine a reunion can also be used to create harm.

That tension is exactly why this topic matters.

But it is also why we should be careful not to reduce the technology to only one story.

What I saw in these posts was not confusion. It was clarity.

People knew the images were generated. Some labeled them clearly. Others discussed the process openly. And still they cried.

That tells us something important: the emotional response was not based on deception. It was based on recognition.

Not factual truth. But emotional truth.

The image was “fake” in a literal sense, yet the feeling it carried was real. And in grief, that difference matters less than we often assume.

Technology, for once, moving backward

We usually describe AI as a force that pushes forward: faster production, higher efficiency, automation, scale.

That story is not wrong. But it misses something.

In this case, the technology is doing almost the opposite. It is moving backward.

Back toward a moment that never happened. Back toward a person you never got to meet. Back toward a version of life that only survives as regret.

That is what makes this use case feel so different from the usual AI conversation. It is not about replacing labor. It is about repairing absence.

I came across one comment that I could not stop thinking about:

“I do not even care about the image. I just wish I could hear her voice.”

That line says everything.

First came the image. Then came the possibility of voice. And after that, maybe something even more immersive—something that can hold not just appearance, but presence.

This is where the conversation around GPT Image 2 becomes bigger than image generation. It becomes part of a broader question about what humans ask technology to preserve when time has already taken too much.

Maybe this is what human-centered AI looks like

It is easy to categorize GPT Image 2 as another efficiency tool.

It can replace certain kinds of work. It can compress creative workflows. It can intensify anxiety about what comes next.

All of that is true.

But it is not the whole story.

Because at the same time, people are using the very same tool for something deeply inefficient, deeply unscalable, and deeply human:

They are trying to repair something that cannot actually be repaired.

They are trying to hold on a little longer. They are trying to make memory visible. They are trying to give shape to love after loss.

And for a brief moment, it feels like the machine helps.

Not because it brings anyone back. Not because it restores the past perfectly. But because it gives grief a face.

That may be the most unexpected thing about AI right now. Not that it can imitate style, or accelerate production, or produce endlessly polished content. We already expected that. The surprise is that it can also be used for care.

For remembrance. For ritual. For the small and fragile human need to say: I was here with you, even if only in the image.

Closing thought

If the first half of GPT Image 2’s public life belongs to efficiency and anxiety, its quieter second half belongs to memory and tenderness.

That does not erase the risks. It does not remove the need for ethics, consent, or caution.

But it does complicate the story.

Not all AI feels cold. Some of it is being used to rebuild what time, loss, and silence have taken away.

And maybe that is why these images linger. Not because they are perfect. Not because they are true in the old photographic sense. But because, for a moment, they let people stand beside someone they still miss.

Today’s question: If you could use AI to recreate one moment you never had, what would it be? A small afternoon, a familiar room, a face you never got to see clearly?

Sometimes that is enough to make a story feel human again.
