A shopper bought a linen blazer online last week. Before clicking “add to cart,” she did something that would have been science fiction three years ago: she pasted the product URL into ChatGPT and asked it to “show me what this looks like on a 5’4″ woman with an athletic build.” The result wasn’t perfect — the lapel angle was slightly off, the linen texture was smoothed — but it was close enough to confirm her purchase decision. She kept the blazer. No return. No wasted shipping. No landfill contribution.

Left: Garment flat-lay | Right: AI-generated try-on result

The Convergence: How Conversational AI and Image Generation Merged Into Virtual Fitting

The most significant shift in AI virtual try-on isn’t happening in dedicated fashion-tech startups. It’s happening at the intersection of large language models (LLMs) and image generation. When a user asks ChatGPT to “show me this outfit on someone my size,” the system orchestrates a complex pipeline: it parses the natural-language request, extracts body parameters, identifies the garment from the provided image or URL, selects an appropriate generation model, and synthesizes the result.
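The front half of that pipeline can be sketched in a few lines. This is a minimal illustration, not how ChatGPT or any specific product works: the `TryOnRequest` type, the `BUILDS` vocabulary, the routing rule, and the model names are all hypothetical, and a real system would use the LLM itself rather than regular expressions to extract parameters.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class TryOnRequest:
    garment_ref: Optional[str]  # product URL found in the message, if any
    height: Optional[str]       # e.g. 5'4 (straight apostrophe assumed)
    build: Optional[str]        # e.g. "athletic"


# Hypothetical vocabulary of build descriptors, for illustration only.
BUILDS = ("athletic", "petite", "curvy", "slim")


def parse_request(message: str) -> TryOnRequest:
    """Extract the garment reference and body parameters from plain text."""
    url = re.search(r"https?://\S+", message)
    height = re.search(r"\d'\s?\d{1,2}", message)
    build = next((b for b in BUILDS if b in message.lower()), None)
    return TryOnRequest(
        garment_ref=url.group(0) if url else None,
        height=height.group(0) if height else None,
        build=build,
    )


def select_model(request: TryOnRequest) -> str:
    """Hypothetical routing: use garment conditioning only when a garment is given."""
    return "garment-conditioned" if request.garment_ref else "text-only"


def run_pipeline(message: str) -> dict:
    """Parse the request and pick a generator; synthesis itself is out of scope."""
    request = parse_request(message)
    return {"request": request, "model": select_model(request)}


result = run_pipeline(
    "Show me https://shop.example/blazer on a 5'4\" woman with an athletic build"
)
print(result["model"], result["request"])
```

The point of the sketch is the separation of stages: parsing produces a structured request, and every later stage consumes that structure rather than the raw sentence.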

This conversational interface fundamentally changes who can access virtual try-on technology. Previously, you needed to navigate a specialized app, upload images in a specific format, and understand parameters like “pose” and “background.” Now, you describe what you want in plain English. The AI handles the technical translation.

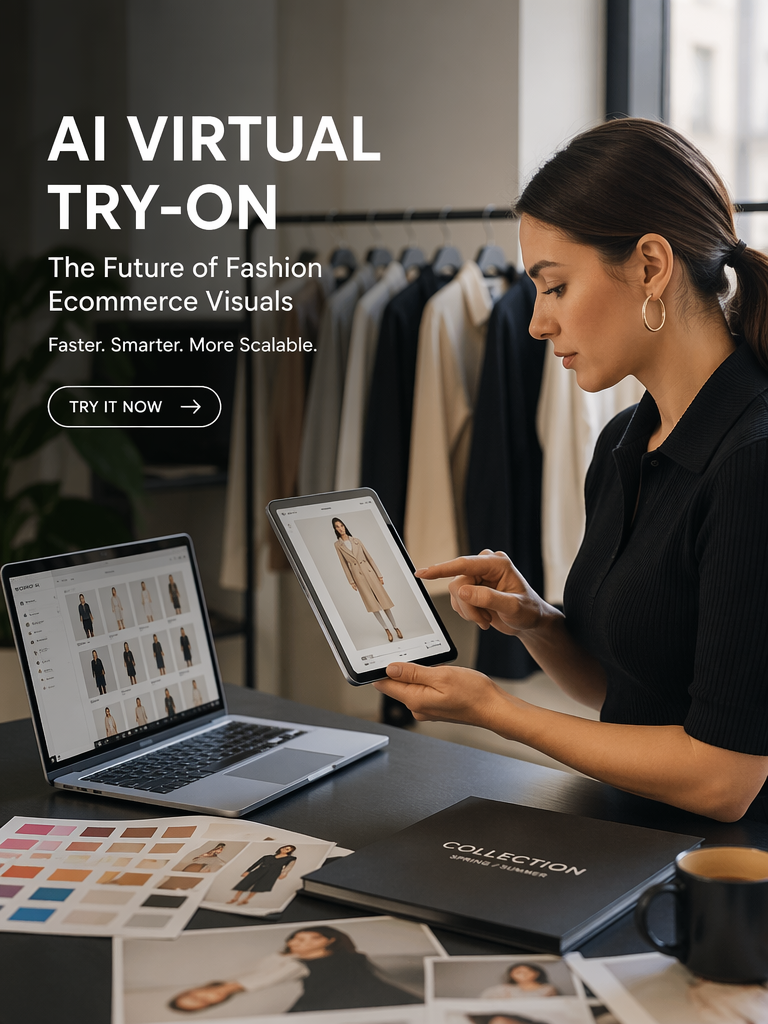

For sellers, this opens a powerful workflow: describe the shot you want in natural language, and the AI generates it. “Show this red dress on an East Asian model walking through a Tokyo street at sunset.” “Put this suit on a tall, athletic man in a minimalist office.” The creative brief becomes the production tool.

The Science Behind Multi-Modal Virtual Try-On: From Text Prompts to Pixel-Perfect Garments

Modern virtual try-on systems combine three distinct AI architectures, each handling a different modality of the input.

The Vision Encoder: Seeing the Garment

A vision transformer (ViT) processes the garment image, extracting a hierarchical feature representation that captures both global structure (silhouette, category) and local details (button placement, seam lines, fabric texture). The encoder is typically pre-trained on millions of fashion images, giving it domain-specific understanding that generic image models lack — it knows that a lapel folds differently from a collar, that denim creases differently from silk.

The Language Decoder: Understanding the Intent

An LLM processes the user’s text prompt, extracting structured parameters: target body type, pose description, environmental context, and any specific styling instructions. The critical innovation is grounded generation — the language model doesn’t just generate text; it produces a structured representation that directly conditions the image generation pipeline. “Athletic build” maps to specific body mesh parameters. “Tokyo street at sunset” maps to lighting direction and color temperature values.
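To make "structured representation" concrete, here is a small sketch of what the grounded output might look like. The `SceneConditioning` fields, the keyword tables, and the numeric values are assumptions chosen for illustration (roughly 3000 K for warm sunset light, 5500 K for neutral daylight); a production system would have the language model emit this structure directly rather than rely on keyword lookup.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class SceneConditioning:
    body_build: str             # label that a body model could map to mesh parameters
    light_elevation_deg: float  # angle of the main light above the horizon
    color_temperature_k: int    # warmth of the light, in kelvin


# Hypothetical lookup tables; values are illustrative, not calibrated.
BUILD_KEYWORDS = ("athletic", "pear-shaped", "petite")
LIGHTING_PRESETS = {
    "sunset": (10.0, 3000),
    "office": (80.0, 4000),
    "studio": (45.0, 5500),
}
DEFAULT_LIGHTING = (45.0, 5500)


def ground_prompt(prompt: str) -> SceneConditioning:
    """Map free-text phrases to the numeric fields an image generator can consume."""
    text = prompt.lower()
    build = next((b for b in BUILD_KEYWORDS if b in text), "average")
    elevation, kelvin = next(
        (values for key, values in LIGHTING_PRESETS.items() if key in text),
        DEFAULT_LIGHTING,
    )
    return SceneConditioning(build, elevation, kelvin)


conditioning = ground_prompt("Athletic build, Tokyo street at sunset")
print(json.dumps(asdict(conditioning)))
```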

The Diffusion Synthesizer: Building the Image

A conditioned latent diffusion model receives both the visual features (garment) and the textual conditioning (body + scene) and iteratively denoises a latent representation into the final image. The garment features act as an “anchor” — constraining the diffusion process to preserve fabric color, texture, and structural details while allowing the model creative freedom in how the garment drapes, folds, and interacts with the body and environment.
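The iterative denoising loop is easier to follow with a toy example. The sketch below uses the deterministic DDIM update as a stand-in sampler, and it replaces the trained, conditioned neural network with an "oracle" that already knows the garment latent. Both choices are assumptions made purely to show the mechanics of the loop; a real model has to learn the noise prediction, and its output only approximates the anchor.

```python
import math
import random


def alpha_bar(t: int, steps: int) -> float:
    """Cumulative signal level: 1.0 at t=0 (clean), close to 0 at t=steps (noisy)."""
    return 1.0 - t / (steps + 1)


def oracle_noise(x_t, garment_latent, t, steps):
    """Stand-in for a trained network: derives the noise from the known anchor."""
    a = math.sqrt(alpha_bar(t, steps))
    s = math.sqrt(1.0 - alpha_bar(t, steps))
    return [(x - a * g) / s for x, g in zip(x_t, garment_latent)]


def ddim_sample(garment_latent, steps=20, seed=0):
    """Start from random noise and denoise step by step toward the anchor."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in garment_latent]
    for t in range(steps, 0, -1):
        eps = oracle_noise(x, garment_latent, t, steps)
        a_t = math.sqrt(alpha_bar(t, steps))
        s_t = math.sqrt(1.0 - alpha_bar(t, steps))
        # Estimate the clean latent implied by the current noise prediction.
        x0_pred = [(xi - s_t * e) / a_t for xi, e in zip(x, eps)]
        # Re-noise that estimate to the (lower) noise level of step t-1.
        a_prev = math.sqrt(alpha_bar(t - 1, steps))
        s_prev = math.sqrt(1.0 - alpha_bar(t - 1, steps))
        x = [a_prev * p + s_prev * e for p, e in zip(x0_pred, eps)]
    return x


anchor = [0.5, -1.0, 2.0]  # hypothetical garment features
print(ddim_sample(anchor))
```

Because the oracle is perfect, the loop recovers the anchor almost exactly. In a real system the garment features only constrain the prediction, which is what leaves room for variation in drape and folds.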

Technical Frontier: Iterative Refinement via Conversational Feedback

The most exciting development is iterative refinement. Rather than accepting the first output, users can provide feedback: “Make the sleeves slightly shorter,” “Change the background to white,” “Try a more relaxed pose.” Each instruction adjusts the previous result rather than restarting generation from scratch. This conversational loop tends to produce better results than single-shot generation, because the user effectively serves as a real-time quality control system.
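One way to picture refinement without a restart is as a small, immutable generation state in which each piece of feedback changes only the fields it mentions. The `GenerationState` fields and the rule that the garment identity cannot change are assumptions for this sketch, not a description of any particular tool.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GenerationState:
    garment_id: str       # which garment is being rendered
    sleeve_scale: float   # 1.0 means sleeves as in the source photo
    background: str
    pose: str
    seed: int             # kept fixed so unrelated details stay stable


def apply_feedback(state: GenerationState, **changes) -> GenerationState:
    """Return a new state with only the requested fields changed."""
    if "garment_id" in changes:
        raise ValueError("refinement must not swap the garment")
    return replace(state, **changes)


first = GenerationState("blazer-001", 1.0, "street", "standing", seed=42)
second = apply_feedback(first, background="white")
third = apply_feedback(second, sleeve_scale=0.95, pose="relaxed")
print(third)
```

Keeping the seed and garment reference fixed across turns is one simple way to keep the rest of the image from changing when the user asks for a single adjustment.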

Engineering Challenges: Latency and Consistency

Current multi-modal pipelines take 10 to 30 seconds per generation, which is acceptable for pre-purchase decision-making but too slow for real-time browsing. Reducing this to sub-second inference will require architectural advances that are still active research areas, such as latent space compression for the diffusion stage and speculative decoding for the language-model stage. The consistency problem also compounds in conversational settings: each refinement iteration can drift from the original garment, so the pipeline needs explicit anchoring mechanisms.
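A simple form of anchoring is to compare the garment features of each refined image against those of the original and re-anchor when they diverge. The sketch below assumes some encoder has already turned each image into a feature vector; the vectors and the 0.9 threshold are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 means identical direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("feature vectors must be non-zero")
    return dot / (norm_a * norm_b)


def needs_reanchor(original, current, threshold=0.9):
    """Flag a refinement whose garment features drifted too far from the original."""
    return cosine_similarity(original, current) < threshold


original_features = [0.8, 0.1, 0.6]   # hypothetical encoder output, first image
after_three_edits = [0.7, 0.5, 0.2]   # hypothetical output after several refinements
print(needs_reanchor(original_features, after_three_edits))
```

When the check fires, the pipeline could regenerate from the original garment features instead of from the previous image, trading a little speed for fidelity.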

The Practical Guide: Using AI Virtual Try-On for Smarter Online Shopping

For individual consumers, AI virtual try-on is already a powerful shopping assistant — if you know how to use it effectively.

Consider how a blouse fabric catches light at the shoulder: that translucent quality is nearly impossible to judge from a flat-lay photo alone. This is what makes AI try-on valuable for purchase decisions: it reveals how fabric behaves on a body, not just how it looks on a table.

Tip 1: Provide Specific Body Descriptions

Don’t just say “try it on me.” Specify: “5’6″, size 8, pear-shaped, warm skin tone.” The more precise your description, the more useful the output. Some tools accept body measurements directly; others work better with descriptive language.

Tip 2: Request Multiple Angles

A single front-facing shot tells you about the neckline but nothing about the back drape. Ask for front, side, and back views. Each extra generation costs well under a minute; the information gain is enormous.
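If you try on garments often, the first two tips can be combined into a reusable prompt template. This is only a sketch: the `BodyDescription` fields and the prompt wording are assumptions, and different tools may respond better to different phrasing.

```python
from dataclasses import dataclass


@dataclass
class BodyDescription:
    height: str
    size: str
    shape: str
    skin_tone: str

    def to_text(self) -> str:
        """Render the description as the kind of phrase a chat tool accepts."""
        return f"{self.height}, size {self.size}, {self.shape}, {self.skin_tone} skin tone"


def build_view_prompts(garment: str, body: BodyDescription,
                       views=("front", "side", "back")):
    """Produce one prompt per requested angle, all with the same body description."""
    return [
        f"Show {garment} on a person who is {body.to_text()}, {view} view."
        for view in views
    ]


me = BodyDescription("5'6\"", "8", "pear-shaped", "warm")
for prompt in build_view_prompts("this linen blazer", me):
    print(prompt)
```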

Tip 3: Test Edge Cases

What does this dress look like when you sit down? When you raise your arms? These are the questions that cause returns. AI can approximate them — the drape won’t be physically perfect, but it’ll flag obvious problems like a too-short hemline or restrictive shoulders.

Actionable Scene Guide: AI Try-On for Different Shopping Scenarios

Workwear and Suiting

Specify your typical office lighting (fluorescent vs. natural). Request a seated pose — suits look different in a desk chair than standing. Include accessories (watch, glasses) in your description for a more realistic preview.

Evening and Occasion Wear

Request low-light/evening ambiance. Movement poses (walking, turning) show how formal fabrics drape dynamically. If the garment has embellishment (sequins, beading), note that AI may over-smooth these details — factor in that the real garment will have more texture.

Swimwear and Intimates

These categories demand the highest body accuracy because fit is everything. Use tools that accept measurements rather than descriptive prompts. Request both front and side views. Be aware that stretch fabrics are rendered less accurately than woven ones.

Expert Consulting FAQ

Q1: Is AI virtual try-on accurate enough to eliminate returns?

Not eliminate, but significantly reduce. Early data from retailers using AI try-on shows a 20-35% reduction in return rates for categories where the tool is offered. The biggest impact is on “style fit” — whether the garment suits the customer’s aesthetic — rather than “size fit,” which still requires accurate measurement data.

Q2: Can I use ChatGPT specifically for virtual try-on, or do I need a dedicated tool?

ChatGPT's built-in image generation can produce rough visualizations, but dedicated virtual try-on tools produce significantly better garment fidelity. Use ChatGPT for quick “should I buy this?” checks and dedicated tools when you need production-quality imagery.

Q3: How do I know if an AI try-on result is trustworthy?

Look for three signals: (1) garment color matches the product listing, (2) fabric texture is visible (not smoothed to plastic), (3) body proportions look natural. If any of these are off, the result may be misleading. Treat AI try-on as an informed preview, not a guarantee.
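The first signal, color match, is the easiest to check by number rather than by eye. The sketch below compares the listing's hex color with an average color sampled from the garment area of the render, using plain Euclidean distance in RGB. The sample values and the threshold of 30 are assumptions; RGB distance is only a rough proxy for perceived color difference.

```python
import math


def hex_to_rgb(hex_color: str):
    """Convert '#RRGGBB' to an (r, g, b) tuple of integers."""
    value = hex_color.lstrip("#")
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))


def color_distance(rgb_a, rgb_b) -> float:
    """Euclidean distance between two RGB colors (0 means identical)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(rgb_a, rgb_b)))


def color_matches(listing_hex: str, rendered_rgb, threshold: float = 30.0) -> bool:
    """True when the rendered garment color stays close to the listing color."""
    return color_distance(hex_to_rgb(listing_hex), rendered_rgb) <= threshold


listing_color = "#C8B89A"         # hypothetical listing color for a linen blazer
rendered_sample = (198, 180, 150)  # hypothetical average color from the render
print(color_matches(listing_color, rendered_sample))
```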

Q4: Will AI try-on work for vintage or one-of-a-kind garments?

Yes, and this is actually one of its strongest use cases. Vintage sellers typically have one sample and can’t do extensive photography. A single well-lit photo can generate multiple model shots, making vintage listings significantly more appealing.

Q5: What privacy concerns should I have about uploading my photos for AI try-on?

Reputable tools typically process your image only for generation and don’t store it long-term. However, always check the privacy policy. Prefer tools that process images locally on your device or guarantee data deletion. Never upload identifiable photos to unverified services.