The shift is not about better pictures

In 2026, the conversation has changed.

People are no longer shocked that AI can make images. That part is normal now. What matters more is how those images fit into creative work. Do they support an idea? Do they help a team move faster? Do they make a concept clearer?

That is the real question.

So the focus is no longer just image quality. It is workflow, intention, and reuse.

And that is why gpt-image-2 feels relevant.

Why this shift matters

The older way of working with image generation was one-shot. You wrote a prompt. You got a result. Then you either kept it or started over.

That approach is fading.

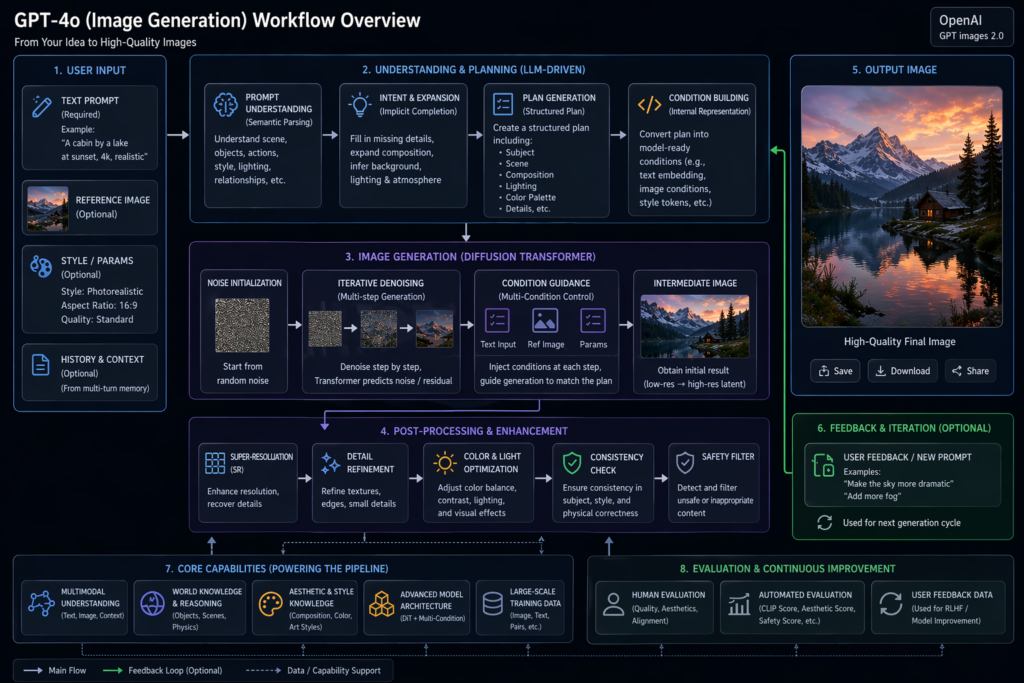

Now the process is more layered. The first image is rarely the final one. Instead, it becomes part of a chain. You test an idea. You refine it. You change the angle. You try again.

gpt-image-2 fits that kind of process well because it suits step-by-step refinement, not just one-off output.

From generation to iteration

This is the biggest change in the way people use image tools.

The value is no longer in one lucky hit. The value is in the ability to move from rough idea to clear visual without wasting energy.

That sounds simple, but it changes the whole workflow.

Why iteration feels more natural now

Iteration lowers pressure.

You do not need to write the perfect prompt on the first try. You can begin with something rough. Then you can adjust tone, structure, framing, and detail as the work becomes clearer.

That makes the process feel lighter. It also makes it easier to think visually, because the tool gives you room to experiment.
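To make the idea concrete, here is a minimal sketch of a prompt that grows by refinement instead of being rewritten from scratch. It is only an illustration: `PromptDraft`, `refine`, and `render` are hypothetical names invented for this example, the prompt text is made up, and nothing here calls gpt-image-2 or assumes anything about its actual interface. It only builds prompt strings.

```python
from dataclasses import dataclass, field


@dataclass
class PromptDraft:
    """A rough base idea plus the refinements layered on top of it (hypothetical helper)."""

    base: str
    refinements: list[str] = field(default_factory=list)

    def refine(self, note: str) -> "PromptDraft":
        # Return a new draft so every earlier version stays available for comparison.
        return PromptDraft(self.base, self.refinements + [note])

    def render(self) -> str:
        # Join the base idea and all refinements into a single prompt string.
        return ", ".join([self.base, *self.refinements])


# Start rough, then adjust tone and framing one step at a time.
rough = PromptDraft("a cyclist on a city street at dawn")
v2 = rough.refine("warm, quiet tone")
v3 = v2.refine("low angle, wide framing")

print(v3.render())
```

Because each step returns a new draft, going back to an earlier version is as cheap as moving forward, which is the point of refining instead of restarting.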

A quick reality check

This is important, though: iteration does not remove taste. It just gives taste more room to work.

That means the model is not replacing creative judgment. It is making judgment easier to apply.

For creators, speed matters only when it is usable

Solo creators have a very specific problem.

They need to move fast, but they also need the result to feel intentional.

That is hard.

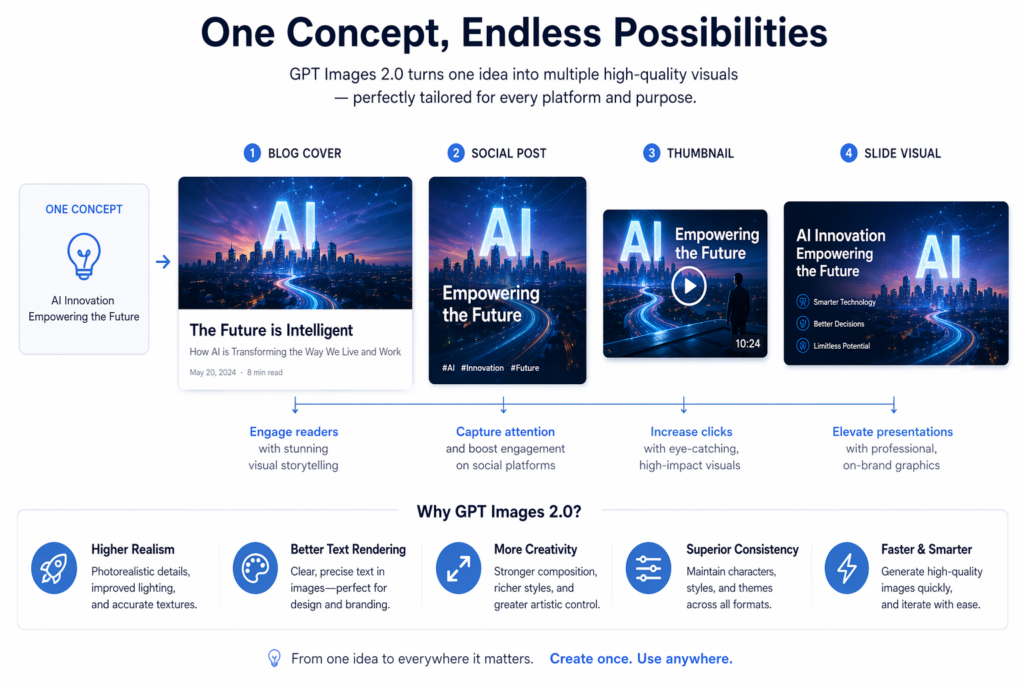

A tool like gpt-image-2 becomes useful because it can help them adapt one idea into many forms. A single concept may need to become a thumbnail, a header, a social image, or a pitch visual. The model helps keep the thread intact while the format changes.

The difference between “generated” and “made”

There is a real difference between content that feels generated and content that feels made.

The second one usually has a clearer point of view. It feels edited. It feels chosen. It feels like someone made decisions along the way.

That is why the best results come from creators who use the model with direction. They are not just asking for output. They are shaping a message.

For teams, consistency is the bigger advantage

Teams rarely need one great image.

They need ten related ones.

That is a very different problem. It is not about isolated beauty. It is about consistency across channels. A landing page, a campaign ad, a social post, and a presentation slide should all feel like they belong to the same system.

gpt-image-2 is useful here because it supports that kind of repetition without forcing every asset to start from zero.

When a system matters more than a single image

In 2026, the strongest creative work often looks less like a masterpiece and more like a system.

One concept. Multiple formats. Same visual language.

That is what gives a brand or creator a more professional presence. It also saves time, because the team does not have to reinvent the wheel every time.

So the model’s value is not only output. It is continuity.
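One way to picture "one concept, multiple formats, same visual language" is as a shared description that every asset inherits, with only the format notes changing. The sketch below is an assumption-laden illustration, not a description of how gpt-image-2 works: `Concept`, `FORMATS`, and `build_prompts` are hypothetical names, the format notes are placeholder guesses, and no image API is called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Concept:
    """The shared visual language that every asset should carry (hypothetical)."""

    subject: str
    style: str
    palette: str


# Placeholder format notes, assumed for illustration only.
FORMATS = {
    "landing_page": "wide hero image, space left for a headline",
    "campaign_ad": "vertical layout, single bold focal point",
    "social_post": "square crop, centered composition",
    "presentation_slide": "landscape layout, minimal background",
}


def build_prompts(concept: Concept, formats: dict[str, str] = FORMATS) -> dict[str, str]:
    # The shared part is identical in every prompt; only the format note varies.
    shared = f"{concept.subject}, {concept.style}, palette: {concept.palette}"
    return {name: f"{shared}. Format: {note}" for name, note in formats.items()}


concept = Concept(
    subject="a paper boat on calm water",
    style="flat illustration with soft shadows",
    palette="teal and sand",
)
prompts = build_prompts(concept)

for name, prompt in prompts.items():
    print(f"{name}: {prompt}")
```

Keeping the concept in one place means a change to the palette or style carries through to every format, so no asset has to start from zero.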

The ecosystem now feels more specialized

This is another important change.

Different models are starting to play different roles.

Some are better when mood matters.

Some are better when text matters.

Some are better when control matters.

That makes the space more useful, not less.

gpt-image-2 sits in the middle of these roles. That position is useful because it gives people flexibility: it is not locked into one narrow creative style, and it can move across briefs and still remain practical.

What that means in real life

A creator may use one model for striking art direction, another for text-heavy graphics, and gpt-image-2 for the middle ground where speed, iteration, and structure matter most.

That is not confusion. That is specialization.

And specialization is usually a sign that a tool category is maturing.

The audience is changing too

People now notice patterns faster.

They know when an image feels too generic. They know when a visual looks rushed. They know when something is polished but empty.

That means the standard has gone up.

The bar is no longer “looks okay.” The bar is “feels intentional.”

That is good news for serious creators, because it rewards taste, clarity, and restraint.

A useful way to think about gpt-image-2

Do not think of it as a magic machine.

Think of it as a creative layer.

It helps move an idea forward. It helps make a concept visible. It helps turn rough direction into something usable. But it still needs human judgment to decide what matters and what can be left out.

A short checklist for better use

- Keep the idea simple.

- Define the mood early.

- Swap vague words for specific ones about tone, framing, and detail.

- Refine instead of restarting.

- Choose the output for the audience, not for the novelty.

That is how the model becomes more effective.

Final thought

The real story in 2026 is not that AI can make images faster.

The real story is that image tools are becoming part of a broader creative system.

That is where gpt-image-2 fits best.

It is not the loudest tool in the room. It is not always the most visually explosive either.

But it is increasingly the kind of tool that serious creators can build around.

And that is why it matters.
