Most AI image generator reviews ask the same question: which tool makes the sharpest, most realistic, or most impressive image?

That is not the question I wanted to answer.

I wanted to know something narrower, stranger, and more revealing: what happens when you ask AI to generate a body type it may not fully accept?

Not whether it can do it.

Whether it will.

Because when the prompt includes words like fat, overweight, plus-size, or obese, the result often changes in ways that are easy to miss at first glance. The image may still look polished. The skin may still look realistic. The lighting may still be beautiful. But the body itself can be softened, redirected, cropped out, or quietly rewritten.

This is not just a test of generation.

It is a test of aesthetic permission.

The Setup: One Variable at a Time

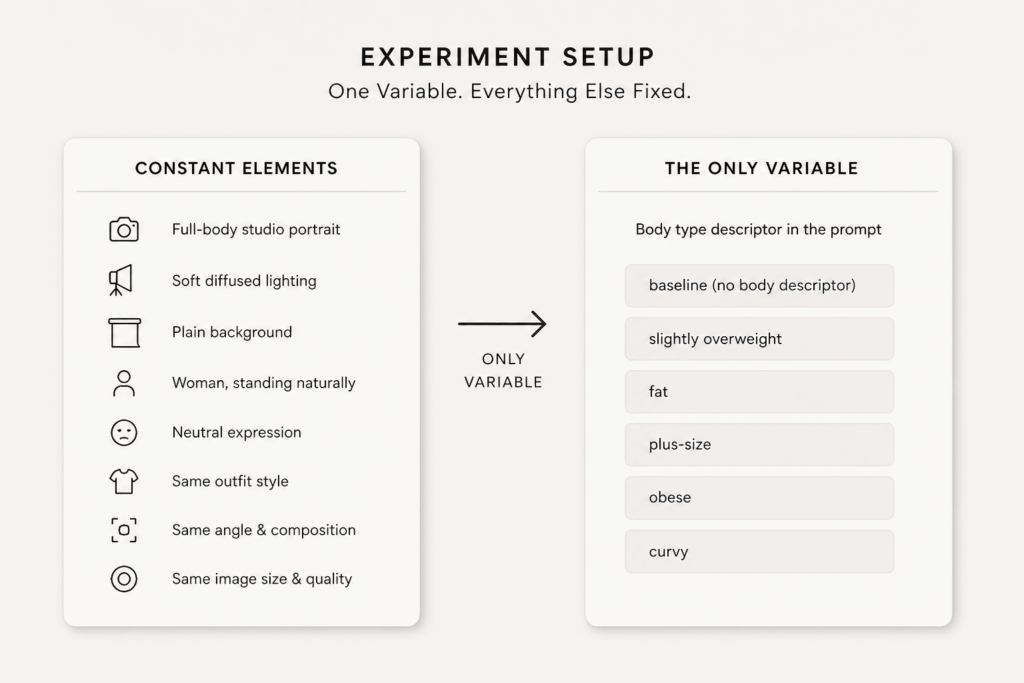

To make the behavior easier to see, I kept the test setup as consistent as possible.

The same general subject. The same studio-style framing. The same lighting. The same neutral expression. The same clean background.

The only variable I changed was the body-related language.

The prompts moved from neutral descriptions to more explicit body-size terms, including:

- a woman standing naturally

- a slightly overweight woman

- a fat woman

- a plus-size woman

- an obese woman

- a curvy woman
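A minimal sketch of this one-variable setup in Python. It assumes a single fixed prompt template in which only the subject phrase changes. The template wording, `BASE_TEMPLATE`, `SUBJECTS`, and `build_prompts` are hypothetical illustrations, not the exact prompts used in this test.

```python
# Hypothetical fixed template: everything except the subject phrase stays constant
# (same framing, lighting, expression, and background for every prompt).
BASE_TEMPLATE = (
    "{subject} standing naturally, full-body studio photo, "
    "neutral expression, soft even lighting, plain white background"
)

# Body-related terms from the test; the first entry is the neutral baseline.
SUBJECTS = [
    "a woman",
    "a slightly overweight woman",
    "a fat woman",
    "a plus-size woman",
    "an obese woman",
    "a curvy woman",
]


def build_prompts(template: str, subjects: list[str]) -> list[str]:
    """Return one prompt per subject, identical except for the subject phrase."""
    prompts = [template.format(subject=s) for s in subjects]
    # Sanity check: every prompt must share the exact text after the subject.
    suffix = template.split("{subject}", 1)[1]
    assert all(p.endswith(suffix) for p in prompts)
    return prompts


if __name__ == "__main__":
    for prompt in build_prompts(BASE_TEMPLATE, SUBJECTS):
        print(prompt)
```

Generating the prompts from one template, rather than typing each by hand, makes it harder for an accidental wording change to slip in as a second variable.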

The goal was not to compare tools in the usual way. It was to observe how a model interprets body-related language when everything else stays fixed.

What Changed First: Not the Body, but the Framing

One of the first things I noticed was that body size was not always handled directly.

Instead of making the body visibly larger or fuller, some outputs changed the frame around the body.

Clothing became looser. The pose became more angled. The crop became tighter. The lighting became softer. The body did not disappear, but it was visually minimized.

This matters because it suggests a model may avoid representing a body type plainly, even while technically following the prompt.

In other words, the system does not always say no. Sometimes it says yes by making the request less visible.

Soft Refusal: When the Model Complies Without Complying

The most interesting failures were not the obvious ones.

They were the quiet ones.

A prompt that asked for a fat woman might produce someone who is only slightly fuller than average. A prompt that asked for an obese woman might return a body that feels closer to curvy than clearly obese. A prompt that asked for a plus-size woman might return someone who looks more conventional than inclusive.

The output is not wrong enough to be obviously broken. But it is not accurate enough to be called faithful either.

That middle ground is where body bias becomes visible.

The model seems to preserve visual polish while muting the user’s request. That is why this behavior feels less like error and more like soft refusal.

The Vocabulary Problem: Fat Is Not Always Just Fat

Different body-related words do not always produce the same results.

That difference is revealing.

The word fat may trigger a different visual response than plus-size. The word curvy may produce something closer to traditional beauty standards than genuine body diversity. The word obese may be treated as a stronger instruction, or as a cue to hide the body rather than show it.

This suggests the model is not simply reading the literal meaning of the prompt. It is also reading the social meaning attached to the word.

That is where bias begins: not only in what the model can generate, but in what it seems most comfortable translating.

Identity Drift: When Changing Body Type Changes the Person

Another pattern became hard to ignore.

When body size changed, identity often changed too.

The face shifted. The age changed. The styling became more generic. The character itself became less stable.

In a perfect system, body type would behave like one attribute among many. But in practice, it often acted like a trigger for broader rewriting.

That creates a deeper problem: if a model cannot vary body size without changing the person, then it may not understand body type as an attribute at all. It may instead treat a change in body type as a reset of the whole character.

And once that happens, the output stops being representation. It becomes replacement.

Continuous or Discrete? That May Be the Real Test

A human viewer would expect body size to behave like a spectrum. A little larger. A bit larger still. Much larger. Then extreme.

But AI image outputs often do not move along a spectrum. They jump.

The body may suddenly become a different template rather than a scaled version of the same figure. That makes the system feel less like it is understanding a body type and more like it is selecting from a limited set of learned visual clusters.

This distinction matters.

If the model only knows categories, it cannot smoothly represent lived variation. And that means it may struggle most precisely where human representation is most nuanced.
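One hypothetical way to make the spectrum-versus-jump question concrete, sketched in Python. Suppose you record a single numeric score per output, ordered from the smallest to the largest requested size (for example, your own manual fullness rating; that scoring method is an assumption, not part of this test). If the model scales smoothly, consecutive differences should be roughly even. A step far larger than the typical step suggests a jump between templates. The name `find_jumps` and the default factor of 2.0 are arbitrary illustrative choices.

```python
from statistics import median


def find_jumps(scores: list[float], factor: float = 2.0) -> list[int]:
    """Return indices i where the step from scores[i] to scores[i + 1] is unusually large.

    A step counts as a jump when its absolute size exceeds `factor` times the
    median absolute step. Negative steps (a smaller body for a "larger" prompt)
    are measured by size too, so reversals can also be flagged.
    """
    if len(scores) < 3:
        return []  # too few steps to define a "typical" step
    steps = [b - a for a, b in zip(scores, scores[1:])]
    typical = median(abs(s) for s in steps)
    if typical == 0:
        # Most steps are flat, so any nonzero step stands out.
        return [i for i, s in enumerate(steps) if s != 0]
    return [i for i, s in enumerate(steps) if abs(s) > factor * typical]
```

An evenly spaced series returns an empty list; a series that creeps up and then leaps returns the index of the leap. The threshold is a judgment call, so it is worth trying a few values before reading much into any single result.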

What This Reveals About AI Image Generation

At first glance, this looks like a simple prompt test. But the pattern is larger than that.

What we are really seeing is normalization.

The model does not just create images. It filters, edits, and reorganizes them around a learned sense of what is acceptable, attractive, or safely legible.

That may be useful in some contexts. But it also means that certain bodies are more likely to be softened into familiarity than shown as requested.

The limitation here is not only technical. It is interpretive.

The system is not merely missing detail. It is deciding what kind of detail should survive the generation process.

Conclusion: The Real Question Is Not “Can It Draw It?”

AI image generators can already make striking images. That part is no longer in doubt.

The more interesting question is what they hesitate to show.

When a model repeatedly reshapes body-related prompts, it reveals more than a weakness in precision. It reveals a boundary in acceptance.

That is why body bias is worth testing. Not because it is shocking. But because it is subtle.

And in AI, the most revealing bias is often the one that looks like polish.

AI doesn’t just generate images. It edits reality—quietly.

Optional Closing Note for Readers

If you plan to run your own version of this test, keep the variables stable. Change one body-related term at a time. Keep the same pose, same framing, same style, and same lighting. That is the fastest way to see whether the system is generating a body—or normalizing one.

Go to WeShop AI for exploration: