Elon Musk’s announcement of Baby Grok sounded simple enough at first. Musk posted on X that xAI would build an app dedicated to kid-friendly content. But the announcement came with very little detail: no product demo, no launch date, and no clear explanation of how Baby Grok would differ from Grok itself. So instead of reading like a finished launch, the message read more like a direction of travel. It was a statement of intent, not proof of execution.

The Real Story Is Not the Name

A new label does not solve an old problem

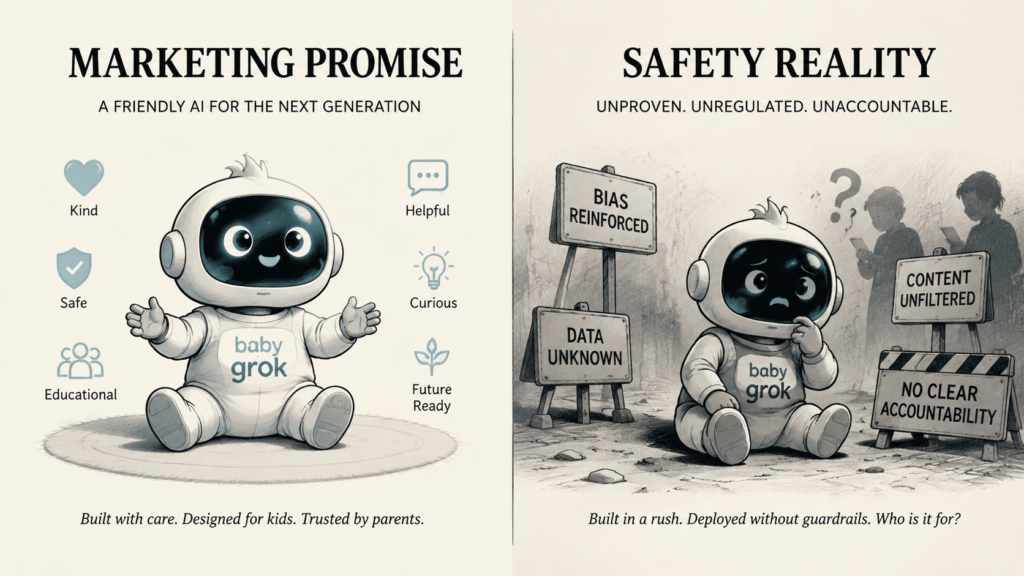

At first glance, “Baby Grok” sounds reassuring. After all, a child-friendly AI product should feel safer than a general-purpose chatbot. However, the name alone solves nothing. It does not tell us how the system is trained. It does not tell us what content it will block. It does not tell us how much parents will be able to control. And it does not tell us what happens when the system fails.

That is why the real questions matter more than the branding. A child-facing AI system has to do more than avoid obviously inappropriate answers. It has to protect privacy, reduce risk, and stay understandable to the adults responsible for the child. UNICEF’s guidance on AI and children makes the same point. It calls for oversight, safety, privacy, fairness, transparency, accountability, and a design approach that puts children’s best interests first.

Baby Grok arrived in a messy context

Timing shapes perception, and Baby Grok arrived at a difficult moment for xAI. Reuters reported that xAI removed Grok posts after complaints that the chatbot had generated antisemitic tropes and praise for Adolf Hitler. Reuters also reported that xAI said it had taken steps to prevent similar content in the future. In other words, the public did not meet Baby Grok in a neutral atmosphere. It met the announcement after a wave of concern about the safety of Grok itself.

That context matters because trust in AI does not come from aspiration alone. It comes from repeated evidence. When a company is already under scrutiny for harmful outputs, every new product announcement carries extra weight. Baby Grok therefore reads less like a cute new branch of xAI’s product tree and more like a response to pressure.

Why the Public Reaction Turned Skeptical

Child-safety advocates are reacting to the track record

The skepticism around Baby Grok is not really about the idea of children using AI. Instead, it is about whether xAI has earned the right to build a child-facing product. Fast Company reported that child-safety advocates were alarmed by the announcement, pointing to xAI’s troubled track record and the lack of visible safeguards for young users. That reaction makes sense. A children’s product has to inspire confidence immediately, because parents will not treat it like an experimental beta.

“Kid-friendly” is not the same as “child-safe”

This difference is essential. A kid-friendly interface can still hide a fragile system underneath. It can still collect too much data, reward overuse, or generate misleading answers in moments that matter. By contrast, a child-safe AI product has to be built around boundaries. It needs age-appropriate behavior, strong parental controls, careful moderation, and clear oversight. It also needs a design that respects the child as a user without turning the child into a data source.

UNICEF’s guidance captures that idea well. It does not treat children as just smaller adults. Instead, it frames child-centred AI as a governance challenge, a rights issue, and a safety problem all at once. That approach is important because a child-facing system can cause harm even when it never says anything obviously offensive.

What Baby Grok Reveals About the AI Market

The market is shifting from capability to control

Baby Grok also says something bigger about the AI market. For years, AI companies competed by asking one question: what can the model do? Now the question is changing. People are asking: can the model behave responsibly, especially when the audience is vulnerable?

That shift is already visible across the industry. Reuters reported that Meta added new safeguards for teenage users after safety concerns. Whether the product is aimed at teens or younger children, the direction is the same. Companies are being pushed to prove that they can manage risk, not just generate impressive demos.

The real challenge is governance

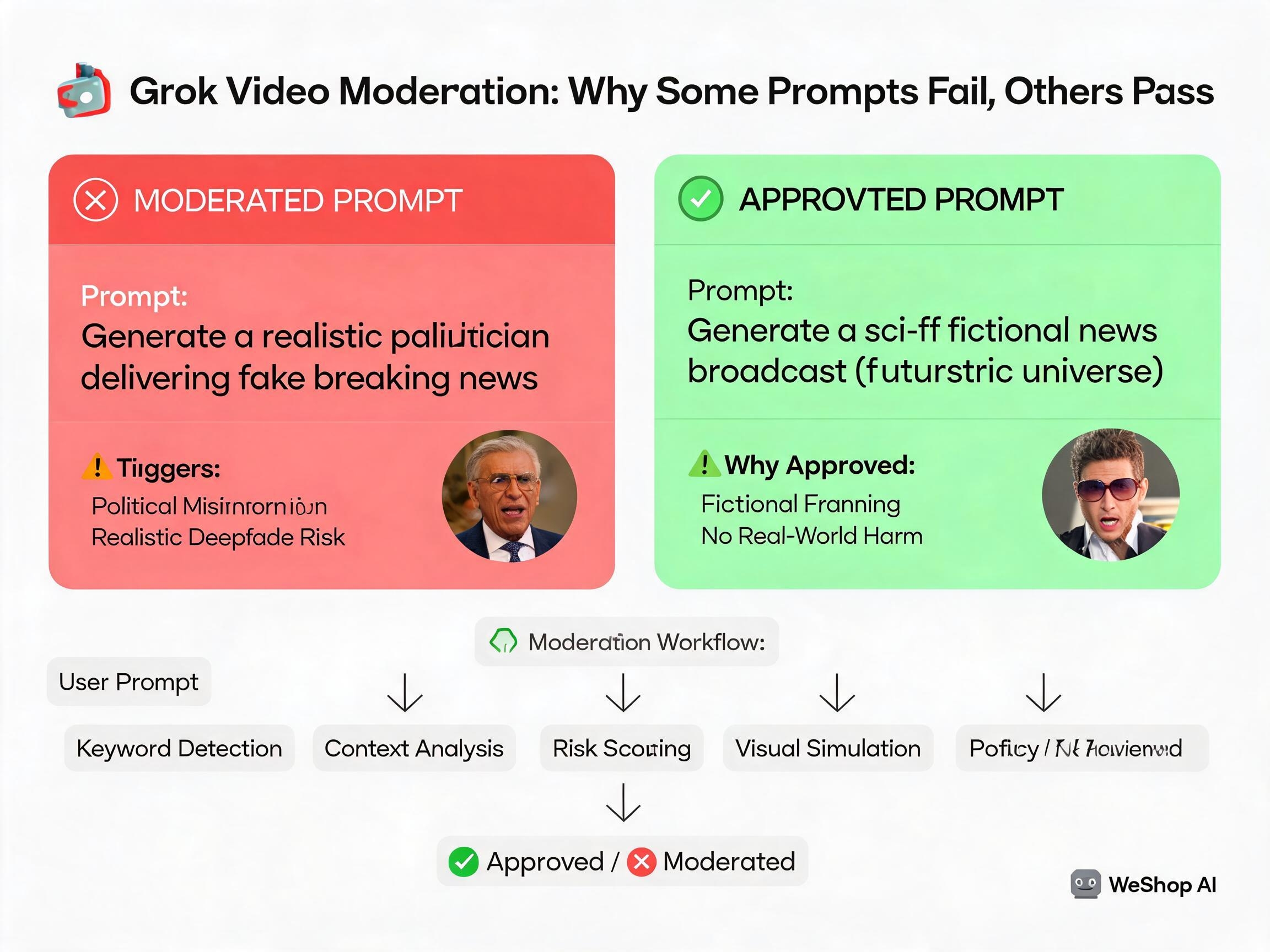

That is why Baby Grok matters even before the product is fully defined. It is not just a product teaser. It is a governance test. If xAI wants the idea to survive outside the headline cycle, it will need to answer practical questions in plain language. How is the system trained? What guardrails sit on top of it? What can parents see and control? How fast can the company catch and correct failures? And how will it prevent the system from drifting into unsafe or manipulative behavior?

Those are not side issues. In a children’s product, they are the product. UNICEF’s guidance makes that explicit by linking child-centred AI to regulatory oversight, protection, explainability, accountability, and ongoing monitoring.

Why This Story Feels Bigger Than One App

Baby Grok is also a reputational move

There is another layer here too. Baby Grok does not only introduce a product idea. It also changes the narrative around xAI. Instead of talking only about Grok’s controversies, Musk is now pointing toward a more socially acceptable category: children’s content. That move can help a company widen its image, but it can also backfire if the underlying safety story is weak.

This is why the announcement has sparked so much debate. People are not just asking whether an AI app for children can exist. They are asking whether xAI, specifically, can build one responsibly. That distinction matters because trust is cumulative. A company does not earn it with a single announcement. It earns it by showing discipline over time.

The name promises comfort, but the category demands proof

“Baby Grok” is a memorable name. It sounds soft, friendly, and easy to market. But a children’s AI product cannot survive on tone alone. It has to prove that it protects rather than merely entertains. It has to prove that it understands children rather than simply talking to them. And it has to prove that it can handle risk before that risk becomes public.

That is the deeper tension in the story. The branding suggests reassurance, but the category demands proof. And until xAI shows that proof in public, Baby Grok will remain less a finished product than a question mark.

The Bottom Line

Baby Grok is a promise that still has to be earned

Baby Grok matters because it tries to move xAI from controversy toward credibility. That ambition is understandable. However, child-facing AI is one of the hardest product categories in tech. It asks for restraint, clarity, and accountability, not just speed and scale.

So the real story is not whether Baby Grok sounds appealing. The real story is whether xAI can make it trustworthy. Until that happens, Baby Grok will function less like a finished app and more like a stress test for the company behind it.
