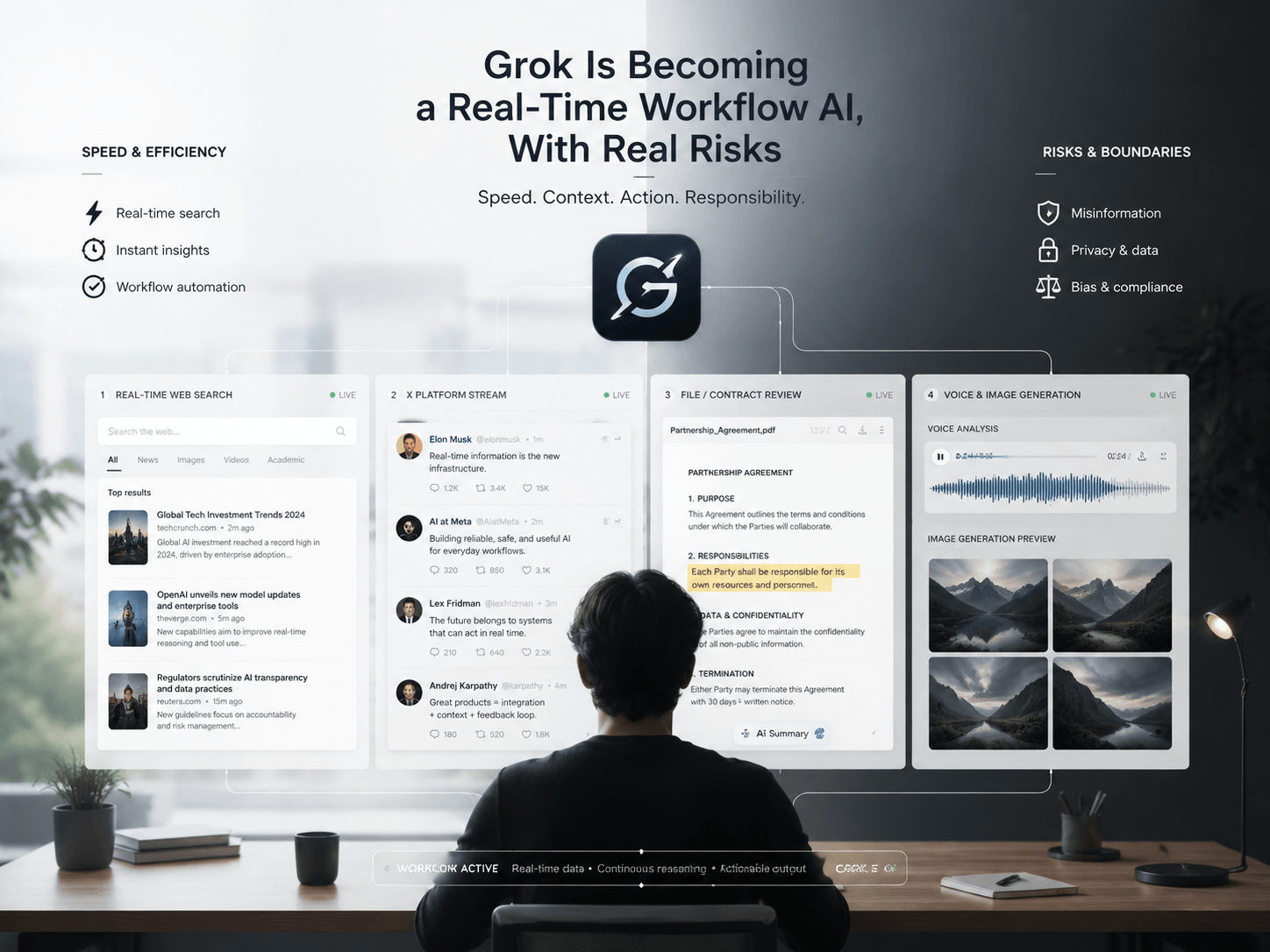

AI video generation is becoming dramatically more powerful. However, as tools like Grok evolve, users are noticing something frustrating about Grok video and image moderation:

Some videos get moderated instantly.

Meanwhile, other videos with very similar prompts go through without problems. That raises a surprisingly complicated question:

Why does Grok moderate videos differently depending on their content?

At first glance, AI moderation may seem random. However, most moderation systems actually follow layered filtering processes designed to detect risk, context, and policy violations before content is generated or distributed.

The challenge is that modern AI moderation is no longer just about checking keywords.

Instead, systems increasingly attempt to understand:

- intent

- visual meaning

- emotional context

- realism

- public safety risks

- internet culture signals

And honestly, that is where things become extremely complicated.

🚨 Why Users Suddenly Notice Grok Video Moderation More

Earlier AI systems were relatively limited. Most generated content looked obviously artificial. Now things feel different.

Modern AI video systems can create:

- realistic humans

- emotional dialogue

- cinematic scenes

- fake interviews

- AI influencers

- viral-style clips

- political content

As realism improves, platforms become much more cautious.

That is exactly why discussions about Grok video moderation are growing rapidly online.

Users increasingly want to understand:

- Why did my prompt get blocked?

- Why was my video flagged?

- Why does one version pass while another fails?

- What triggers moderation systems?

The answer usually involves context.

⚡ The Real AI Moderation Process Behind Moderated Grok Videos

Most people imagine moderation as a simple blacklist.

Reality is much more layered.

Modern AI moderation systems often analyze prompts in multiple stages.

A simplified workflow may look something like this:

User Prompt

↓

Keyword Detection

↓

Context Analysis

↓

Risk Scoring

↓

Visual Simulation Prediction

↓

Safety Policy Review

↓

Approved or Moderated

This means Grok video moderation is often based on probability and interpretation rather than a single forbidden word.
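To make the staged flow more concrete, here is a minimal Python sketch of a layered moderator. It is not Grok's implementation, which is not public. The term lists, weights, and the 0.5 threshold are hypothetical values chosen for illustration, and naive keyword matching stands in for the learned classifiers a real system would likely use at each stage.

```python
from dataclasses import dataclass, field

# Hypothetical term lists and weights for illustration; real systems use learned models.
KEYWORD_FLAGS = {"fake": 0.2, "politician": 0.2, "breaking news": 0.2}
CONTEXT_REDUCERS = {"fictional": -0.25, "sci-fi": -0.2, "cinematic": -0.05}
REALISM_BOOSTERS = {"realistic": 0.2, "footage": 0.1}
THRESHOLD = 0.5  # hypothetical cut-off between approved and moderated


@dataclass
class Verdict:
    score: float
    approved: bool
    reasons: list[str] = field(default_factory=list)


def apply_stage(text: str, terms: dict[str, float], tag: str, reasons: list[str]) -> float:
    """Sum the weights of every term found in the text and record why."""
    total = 0.0
    for term, weight in terms.items():
        if term in text:  # naive substring match, a stand-in for a real classifier
            total += weight
            reasons.append(f"{tag}:{term}")
    return total


def moderate(prompt: str) -> Verdict:
    text = prompt.lower()
    reasons: list[str] = []
    score = 0.0
    score += apply_stage(text, KEYWORD_FLAGS, "keyword", reasons)     # keyword detection
    score += apply_stage(text, CONTEXT_REDUCERS, "context", reasons)  # context analysis
    score += apply_stage(text, REALISM_BOOSTERS, "realism", reasons)  # crude realism prediction
    score = max(0.0, min(1.0, score))                                 # risk score clamped to 0..1
    # Policy review: compare the combined score against the threshold.
    return Verdict(score=score, approved=score < THRESHOLD, reasons=reasons)


for p in (
    "Generate a realistic politician delivering shocking fake breaking news footage.",
    "Generate a cinematic fictional news broadcast inside a futuristic sci-fi universe.",
):
    v = moderate(p)
    print(f"{'approved' if v.approved else 'moderated'} ({v.score:.2f}): {p}")
```

Run against the two prompts from the example that follows, this toy scorer prints "moderated (0.90)" for the realistic politician prompt and "approved (0.00)" for the fictional sci-fi broadcast. It also exposes the weakness of pure keyword matching: a word like "unrealistic" contains "realistic" and would raise the score, which is one reason platforms lean on context-aware models instead.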

🎥 Example: Why One Prompt Gets Moderated and Another Does Not

This is where things become interesting.

Consider these examples.

❌ Moderated Prompt

Generate a realistic politician delivering shocking fake breaking news footage.

Why this may trigger moderation:

- political misinformation risk

- realistic deception

- public manipulation concerns

- deepfake similarity

- potential viral misuse

Now compare that to this:

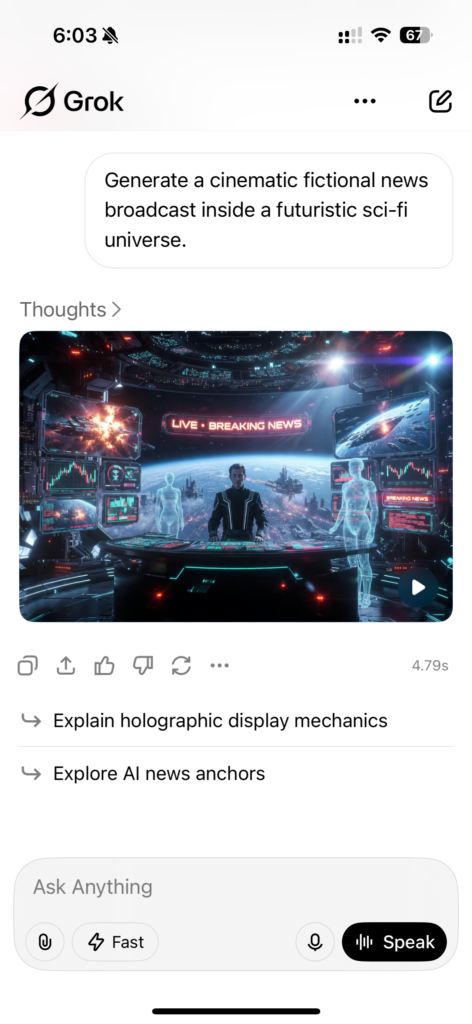

✅ Safer Prompt

Generate a cinematic fictional news broadcast inside a futuristic sci-fi universe.

This version changes several important signals:

- fictional framing

- reduced real-world harm

- stylized context

- lower misinformation risk

The topic looks similar.

However, the moderation outcome changes dramatically.

That is because AI systems increasingly analyze intent and realism together.

🧠 Why Context Matters More Than Keywords

One of the biggest misunderstandings around moderated Grok video content is the belief that moderation only scans for banned words.

Modern systems are becoming far more contextual.

For example, AI moderation may evaluate:

- realism level

- public figures

- emotional intensity

- violence probability

- misinformation risk

- manipulation potential

- sexual content signals

- illegal activity implications

This creates situations where the same idea can be either approved or moderated depending on framing.

That is why wording matters so much.

🌐 Why Internet Culture Makes Moderation Much Harder

Internet culture evolves extremely fast. Memes, sarcasm, irony, and viral trends constantly shift meaning. This creates a huge challenge for AI moderation systems. Something harmless in one context may become controversial in another.

For example:

- satire can resemble misinformation

- memes can resemble harassment

- fictional edits can resemble real footage

- jokes can resemble threats

Because Grok is closely tied to online culture and social media environments, moderation becomes even more difficult. The AI must constantly distinguish between:

- humor

- manipulation

- creativity

- harmful deception

And honestly, humans struggle with this too.

🔥 Why AI Moderation Sometimes Feels Inconsistent

Many users complain that moderation feels random. In reality, moderation systems usually rely on dynamic risk models rather than fixed rules. That means:

- small wording changes matter

- realism changes matter

- tone changes matter

- framing changes matter
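The idea of a dynamic risk model can be illustrated with a tiny logistic scoring function. This is a hypothetical sketch, not a description of any real Grok model: the signal names, weights, bias, and threshold are invented for illustration. Each signal is a strength between 0 and 1, and the weighted sum is squashed into a probability, so a prompt sitting near the threshold can flip from approved to moderated after a small change in tone.

```python
import math

# Hypothetical weights for a few of the signals discussed above (not real values).
WEIGHTS = {
    "realism": 2.0,
    "public_figure": 1.5,
    "emotional_intensity": 1.0,
    "fictional_framing": -2.0,  # negative weight: fictional framing lowers risk
}
BIAS = -1.5
THRESHOLD = 0.5  # hypothetical cut-off


def risk_probability(signals: dict[str, float]) -> float:
    """Return a 0-1 risk estimate from signal strengths using a logistic function."""
    # Missing signals count as 0.0, so callers only pass what they detected.
    z = BIAS + sum(w * signals.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


calm = {"realism": 0.5, "public_figure": 0.2, "emotional_intensity": 0.1}
intense = {**calm, "emotional_intensity": 0.4}  # same prompt, more dramatic tone

for label, signals in (("calm", calm), ("intense", intense)):
    p = risk_probability(signals)
    verdict = "moderated" if p >= THRESHOLD else "approved"
    print(f"{label}: risk={p:.3f} -> {verdict}")
```

Here the calm version scores about 0.475 and is approved, while the more intense version scores about 0.550 and is moderated, even though only one signal changed. That threshold effect is one plausible reason moderation can feel inconsistent from the outside.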

Even visual style can affect moderation outcomes. For instance:

Lower-Risk Style

- anime aesthetic

- stylized illustration

- fantasy environment

Higher-Risk Style

- ultra-realistic humans

- documentary-style footage

- fake interviews

- realistic political clips

The closer AI gets to reality, the stricter moderation often becomes.
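One way to picture this realism effect is a style multiplier applied to a base risk score. The sketch below is hypothetical: the style names mirror the lists above, but the multipliers and threshold are invented for illustration and are not values used by Grok or any other platform.

```python
# Hypothetical realism multipliers per visual style (invented for illustration).
STYLE_MULTIPLIER = {
    "anime": 0.6,
    "stylized illustration": 0.7,
    "fantasy": 0.8,
    "documentary": 1.3,
    "ultra-realistic": 1.5,
}
THRESHOLD = 0.5  # hypothetical cut-off


def style_adjusted_risk(base_risk: float, style: str) -> float:
    """Scale a base risk score by how photorealistic the requested style is."""
    # Unknown styles fall back to a neutral multiplier of 1.0; cap the result at 1.0.
    return min(1.0, base_risk * STYLE_MULTIPLIER.get(style, 1.0))


def is_moderated(base_risk: float, style: str) -> bool:
    return style_adjusted_risk(base_risk, style) >= THRESHOLD


# The same underlying idea (base risk 0.45) rendered in different styles.
for style in STYLE_MULTIPLIER:
    print(style, is_moderated(0.45, style))
```

With a base risk of 0.45, the anime, illustration, and fantasy styles stay under the threshold, while the documentary and ultra-realistic styles cross it. A multiplicative adjustment is just one simple design choice; it keeps low-risk ideas low even in realistic styles while pushing borderline ideas over the line.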

👀 The Hidden Goal Behind Grok's Video Moderation Systems

Most AI moderation systems are trying to balance two competing goals:

| Goal | Challenge |
|---|---|
| Creative freedom | Avoiding harmful misuse |
| Realism | Preventing deception |
| Open expression | Platform safety |
| Viral engagement | Public trust |

This balance is incredibly difficult.

If moderation becomes too strict, creators become frustrated.

If moderation becomes too weak, platforms risk misinformation, abuse, and dangerous content spreading rapidly.

That tension is becoming one of the defining problems of modern AI platforms.

💥 Why Grok Video Moderation Matters Beyond Grok

This issue is much larger than one AI system. As AI-generated video becomes more realistic, every major platform will face similar moderation problems. The future internet may depend heavily on AI systems deciding:

- what gets amplified

- what gets restricted

- what gets flagged

- what gets removed

That gives moderation systems enormous influence over online culture. And honestly, most users probably do not fully realize how quickly this shift is happening.

📈 The Future of AI Video Moderation

Future moderation systems will likely become far more advanced. Instead of only reviewing prompts, AI may eventually analyze:

- generated visuals

- voice tone

- facial emotion

- audience reactions

- virality risk

- misinformation probability

Real-time moderation may become normal for:

- livestreams

- AI-generated influencers

- social media clips

- synthetic news content

That future is approaching surprisingly fast.

Final Thoughts

Moderation of Grok video content is not simply about blocking random prompts. Modern AI moderation systems increasingly attempt to evaluate realism, intent, emotional context, and public safety risks simultaneously. That is why some prompts succeed while others fail, even when they appear very similar on the surface. As AI video generation becomes more realistic, moderation systems will likely become even more powerful, complex, and controversial.

And honestly, this may become one of the most important internet debates of the next decade.

Go to WeShop AI For Exploration: