One of the biggest mistakes in AI image discussions is assuming every creator needs the same thing.

A meme-page operator, a fashion influencer, a startup founder, and a YouTube educator are not solving the same visual problem.

That is exactly why the “best AI image model” conversation has become fragmented.

If you are a fashion or beauty influencer

The public consensus still leans heavily toward Midjourney.

The reason is not technical accuracy. It is emotional presentation.

Midjourney consistently produces:

- cinematic lighting

- luxury-style compositions

- editorial framing

- dreamy color grading

- “expensive-looking” imagery

In r/midjourney, BB_InnovateDesign wrote:

“Nothing has surpassed Midjourney.”

Meanwhile, CryptographerCrazy61 said there are visual effects they “can’t get with any other model.” (reddit.com)

That kind of feedback appears constantly in creator communities. Even when people criticize Midjourney’s typography or workflow limitations, they still come back to its visual atmosphere.

For Instagram-heavy creators, aesthetic impact still matters enormously.

If you make educational content, carousel posts, or quote graphics

This is where GPT-image-2 and Ideogram become much stronger choices.

Creators increasingly care about:

- readable text

- accurate spelling

- layouts

- slide consistency

- infographic generation

- banner graphics

Historically, AI image models struggled badly with typography. That weakness alone made them unreliable for educational creators.

That is changing quickly.

In r/OpenAI discussions about GPT-image-2, biopticstream specifically praised its ability to output proper widescreen layouts, while other users highlighted cleaner text handling and better composition control. (reddit.com)

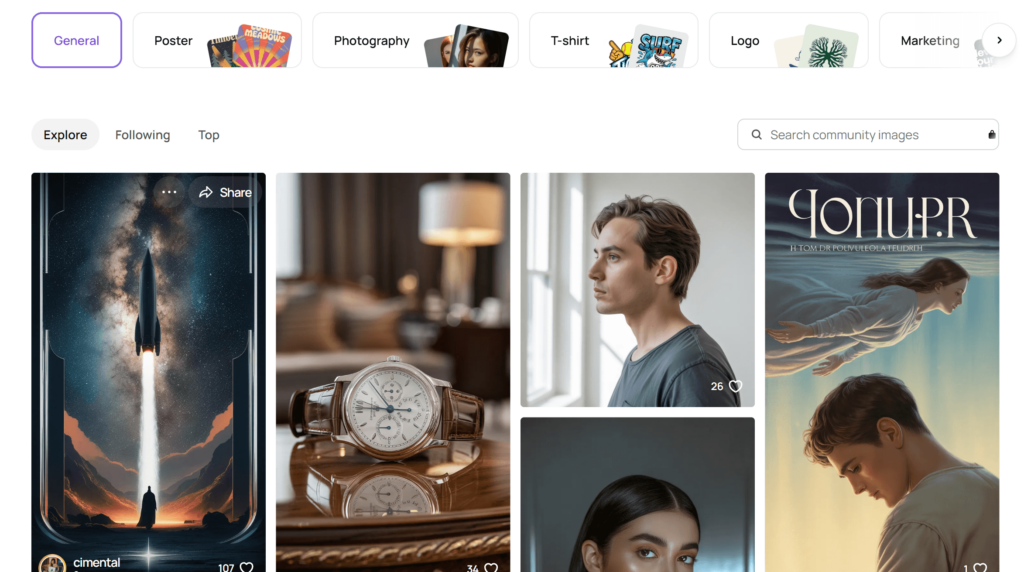

At the same time, Ideogram continues to dominate discussions about text rendering.

In r/ideogramai, fotogneric wrote:

“Ideogram is much better.”

And dergachoff summarized the difference perfectly:

“MJ is more artsy, Ideogram is more precise.” (reddit.com)

That single distinction explains why so many creators now use multiple models together.

Midjourney creates the emotional hook.

Ideogram makes the text readable.

GPT-image-2 assembles a usable final graphic.

If you work with brands or clients

The priorities shift again.

At that point, creators care more about:

- consistency

- editability

- licensing confidence

- Photoshop integration

- scalable workflows

That is exactly where Adobe Firefly and Recraft become relevant.

Adobe keeps positioning Firefly around commercial-safe workflows and creative software integration. (adobe.com)

But public feedback still shows frustration around image quality.

In r/OpenAI, headhunter2637 said even “a simple logo” looked “horrible every time,” while geronimojito argued Firefly’s quality lags behind Midjourney and Gemini. (reddit.com)

That criticism matters, because right now Adobe’s advantage is not necessarily image beauty. It is ecosystem trust.

For agencies and sponsored creators, that can still be enough.

Recraft sits in a similar category but with a more design-system-oriented philosophy.

Instead of trying to create viral fantasy imagery, Recraft focuses on:

- vector graphics

- reusable brand assets

- template consistency

- scalable social content systems

That makes it unusually attractive for:

- startup founders

- social media managers

- marketing creators

- personal brands

In r/StableDiffusion, EnvironmentalSeat486 even called Recraft V3 “the best model” for vector-style work. (reddit.com)

If you are a power user or AI-heavy creator

This is where FLUX and Stable Diffusion enter the conversation.

These models attract creators who care about:

- reference consistency

- deep editing

- workflow customization

- visual identity systems

- local generation

- advanced prompting

The language surrounding these models is noticeably different from mainstream AI tools.

People do not talk about “easy prompts.”

They talk about:

- checkpoints

- prompt adherence

- training

- anatomy

- control

- pipelines

In r/StableDiffusion, darkside1977 argued that FLUX has “better prompt comprehension,” while stddealer joked:

“At least it can do hands.” (reddit.com)

That sounds funny, but it reflects a very real shift in expectations.

For advanced creators, the conversation is no longer:

“Can AI generate images?”

It is:

“Which workflow gives me the most control?”

And that question increasingly matters for creators building recognizable visual brands.

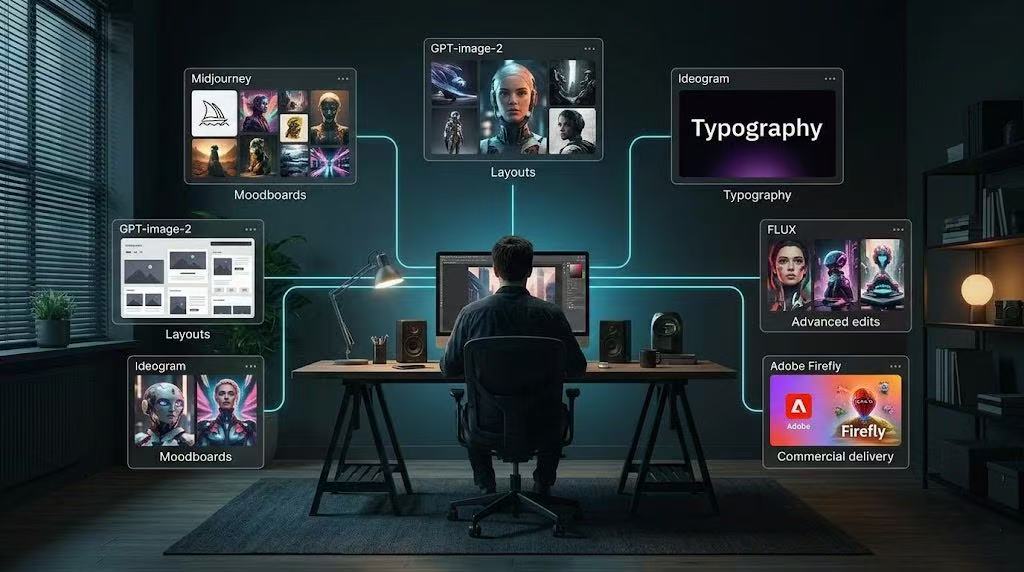

The Biggest Trend in 2026: Hybrid Workflows

The most interesting discovery from researching creator communities is that almost nobody serious relies on a single model anymore.

Instead, influencers are building layered workflows.

A creator might use:

- Midjourney for visual mood

- Ideogram for typography

- GPT-image-2 for final layouts

- Firefly for Photoshop edits

- FLUX for controlled variations

That modular workflow appears constantly across Reddit discussions, creator forums, and professional reviews.
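For creators who script their pipelines, the layered workflow above can be written down as a simple routing table. The following is a minimal Python sketch under stated assumptions, not any tool's real API: the stage names, the `WORKFLOW_STAGES` table, and the `plan_workflow` helper are all hypothetical, and the model assignments simply mirror the example list above.

```python
# Hypothetical routing table: each stage of a hybrid workflow is paired with
# the model the example list assigns to it. The order is the pipeline order.
WORKFLOW_STAGES = [
    ("visual mood", "Midjourney"),
    ("typography", "Ideogram"),
    ("final layout", "GPT-image-2"),
    ("Photoshop edits", "Firefly"),
    ("controlled variations", "FLUX"),
]


def plan_workflow(needed_stages):
    """Return (stage, model) pairs for the requested stages, in pipeline order."""
    needed = set(needed_stages)
    known = {stage for stage, _ in WORKFLOW_STAGES}
    unknown = needed - known
    if unknown:
        # Fail loudly instead of silently skipping a stage with no model.
        raise ValueError(f"No model assigned for: {sorted(unknown)}")
    return [(stage, model) for stage, model in WORKFLOW_STAGES if stage in needed]


if __name__ == "__main__":
    # A quote-graphic creator might only need mood and typography.
    for stage, model in plan_workflow(["typography", "visual mood"]):
        print(f"{stage}: {model}")
```

Keeping the table as an ordered list rather than a dictionary preserves the order in which the stages run, so the plan always starts with the visual mood and ends with variations, whatever order the stages were requested in.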

The industry is slowly moving away from:

“What is the best model?”

And toward:

“What is the best combination?”

That shift may end up being more important than any single model release.

Final Verdict

If the question is:

“Which AI image model is best for social media influencers overall?”

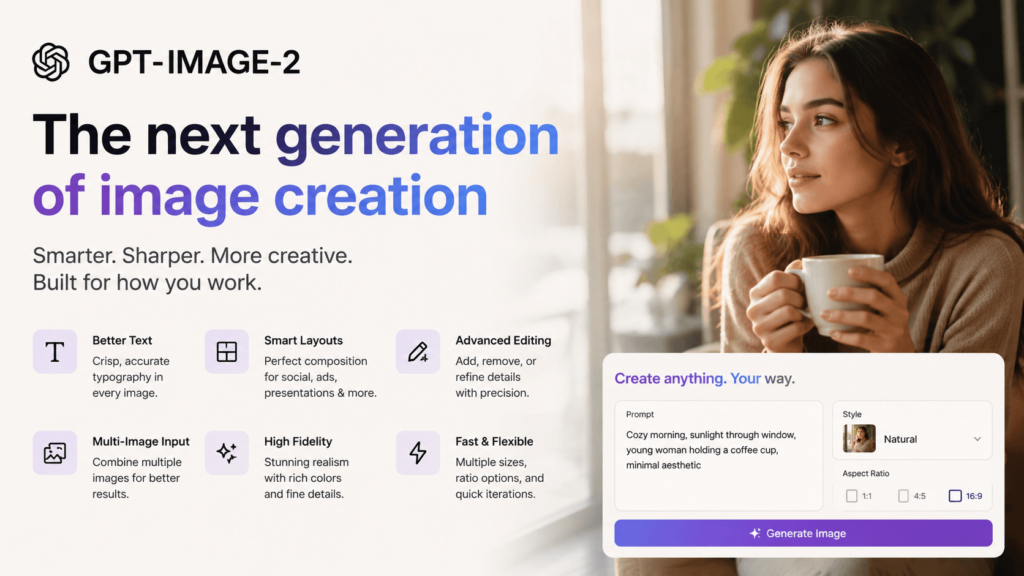

The most balanced answer right now is probably GPT-image-2.

Not because it always produces the prettiest image.

Not because it dominates every benchmark.

But because it understands the actual structure of modern content creation:

- text

- edits

- layouts

- banners

- consistency

- fast iteration

- publish-ready assets

Midjourney still dominates visual mood.

Ideogram still dominates typography.

FLUX and Stable Diffusion still dominate control.

But GPT-image-2 currently feels the closest to a true “social-media-native” image model.

And based on the public creator feedback across Reddit, review sites, and community discussions, that distinction is becoming more important every month.
