Social media creators are moving into a new phase of AI image generation.

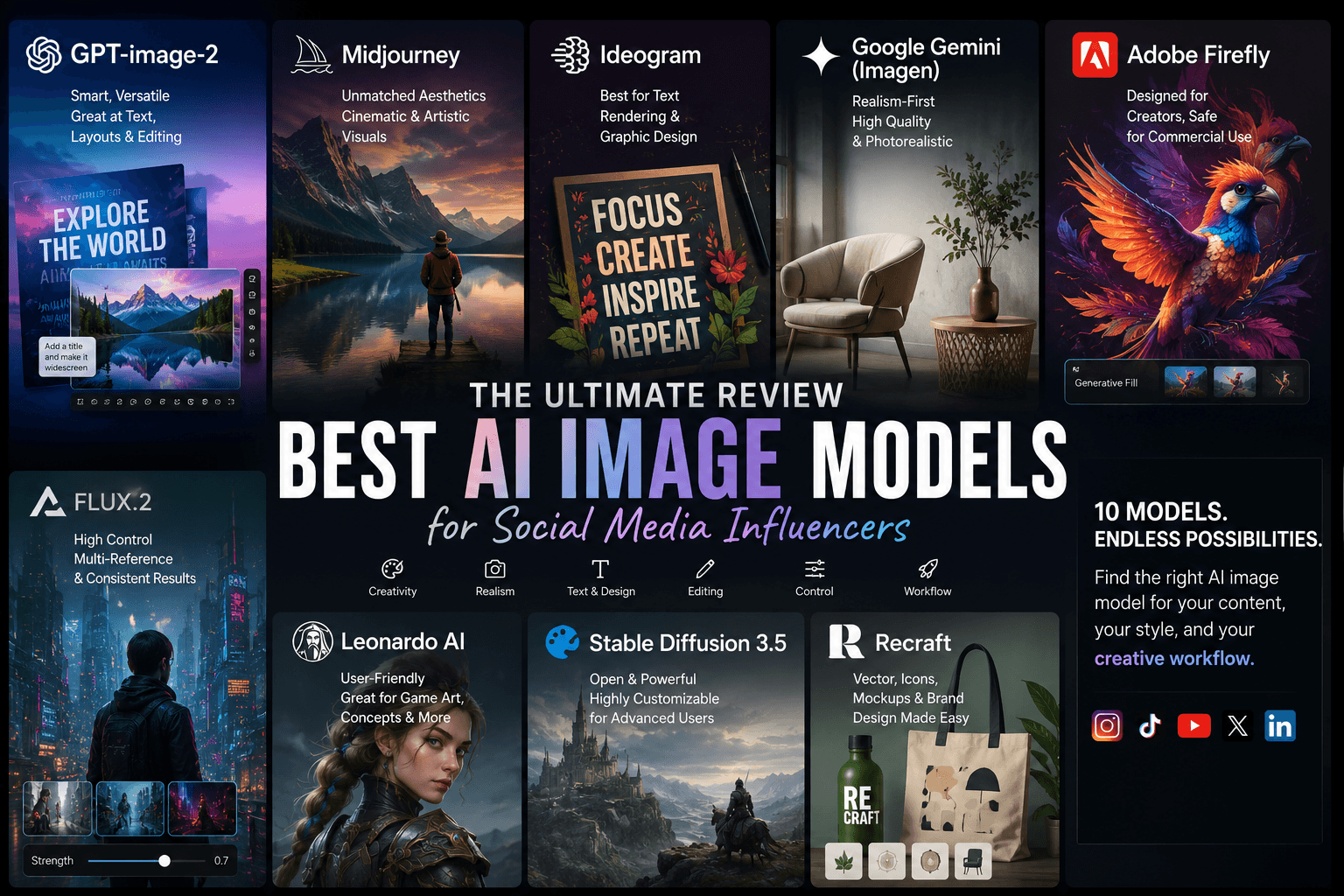

The real story is no longer which model makes the prettiest image. It is which combination of models helps creators move faster, keep their visual identity consistent, and ship content that feels ready for social platforms. Adobe’s Firefly now includes partner models from OpenAI and Google, with support planned for Ideogram, Luma AI, Pika, and Runway. OpenAI says the new ChatGPT Images model supports more precise edits and generates images up to 4x faster. Google’s Gemini image documentation describes support for up to 14 reference images. Black Forest Labs positions FLUX.2 around multi-reference editing and high-fidelity generation. Together, those moves point toward the same conclusion: the market is shifting from single-model loyalty to layered workflows.

Why one model is no longer enough

Creators do not just need “good images.” They need different tools for different tasks. A social post may require a strong concept, readable text, a clean layout, a consistent face, a brand-safe edit, and a fast final pass. That is why hybrid workflows make more sense than ever. OpenAI’s image model focuses on precise edits and faster generation, Ideogram emphasizes text rendering, Firefly leans into brand-safe production, and FLUX pushes multi-reference control. The strengths are real, but they live in different layers of the pipeline.

That shift also shows up in creator discussions. On r/OpenAI, bithatchling said GPT Image 2 handled “layout instructions” without the usual messy overlaps, and biopticstream said it could “actually output 16:9 images.” Those are small comments, but they reveal a big change in expectations: creators are starting to judge models by whether they fit real publishing needs, not just by whether they can make a pretty picture.

GPT-image-2: the assembly layer

OpenAI positions ChatGPT Images 2.0 as a model for more precise editing, consistent details, and faster generation. For creators, that matters because social content usually depends on composition, typography, and quick iteration rather than on one-off artistic surprise. In other words, GPT-image-2 feels less like a pure art generator and more like a content assembly tool.

The community feedback supports that reading. In the r/OpenAI thread, Alex__007 framed the launch as a major jump in complexity, while bithatchling praised the model’s ability to keep a technical diagram clean. The 16:9 output that biopticstream highlighted makes the model especially relevant for thumbnails, banners, and social layouts.

For influencer workflows, that means GPT-image-2 works best when the job requires structure. It can help draft carousel covers, ad creatives, presentation-style visuals, and any image where the final layout matters as much as the image itself. In a hybrid stack, it is often the model that ties everything together.

Midjourney: still the aesthetic benchmark

Midjourney still owns the part of the market that people describe as “aura.” It makes images feel cinematic, stylish, and emotionally rich. That is why fashion, beauty, lifestyle, and luxury-oriented creators still lean on it when they want mood and visual identity more than strict layout precision.

Community comments are consistent with that picture. In r/midjourney, Potices wrote that the “quality is unmatched,” and that is how many creators still talk about the model. It gives them atmosphere first and structure second. That makes it excellent for moodboards, hero visuals, and premium-looking concepts, but less convenient when a post needs clean text or exact formatting.

That tradeoff is exactly why hybrid workflows emerged. Midjourney can set the tone, but another model often has to handle the text or the final layout. When creators split those jobs, they usually move faster and get cleaner results.

Ideogram: the typography specialist

Ideogram’s own documentation makes its role very clear. The model excels at rendering text in images, especially for posters, logos, titles, labels, headers, and designs that combine visuals with words. That makes it one of the most practical tools for social media creators who need text to look intentional rather than broken.

The user feedback is just as direct. In r/ideogramai, sanquility said, “I use ideogram way more,” and added that it handles text more consistently than Midjourney. In the same thread, TheEfbel said the model renders quickly enough for regular use. That combination of readable text and practical speed explains why Ideogram keeps showing up in creator workflows.

So if Midjourney gives a post its mood, Ideogram gives it its message. For quote cards, title slides, promo graphics, and thumbnail text, that difference matters a lot.

Adobe Firefly: the brand-safe handoff layer

Adobe is taking a very different route. Instead of chasing a single artistic identity, Firefly now pushes a broader creative platform story. Adobe says Firefly can generate and edit images, audio, video, and designs, and Reuters reported that the company has added partner models from OpenAI and Google while also planning support for Ideogram, Luma AI, Pika, and Runway.

That makes Firefly useful in a different way. It becomes the safe, commercial, production-friendly layer in the workflow. It is not always the most exciting generator, but it can help creators and teams stay inside Adobe’s editing ecosystem and handle revisions more smoothly.

The community, however, stays skeptical about output quality. In r/OpenAI, geronimojito said Firefly’s quality “lags behind Gemini and Midjourney honestly,” and headhunter2637 said a simple logo looked “horrible every time.” Those reactions show the split clearly: Firefly attracts users with workflow trust, but many creators still prefer stronger output elsewhere.

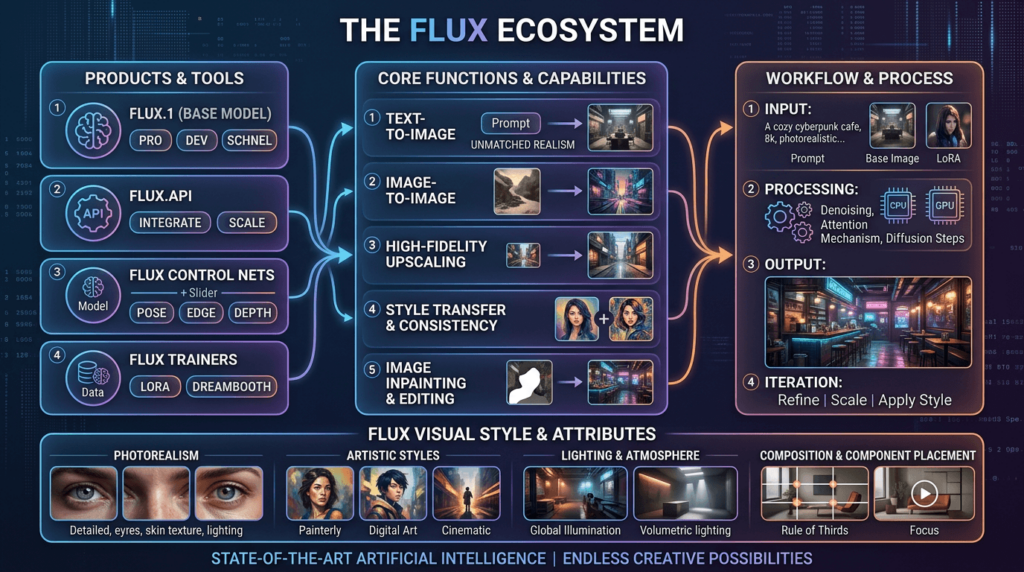

FLUX: the control-first option

Black Forest Labs positions FLUX.2 around text-to-image generation with multi-reference editing, color control, and high-fidelity results. That makes it especially useful when creators want more than a single prompt result. They want control, iteration, and the ability to work from references.

The community discussion reflects that balance between praise and caution. In r/StableDiffusion, Far_Celery1041 said FLUX’s prompt comprehension is “quite good,” while centrist-alex called it “generic and limited despite the cool text ability and prompt adherence.” That tension says a lot about the model’s role. People respect its control, but they still see it as one tool in a broader workflow, not the whole workflow itself.

For advanced creators, that is a feature, not a flaw. FLUX makes the most sense when a creator wants precise edits, reference-based generation, or a model that can feed into a more technical production stack.

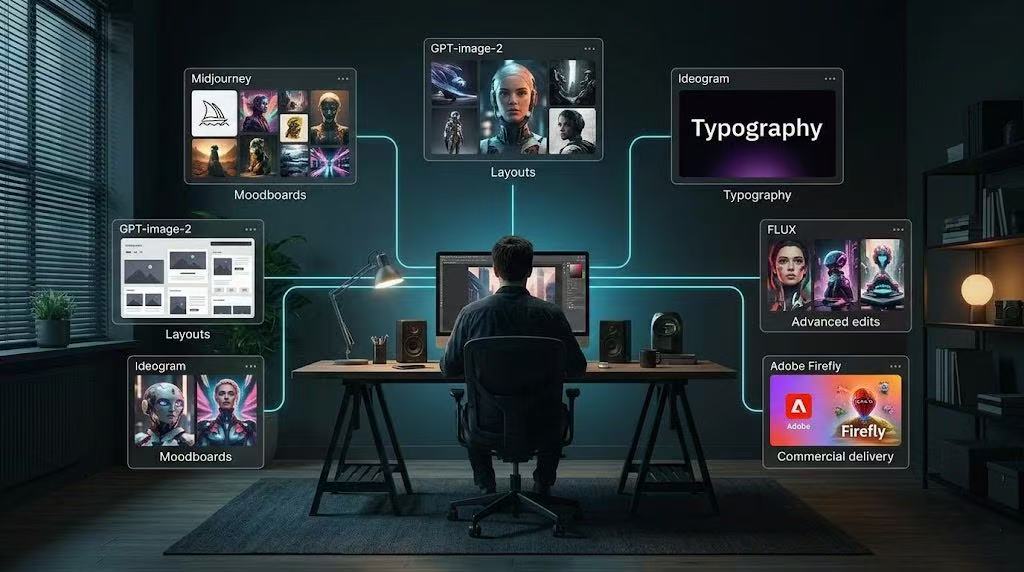

What hybrid workflows look like in practice

The strongest creative systems in 2026 will probably look less like one big model and more like a stack.

A creator might use Midjourney to explore mood, GPT-image-2 to assemble a structured layout, Ideogram to fix typography, Firefly to refine the piece for a commercial client, and FLUX to push reference control and consistency when the project gets more complex. Google’s Gemini also has a strong case for a place in this stack: its documentation describes support for up to 14 reference images, which fits the logic of multi-step visual editing well.
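For readers who think in code, the stack above can be written down as a small planning table. This is only an illustrative sketch: the `Stage` class, the `need` tags, and `plan_workflow` are hypothetical names invented here, nothing in it calls a real model API, and it covers just the five tools named in the example workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    tool: str  # model name as used in this article
    job: str   # the role the article assigns to that model
    need: str  # hypothetical tag describing when the stage applies


# Order follows the example workflow; the tags are illustrative assumptions.
STACK = [
    Stage("Midjourney", "explore mood", "mood"),
    Stage("GPT-image-2", "assemble a structured layout", "layout"),
    Stage("Ideogram", "fix typography", "text"),
    Stage("Firefly", "refine for a commercial client", "commercial"),
    Stage("FLUX", "push reference control and consistency", "references"),
]


def plan_workflow(needs):
    """Return the stages whose tag appears in `needs`, kept in stack order."""
    wanted = set(needs)
    return [stage for stage in STACK if stage.need in wanted]


if __name__ == "__main__":
    # A quote card that needs atmosphere and readable text.
    for step, stage in enumerate(plan_workflow({"mood", "text"}), start=1):
        print(f"{step}. {stage.tool}: {stage.job}")
```

The point of the sketch is the shape, not the tooling: each project picks only the layers it needs, and the order of the stack stays the same.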

Final takeaway

The biggest trend in 2026 is not a single model release. It is the collapse of the old idea that one model should do everything.

The new creator stack works because each model does one job well. Midjourney brings mood. Ideogram brings text. GPT-image-2 brings structure and editing. Firefly brings brand-safe workflow integration. FLUX brings control and multi-reference refinement. Gemini adds a strong reference-driven layer for iterative visual work. That conclusion comes from the official feature sets and from the way creators describe these tools in public threads.

So the real question in 2026 is no longer, “Which AI image model wins?”

It is, “Which workflow helps you create faster, cleaner, and more consistently?”
