The $500 Billion “Guessing Game” is Over

Imagine this: It’s 11 PM on a Tuesday. A potential customer is scrolling through their social media feed and spots your brand’s newly launched spring trench coat. It looks breathtaking on the 5’11” runway model featured in your campaign. The lighting is cinematic. The styling is impeccable.

They click “Add to Cart,” thrilled about their purchase. But three days later, the package arrives, and the excitement instantly evaporates. The coat doesn’t drape the same way on their 5’3” frame. The olive-green color washes out their skin tone. Within 24 hours, the item is back in a box, headed for your returns department.

We’ve all been there. If you run a fashion e-commerce brand, you know this story all too well.

In the traditional model, e-commerce is essentially a $500 billion guessing game. Customers “guess” if the fabric will stretch; they “guess” if the silhouette flatters their unique body type. When they guess wrong, your profit margins take the hit. Currently, fashion e-commerce is plagued by an average return rate hovering between 30% and 50%.

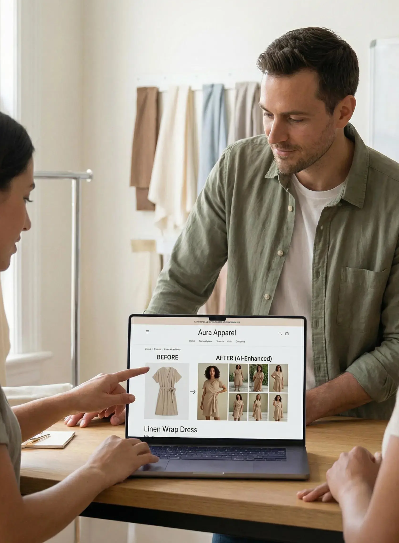

For decades, the industry’s solution was simply to throw more money at the problem: book more expensive studios, hire more diverse models, and shoot hundreds of extra photos. But in 2026, the era of the “representative model” is dying. The era of the “personalized digital render” has arrived.

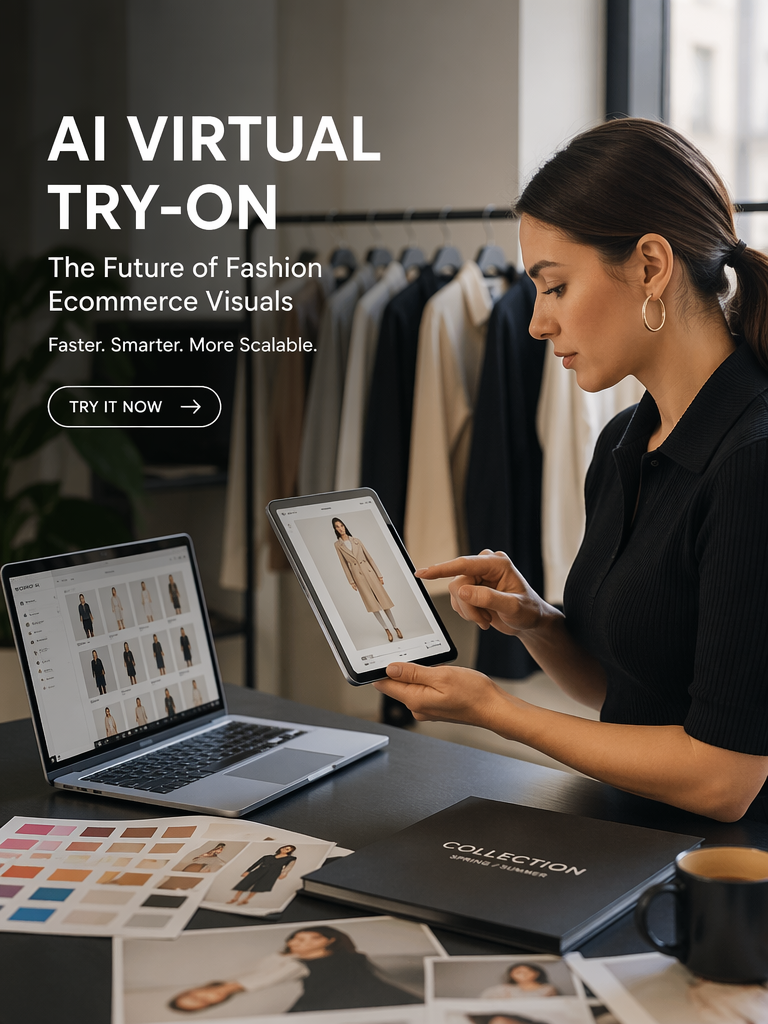

Here is the deep dive into the technology, the unit economics, and the undeniable reality of why AI-powered Virtual Try-On (VTON) is the new industry gold standard—and why the professional photoshoot is officially on life support.

The Technical Leap: From Digital Stickers to Physics Engines

To understand why 2026 is the tipping point, we have to look at the “Uncanny Valley” of fashion AI. For years, Virtual Try-On felt like a cheap digital sticker. Today, it operates like a high-fidelity mirror.

The Evolution of the “Fit”

The industry has moved through three distinct, painful eras of trial and error.

- The Dark Ages (GAN-based): Early Generative Adversarial Networks were essentially digital “warping” tools. They tried to stretch a 2D image of a shirt over a human body. The results were notoriously bad. Hands would disappear, complex patterns like plaid would turn into chaotic zig-zags, and the clothes looked like stiff cardboard.

- The Renaissance (Standard Diffusion): Models like early Stable Diffusion brought beautiful aesthetics, but they lacked “garment fidelity.” The AI would get creative—it would change the style of the buttons, alter the hemline, or completely hallucinate a new fabric texture. It looked great, but it wasn’t your product anymore.

- The 2026 Paradigm (Parallel Processing): We have now entered the era of High-Fidelity Physics Models, led by advanced architectures like nanobanana pro. These systems use a “Dual-UNet” approach, in which two networks run in parallel. One encodes the garment’s fixed details (logos, seams, fabric texture and sheerness), while the other analyzes the wearer’s pose, lighting, and depth. They don’t just “paint” clothes; they simulate how a specific fabric behaves in the real world.

Comparison: The VTON Tech Stack Evolution

| Feature | Old-Gen (GANs) | Standard Diffusion | nanobanana pro (2026 Standard) |
| --- | --- | --- | --- |
| Fabric Physics | Ignored. Flat and rigid. | Aesthetic, but gravity feels “off.” | Real-time drape, weight & flow simulation. |
| Complex Poses | Fails. Limbs often merge with clothes. | Decent, but struggles with occlusion. | Full pose-awareness (e.g., hands in pockets). |
| Pattern Integrity | Stripes and logos distort severely. | Patterns often “morph” into new designs. | Zero-shot identity & logo preservation. |
| Lighting | Flat and artificially bright. | Artistic, but doesn’t match the user. | Global Illumination (Environment matching). |
| User Experience | Required manual 3D mapping by devs. | Required prompt engineering. | Single-button “One-Click” API integration. |

Beyond the Product Page: The UGC & Influencer Revolution

While most people focus on how VTON changes the product page, its biggest hidden superpower in 2026 is how it transforms your marketing funnel—specifically User-Generated Content (UGC) and influencer outreach.

Influencer marketing has long been a logistical nightmare. You have to email 100 micro-influencers, ask for their sizes, ship out 100 physical packages, hope the items fit perfectly, and pray the postal service doesn’t lose them. By the time the content is posted, the trend might be over.

With zero-shot models like nanobanana pro, brands are flipping the script.

You can now ask an influencer to simply submit a great photo of themselves in their favorite location. In seconds, you can generate hyper-realistic, high-resolution images of them wearing your entire new collection.

- Speed to Market: Launch an influencer campaign globally in 24 hours, without shipping a single box.

- Endless Variations: See how your flagship jacket looks on influencers walking the streets of Paris, lounging in Tokyo, or grabbing coffee in New York—all generated from one flat-lay product image.

The Unit Economics: ROI That Studio Shoots Can’t Match

Let’s talk numbers, because that’s what CMOs and brand founders actually care about. The Cost Per Asset (CPA) of traditional photography is no longer justifiable in a fast-fashion, TikTok-driven world.

The True Cost of the “Old Way”

A mid-tier editorial shoot is a financial black hole.

- Studio, Model & Crew: $5,000+ per day.

- Post-Production: $30 – $50 per image.

- Hidden Costs: The cost of shipping samples, the 3-week delay in time-to-market, and the rigid inability to change the photos once the shoot is wrapped.

The Generative Economics

When utilizing a highly optimized VTON infrastructure:

- Cost Per Render: Literally fractions of a cent.

- Post-Production: Instantaneous.

- Time to Market: Real-time.
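
To make the comparison concrete, here is a rough back-of-the-envelope sketch of cost per asset. The day rate and retouching range come from the figures above; the number of usable images per shoot day and the half-cent render price are assumptions picked purely for illustration, so swap in your own numbers.

```python
from dataclasses import dataclass


@dataclass
class StudioShoot:
    day_rate: float            # studio, model and crew, in dollars per day
    retouch_per_image: float   # post-production, in dollars per image
    images_per_day: int        # ASSUMPTION: not stated in the article


def studio_cost_per_asset(shoot: StudioShoot) -> float:
    """Day rate spread across the day's images, plus retouching per image."""
    return shoot.day_rate / shoot.images_per_day + shoot.retouch_per_image


# Article figures: $5,000 per day and $30 to $50 per image for retouching.
# 100 usable images per day is a hypothetical output, not a benchmark.
low = studio_cost_per_asset(StudioShoot(5000.0, 30.0, 100))
high = studio_cost_per_asset(StudioShoot(5000.0, 50.0, 100))

# ASSUMPTION: half a cent per render, standing in for "fractions of a cent".
render_cost = 0.005

print(f"Studio: ${low:.2f} to ${high:.2f} per asset")
print(f"Render: ${render_cost:.3f} per asset")
```

Under these assumptions the studio lands somewhere between $80 and $100 per finished image. The hidden costs listed above (sample shipping, the 3-week delay) are not included, so the real gap is likely wider.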

However, the real ROI isn’t just in saving money on photographers; it’s in saving the sale. By shifting to a generative model, brands are seeing up to a 25% increase in conversion rate (CVR). When a customer sees themselves in the garment, the psychological phenomenon known as the “endowment effect” takes over: they begin to feel ownership of the item before they even reach the checkout page.
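
What does a lift like that mean in dollars? The sketch below runs the arithmetic. It treats the 25% figure as a relative lift on your existing conversion rate, which is an interpretation rather than something stated above, and the traffic, baseline CVR, and average order value are hypothetical placeholders.

```python
def monthly_revenue(sessions: int, cvr: float, avg_order_value: float) -> float:
    """Revenue = sessions x conversion rate x average order value."""
    return sessions * cvr * avg_order_value


# ASSUMPTIONS: all three inputs are hypothetical, chosen for round numbers.
sessions = 100_000        # monthly store visits
baseline_cvr = 0.02       # 2% of visits end in an order
avg_order_value = 80.0    # dollars per order

# The article's "up to 25%" read as a relative lift: 2.0% becomes 2.5%.
relative_lift = 0.25
lifted_cvr = baseline_cvr * (1 + relative_lift)

before = monthly_revenue(sessions, baseline_cvr, avg_order_value)
after = monthly_revenue(sessions, lifted_cvr, avg_order_value)
extra = after - before

print(f"Before: ${before:,.0f}  After: ${after:,.0f}  Extra: ${extra:,.0f}")
```

Because the lift is relative, the extra revenue scales with whatever baseline you start from. The calculation also ignores any change in return rate, which would affect net revenue separately.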

The “One-Click” Philosophy: Why Simplicity is the Ultimate Moat

The biggest mistake early AI developers made was assuming e-commerce merchants wanted to become software engineers. They built incredibly complex dashboards with endless sliders and technical jargon.

In 2026, the winner isn’t the platform with the most complex UI; it’s the platform with the simplest button.

This is why the architecture behind nanobanana pro is becoming the benchmark. It operates as a Black Box of Perfection. It removes the friction from both sides of the transaction:

- For the Merchant: You don’t need a 3D designer. You just upload a standard e-commerce flat-lay photo of your product.

- For the Customer: They click one button on their phone. The AI instantly handles the pose estimation, the fabric warping, the depth mapping, and the shadow matching in the background.
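
Conceptually, that one button hides a fixed sequence of stages behind a single call. The sketch below shows that shape only. The stage names follow the list above, but every class, function, and file name here is hypothetical, and the stage bodies are placeholders that record their own execution rather than real models or any vendor’s actual API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TryOnJob:
    garment_image: str   # path to the merchant's flat-lay photo
    shopper_image: str   # path to the shopper's photo
    completed: List[str] = field(default_factory=list)


Stage = Callable[[TryOnJob], TryOnJob]


def make_stage(name: str) -> Stage:
    """Build a placeholder stage that only records that it ran."""
    def stage(job: TryOnJob) -> TryOnJob:
        job.completed.append(name)
        return job
    return stage


# Stage names follow the article; the bodies are stand-ins, not real models.
PIPELINE: List[Stage] = [
    make_stage("pose_estimation"),
    make_stage("fabric_warping"),
    make_stage("depth_mapping"),
    make_stage("shadow_matching"),
]


def try_it_on(garment_image: str, shopper_image: str) -> TryOnJob:
    """The single entry point a 'Try It On' button would call."""
    job = TryOnJob(garment_image, shopper_image)
    for stage in PIPELINE:
        job = stage(job)
    return job


result = try_it_on("trench_coat_flatlay.png", "shopper_selfie.jpg")
print(result.completed)
```

The point of the design is that neither the merchant nor the shopper ever sees the stage list: both interact with one function that takes two images.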

Friction is the ultimate enemy of conversion. By condensing billions of high-level physics calculations into a simple “Try It On” button, VTON has graduated from a “fun widget” to a core revenue engine.

The Sustainability Angle: The Greenest Shoot is the One You Don’t Take

We cannot ignore the environmental reality of the fashion industry. It is one of the world’s top polluters, and the traditional content creation pipeline contributes heavily to this. Flying production teams across the globe and rushing physical samples via air freight creates a massive carbon footprint.

Furthermore, every returned item requires re-shipping and re-packaging, and it often ends up in a landfill if it cannot be restocked quickly.

Virtual Try-On is a Net-Zero content solution.

- Zero Samples Shipped: You can generate an entire season’s worth of marketing assets before the physical garments have even left the manufacturing floor.

- Slashing the Logistics Loop: By aggressively driving down return rates through accurate, personalized fit previews, models like nanobanana pro directly cut down the carbon emissions associated with reverse logistics.

Conclusion: Adapt or Be Left Behind

The year 2026 marks the definitive end of the “Post-Production” era and the absolute beginning of the “Generative” era.

The professional photoshoot isn’t dying because we hate the art of photography. It’s dying because it simply lacks the scale, speed, and hyper-personalization required to survive in the modern digital economy. In a market where consumer attention spans are measured in milliseconds, the ability to give every single shopper a personalized, photorealistic fitting room experience is the ultimate competitive moat.

Whether you are an independent boutique owner on Shopify or a global fast-fashion enterprise, the message is clear: The camera is no longer the most important tool in fashion. The digital model—and the AI that seamlessly powers it—is.