AI models are no longer competing on a single leaderboard. They are gradually splitting into different kinds of labor.

OpenAI’s ecosystem is increasingly optimized around broad reasoning, multimodal workflows, and general-purpose execution. Anthropic is pushing toward structured writing, long-form thinking, and agentic work. Google is turning Gemini into a “thinking-first” system built for synthesis and long-context analysis. xAI is building around real-time internet behavior and live platform interaction. Meanwhile, Qwen, DeepSeek, and Mistral are becoming increasingly important for multilingual workflows, deployment flexibility, and cost-efficient infrastructure.

That is why choosing an AI model based on benchmarks alone has become less useful than choosing based on workflow compatibility.

The better question is no longer:

Which model is smartest?

It is:

Which model behaves most like the teammate you actually need?

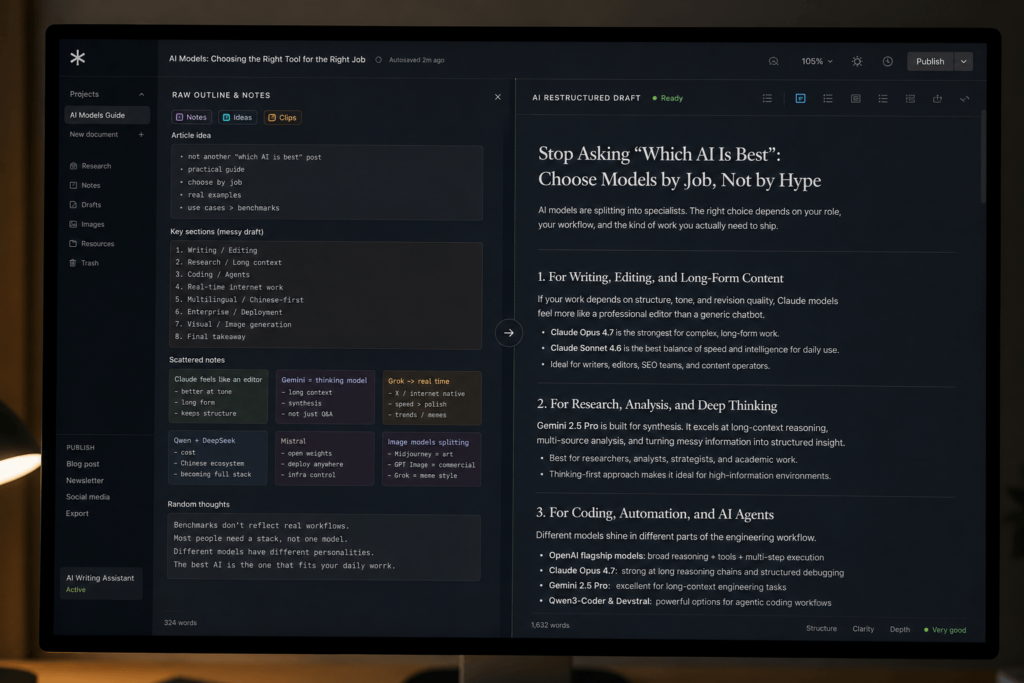

For writing, editing, and long-form content

Claude is becoming the “editorial AI”

Best for: writers, editors, SEO teams, newsletter operators

If your work depends on structure, tone, pacing, and revision quality, Claude currently feels closer to a professional editorial collaborator than most competing models.

Anthropic positions Claude Opus 4.7 as its highest-capability model, while Claude Sonnet 4.6 is designed as the balance point between speed and intelligence. In practice, Sonnet is becoming the everyday writing model for many content teams because it handles rewriting and structural refinement unusually well.

Most AI systems can generate paragraphs.

Far fewer can maintain rhythm across an entire article.

That distinction matters much more in real publishing environments than benchmark scores.

Claude models tend to preserve voice consistency better during iterative editing. They are especially strong when the task is not “generate text,” but:

- reorganize arguments

- tighten logic

- smooth transitions

- preserve tone across revisions

- turn rough drafts into publishable work

This is why many writers increasingly use Claude less like a chatbot and more like an editorial layer sitting between raw ideas and final publication.

OpenAI models are stronger when the workflow becomes broader

Best for: product managers, generalist operators, mixed-media workflows

OpenAI’s flagship models increasingly feel less specialized and more infrastructural.

They are not necessarily the most “literary” models, but they are extremely capable at moving across different kinds of tasks:

- reasoning

- image interpretation

- multimodal workflows

- coding

- tool usage

- structured execution

That flexibility matters for people whose work constantly shifts contexts.

A product manager may move from strategy notes to spreadsheet analysis, then to wireframe discussions, then to writing internal documentation, all within the same session. OpenAI’s ecosystem is particularly strong in those mixed environments because the model stack is designed around general execution rather than a single specialty.

The result is a system that increasingly behaves like a universal operational assistant rather than a pure writing tool.

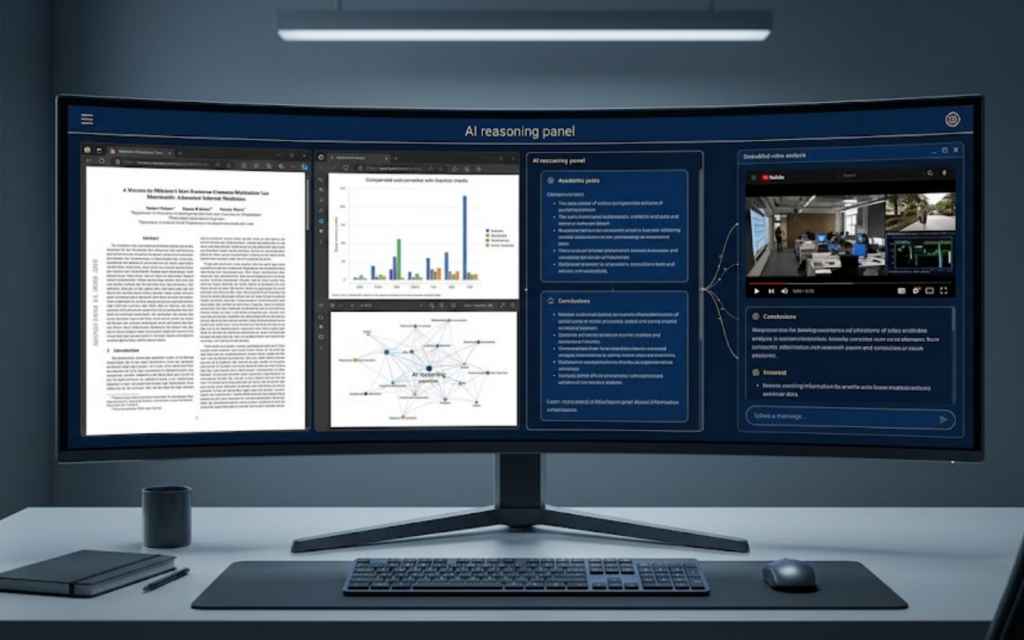

For deep research and long-context reasoning

Gemini is evolving into a synthesis engine

Best for: researchers, analysts, strategists, academic users

Google’s Gemini 2.5 Pro is one of the clearest examples of the industry shifting toward “thinking-first” systems.

Instead of optimizing purely for conversational flow, Gemini increasingly feels optimized for synthesis:

- comparing large information spaces

- reasoning across multiple sources

- preserving context over long sessions

- connecting fragmented information into structured conclusions

Its advantage becomes clearer as the information space expands.

For people working in market research, product strategy, academic synthesis, or other forms of high-density knowledge work, Gemini increasingly feels less like a chatbot and more like a reasoning layer sitting on top of large information systems.

This becomes especially important when the task is not answering a question quickly, but maintaining coherence across dozens of moving parts.

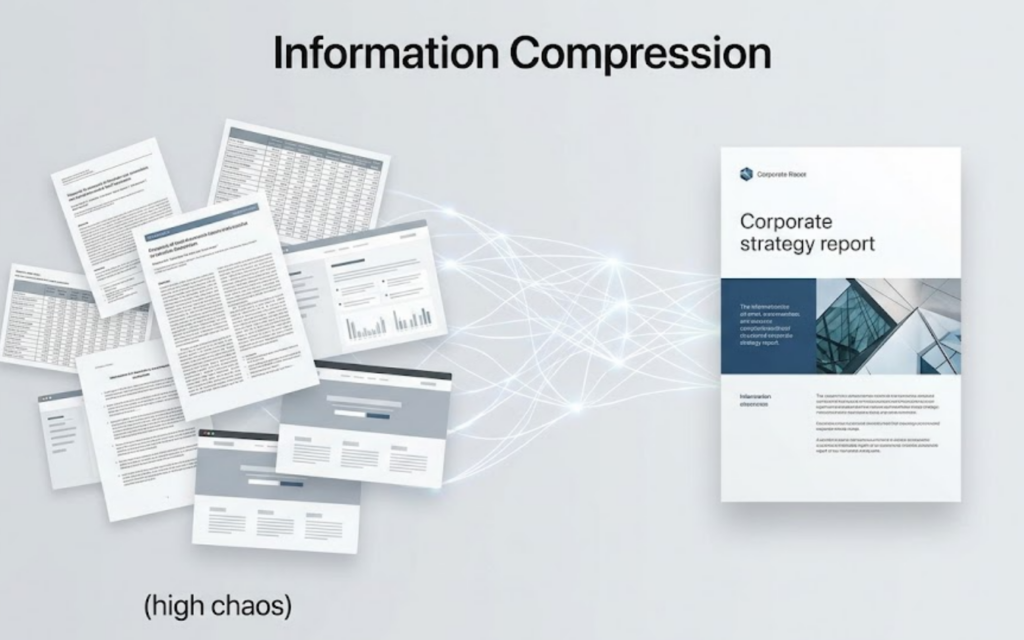

Why “thinking-first” models matter more now

A large part of AI usage is moving away from generation and toward organization.

The bottleneck for many professionals is no longer producing words. It is processing overwhelming amounts of information.

That shift changes what “good AI” means.

The most valuable models are increasingly the ones that can:

- compress complexity

- preserve structure

- identify relationships

- maintain logical continuity across scale

In that environment, reasoning quality becomes more important than stylistic flair.

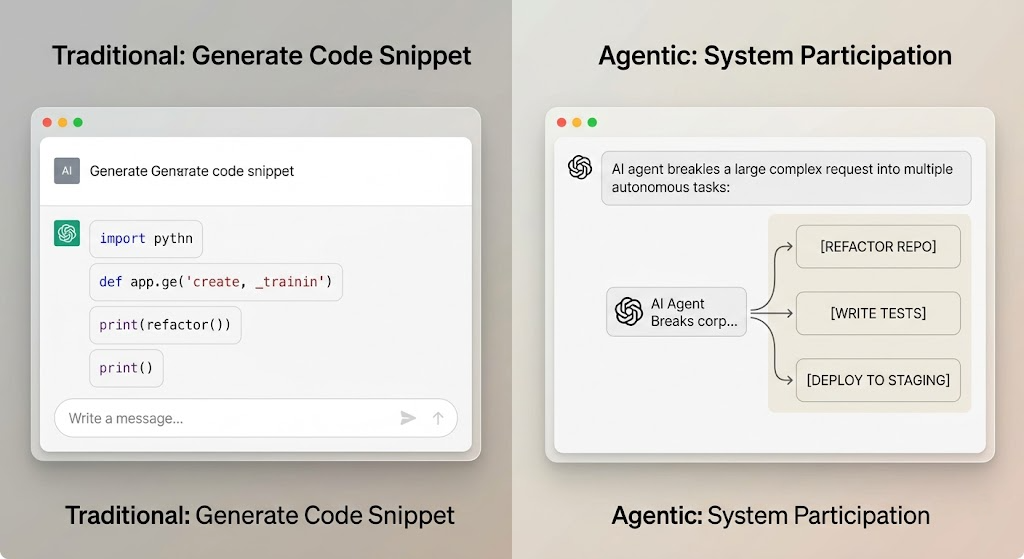

For coding, automation, and AI agents

Coding models are diverging into different specialties

Best for: developers, technical founders, engineering teams

The coding ecosystem is no longer dominated by a single “best programming model.”

Instead, models are separating into different engineering philosophies.

OpenAI’s flagship models emphasize broad reasoning and tool integration. Claude Opus performs especially well in long reasoning chains and structured debugging. Gemini is becoming increasingly strong in large-context engineering workflows. Qwen3-Coder and Devstral are pushing aggressively toward agentic coding and infrastructure-oriented execution.

These systems are no longer competing only on code quality.

They are competing on workflow behavior.

Some are better at:

- repository-level understanding

- autonomous task execution

- debugging continuity

- long-context architecture work

- infrastructure orchestration

The difference matters because modern software work increasingly depends on coordination rather than isolated snippets.

![A professional engineering terminal environment with a dark background. Multiple suspended code windows (editor, terminal, debugger) surround a central, stylized AI agent interface that is actively executing a task chain: [PLAN] > [EXECUTE] > [DEBUG] > [DEPLOY].](https://www.weshop.ai/blog/wp-content/uploads/2026/05/0542f49447e4ec1ba176c03cf2560dc7.png)

Qwen and Devstral are becoming important for operational coding

Many Western discussions still frame open ecosystems mainly around cost.

That framing is becoming outdated.

Models like Qwen3-Coder and Devstral are increasingly interesting because they are designed around operational workflows rather than isolated prompting.

The goal is no longer:

“Generate code.”

It is:

“Participate inside software systems.”

That distinction becomes critical for teams building internal agents, automated pipelines, or AI-assisted engineering infrastructure.

For real-time internet workflows

Grok is optimized for internet velocity, not editorial polish

Best for: social media teams, PR operators, trend-focused brands

Many people misunderstand Grok because they compare it directly against writing-focused models.

That is not really the category xAI appears to be targeting.

Grok’s strongest positioning is speed inside live information ecosystems:

- real-time web interaction

- trend awareness

- social-platform-native behavior

- rapid contextual response

- internet-style content generation

It behaves less like an editorial assistant and more like an AI system trained for internet participation.

That distinction matters for teams operating in environments where timing matters more than polish.

For social-first brands, online communities, meme-driven marketing teams, and fast-response communication environments, Grok’s “internet-native” behavior can actually become more valuable than perfectly refined writing.

Grok may become more important as AI merges with platform culture

Most AI systems still behave like external tools.

Grok increasingly behaves like part of the platform itself.

That creates a fundamentally different trajectory.

As AI becomes more integrated with live ecosystems, internet-native models may become increasingly important for:

- trend amplification

- reactive media production

- meme ecosystems

- real-time brand voice generation

- audience-aware publishing

This is less about “better writing” and more about cultural responsiveness.

For multilingual and Chinese-first workflows

Qwen and DeepSeek are becoming full ecosystems, not alternatives

Best for: bilingual creators, cross-border teams, multilingual operators

Qwen’s ecosystem is increasingly important because it is not just a single chatbot.

It is becoming a vertically integrated AI stack:

- reasoning

- multilingual publishing

- coding

- translation

- agents

- deployment

That makes it especially practical for teams operating across languages and markets.

Meanwhile, DeepSeek’s rapid rise reflects another important shift:

cost-efficient reasoning is becoming strategically competitive.

DeepSeek is no longer interesting merely because it is cheaper.

It is interesting because it increasingly delivers strong reasoning performance while remaining integration-friendly and operationally flexible.

The broader trend is that Chinese AI ecosystems are no longer acting as secondary substitutes to Western systems.

They are becoming parallel infrastructures.

For enterprise deployment and infrastructure control

Mistral is positioning itself as infrastructure, not personality

Best for: enterprise IT, platform teams, deployment-sensitive organizations

Mistral occupies a very different part of the market compared to consumer-facing AI products.

Its positioning is increasingly centered around:

- open-weight deployment

- infrastructure flexibility

- enterprise customization

- local execution

- controllable AI systems

That matters for organizations where deployment structure matters as much as model quality.

For heavily regulated industries, enterprise software environments, or internal AI platforms, the question is often not:

“Which model sounds smartest?”

It is:

“Which model can realistically fit inside our infrastructure?”

That is where Mistral becomes strategically relevant.

For visual work and AI-generated design

Image models are splitting into different creative economies

Best for: designers, brand teams, visual creators

The image generation market is no longer moving toward one universal aesthetic.

It is fragmenting into different visual economies.

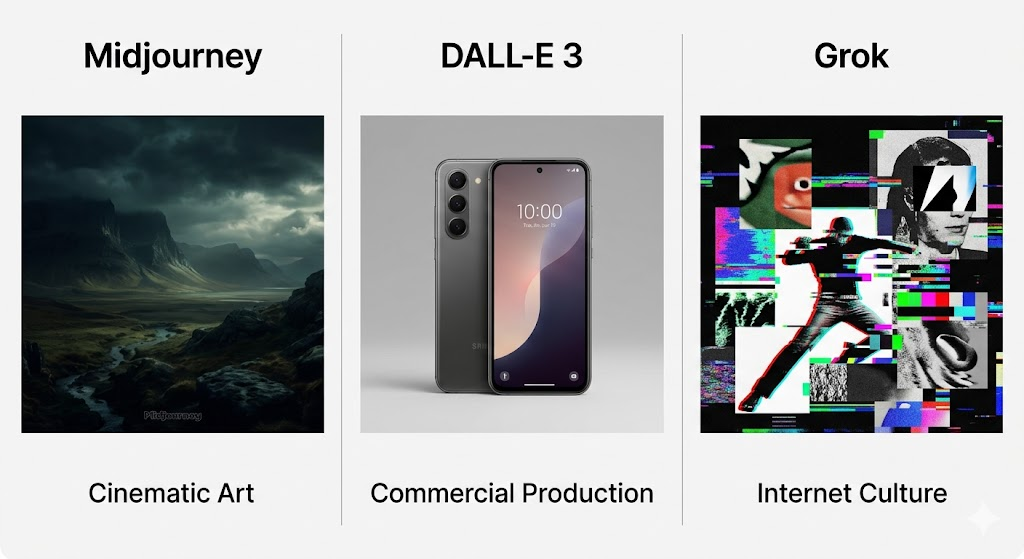

Midjourney increasingly dominates cinematic and atmospheric imagery. GPT Image 2 is becoming more associated with commercial visual production and controllable design workflows. Grok’s visual identity leans toward internet-native aesthetics and meme culture.

These systems are no longer competing for the exact same users.

They are competing for entirely different creative environments.

The most important shift is that image models are increasingly evaluated not by beauty alone, but by usability:

- can they follow layout instructions?

- can they preserve consistency?

- can they produce campaign-ready assets?

- can they integrate into professional workflows?

That is why GPT Image 2 is becoming more important in commercial design contexts.

The future of image AI is becoming workflow-oriented

Earlier image models were optimized around spectacle.

Newer systems are increasingly optimized around production.

That difference changes the entire industry.

The market is moving from:

“Generate beautiful images”

to:

“Generate usable visual infrastructure.”

This shift favors models that understand:

- branding systems

- layout logic

- commercial consistency

- multi-asset campaigns

- iterative editing workflows

The future winners may not be the models that create the most artistic outputs.

They may be the models that integrate most smoothly into real creative pipelines.

Final takeaway

The AI market is no longer organized around a single definition of intelligence.

It is increasingly organized around workflow compatibility.

Claude is becoming the editorial specialist.

Gemini is becoming the synthesis engine.

OpenAI is becoming the general operational layer.

Grok is becoming the internet-native responder.

Qwen and DeepSeek are becoming multilingual production ecosystems.

Mistral is becoming deployment infrastructure.

The most effective AI users are no longer the ones searching for a universal model.

They are the ones building the right stack for the right kind of work.

| Model / family | Main strength | Best for |
|---|---|---|
| Claude Opus / Sonnet | Writing, editing, structured thinking | Writers, editors, content teams |
| Gemini 2.5 Pro | Long-context reasoning, synthesis | Researchers, analysts, strategists |
| OpenAI flagship models | General reasoning + multimodal workflows | Product managers, generalist operators |
| Grok 4.1 Fast | Real-time internet response | Social media, PR, trend teams |
| Qwen ecosystem | Chinese + multilingual workflows | Cross-border teams, bilingual creators |
| DeepSeek V4 | Efficient reasoning + integration flexibility | Startups, technical teams |
| Mistral Large / Devstral | Deployment and infrastructure control | Enterprise, platform teams |
| GPT Image 2 | Commercial visual generation | Designers, brand teams |
