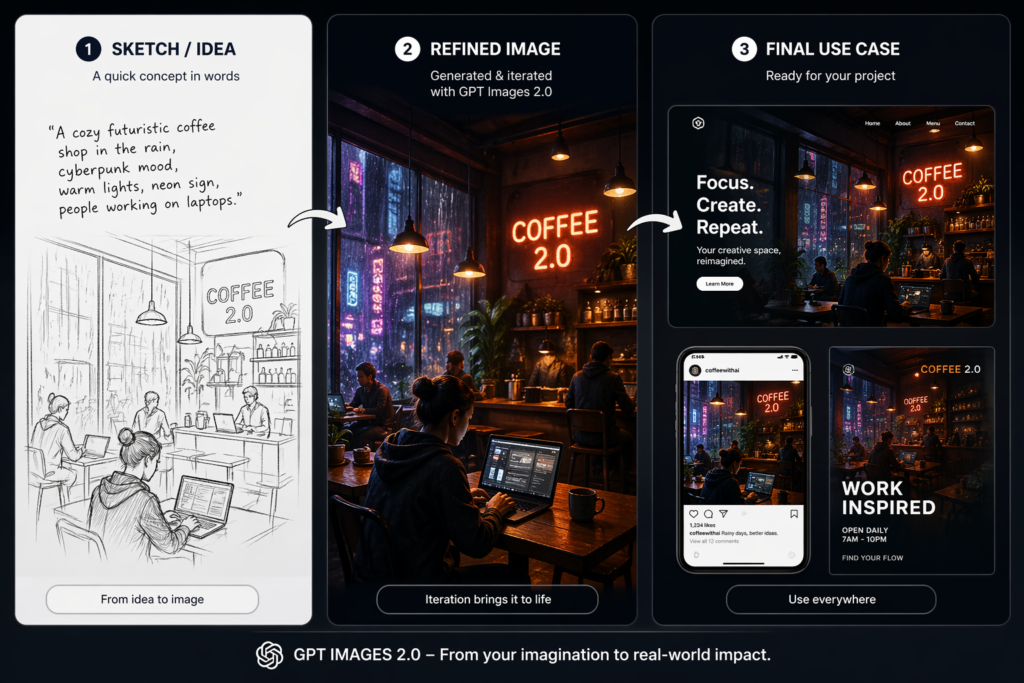

The easiest way to understand gpt-image-2 is to stop asking whether it can make pretty pictures and start asking whether it can survive real work. OpenAI positions the model as a fast, high-quality image generation and editing system with flexible sizes and high-fidelity image inputs, which already tells you that the intended use case is broader than novelty art. It is meant for production-like workflows, not just one-off experiments.

That is why the strongest public reactions are not just “wow” reactions. They are practical ones. People are testing whether the model can handle UI mockups, dense compositions, text-heavy layouts, and repeated refinements without the output degrading. In 2026, that is the real test. A model can be visually strong and still be awkward to live with, and that tension is what makes gpt-image-2 interesting.

What users are actually praising

On r/ChatGPTPro, peakpirate007 wrote that the “character consistency + text rendering upgrades are wild,” and that the model is especially useful once it is built into a tuned workflow rather than used through a generic default interface. The same post describes using the model for product photography, an infographic, an iOS meditation app UI mockup, and a cyberpunk editorial illustration in one session. That is a telling signal: users are not treating the model as a toy; they are testing it as a multi-purpose production layer.

On r/LocalLLM, TroyNoah6677 argued that GPT Image 2 “killed the yellow filter” and made everyday scenes feel usable rather than like sterile AI art. That post is important because it points to a change in the market: not just prettier output, but more believable mundane scenes, which is exactly where a lot of content teams actually live. If an image model can finally make ordinary scenes feel ordinary, that is a meaningful shift.

On X, the official OpenAI Developers account posted that gpt-image-2 is available in the API and Codex and described it as a production-grade workflow model. That aligns neatly with the way users are talking about it in public: not as a curiosity, but as a tool for actual shipping work.

Where the praise stops and the criticism starts

Reddit’s design crowd is far less forgiving

If you want the bluntest criticism, r/graphic_design is the right place to look. In a thread about gpt-image-2 and graphic design, baldierot said language is an imprecise medium for achieving precise results, and added that these generators cannot follow precise technical instructions properly; the comment ends with the judgment that the experience is “terrible for graphic design specifically.” That is a harsh verdict, but it is also useful because it comes from a design-first perspective, not a hype-first one.

That criticism is not about whether the model is good overall. It is about where the model breaks down. Designers need exact spacing, exact hierarchy, and exact control. If the model misreads a technical layout request, that is not a minor inconvenience, because precision is the whole job. So while gpt-image-2 may be excellent for ideation and mixed workflows, it still faces a hard ceiling in precision-heavy design tasks.

The artifact problem is real

On r/ChatGPT, Hyro0o0 said they noticed a distinctive “noise pattern” that shows up after several refinement passes, and GuyNamedWhatever argued that GPT image outputs can get worse in shading and lighting, as if the system is layering effects over an already-complete image. The exact language is informal, but the complaint is clear: repeated passes can introduce a visible signature. That matters because iterative tools are supposed to improve with revision, not leave a detectable scar.

The same thread also contains users saying the image quality improves with simpler prompts, which is a useful clue. It suggests the model may be strongest when its task is constrained and less reliable when the creative demand becomes too layered. That is not rare in image generation, but it does mean reviewers should stop pretending the model is equally good at everything.

gpt-image-2 versus Imagen 4 is not a fair fight in every category

A more useful comparison is not with Midjourney, which often becomes a style-versus-style argument, but with Imagen 4 and Firefly, because those models expose different parts of the problem. Google says Imagen 4 has improved spelling and typography, supports a range of aspect ratios, and reaches up to 2K resolution. That makes it especially relevant for posters, presentations, comics, and other text-heavy assets.

That detail matters because gpt-image-2 is being judged in exactly those use cases now. If a model is asked to do design work, typography is not a side feature. It is part of the core job. So when users talk about gpt-image-2 as if it solved the whole image problem, they are skipping the question of whether it actually solves the exact layout problem they care about. In many cases, Imagen 4 still looks like the more specialized option for legible text and presentation-ready visuals.

Firefly gives teams a different kind of control

Adobe’s 2026 direction makes the comparison even sharper. Adobe’s creative trends report points toward generative AI turning creative sparks into tangible ideas, while the March 2026 Firefly update expands access to custom models trained on your own images. That is not a small product note. It means Adobe is leaning hard into reusable style systems, not just single-image generation.

So when a team asks what to use, the answer depends on the need. If the need is rapid exploration, gpt-image-2 makes sense. If the need is a reusable brand system, Firefly has a stronger story. If the need is highly readable poster or slide work, Imagen 4 has a cleaner edge.

The interesting part is that gpt-image-2 sits in the middle of these roles. That makes it the most flexible generalist, but it also means it is not the single best answer for every lane.

2026 is pushing image models toward usefulness, not spectacle

The broader trend is not just about better-looking images. It is about images that fit workflows. Adobe’s 2026 trend language keeps pointing toward choice, flexibility, and AI as a practical creative layer. The Verge’s coverage of the newer image system also highlights consistency, instruction following, and stronger text handling, which is exactly where the market is heading. The most valuable models are now the ones that make repeated production less painful.

That is why the public comments matter so much. They show the real split: some users are thrilled by consistency and text; others immediately run into artifacts, design limits, and workflow friction. Both reactions are true. And that is the best way to judge gpt-image-2 in 2026. It is useful enough to become part of a real workflow, but imperfect enough that specialists still have good reasons to switch tools.

Final verdict

gpt-image-2 is not the most glamorous image model, but it is one of the most strategically relevant ones right now. The praise is real. The criticism is also real. Users like its consistency, text handling, and production feel. They also report design precision gaps and refinement artifacts. That balance is exactly what makes it worth writing about honestly.
