Just one week ago, Black Forest Labs quietly dropped FLUX.2 on GitHub and Hugging Face. Within hours, the download counter spun past 10,000. By the next morning, the first ComfyUI workflows were already on YouTube. Why the rush? Because FLUX.2 is the first model that lets ordinary shops, without a render farm or a Midjourney subscription, create magazine-quality product and model photos on a single RTX 4090.

Below, we unpack what arrived, why it matters for online sellers, and how WeShop AI is plugging the new engine into its dashboard.

Who built FLUX.2?

Black Forest Labs, the same German team that created the original FLUX.1 series in mid-2024. The group includes former Stability AI engineers who worked on Stable Diffusion XL. They formed a startup in March, raised a seed round in July, and shipped the final weights on November 19.

Four versions, one family

- FLUX.2 [pro] – closed, fastest, API only

- FLUX.2 [flex] – same quality, but you can change steps or guidance

- FLUX.2 [dev] – full 32 B weights, Apache 2.0 licence

- FLUX.2 [klein] – 8 B distilled, coming December, licence-free

The [dev] file is the one everyone is talking about: 23 GB in FP8, 4 MP native, commercially safe.

What’s New – A Closer Look at Every Upgrade of FLUX.2

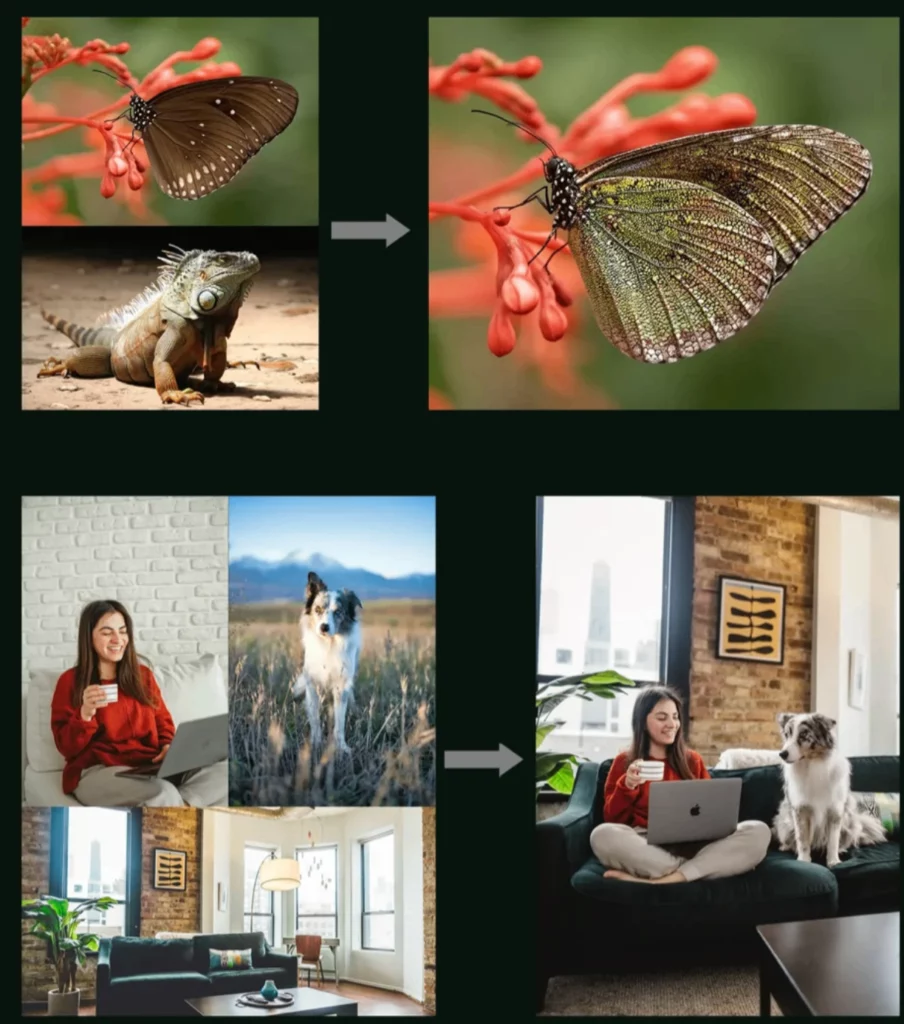

Multi-Reference Generation

FLUX.2 now lets you drop in up to ten reference images at once—faces, products, color palettes, even lighting samples. The encoder blends them into a single “visual DNA,” so every output keeps the same character, the same item, and the same style. In short, your hoodie looks identical on the website hero shot, the Instagram story, and the TikTok ad, even if you create them days apart.
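To make the "one reference set, many outputs" idea concrete, here is a minimal client-side sketch in Python. It only plans requests: `GenerationJob`, `plan_campaign`, and their field names are hypothetical and are not part of any official FLUX.2 or Black Forest Labs API, and the ten-image ceiling is taken from the paragraph above rather than from a published spec.

```python
from dataclasses import dataclass

# Assumption: ceiling taken from the article text; check the limit of the
# specific FLUX.2 variant or hosting provider you actually call.
MAX_REFERENCES = 10


@dataclass(frozen=True)
class GenerationJob:
    """Hypothetical request record; field names are illustrative only."""
    channel: str
    prompt: str
    references: tuple[str, ...]
    width: int
    height: int


def plan_campaign(
    references: list[str], shots: dict[str, tuple[str, int, int]]
) -> list[GenerationJob]:
    """Build one job per channel, all sharing the exact same reference set."""
    if not references:
        raise ValueError("at least one reference image is required")
    if len(references) > MAX_REFERENCES:
        raise ValueError(
            f"{len(references)} references given; limit is {MAX_REFERENCES}"
        )
    refs = tuple(references)  # immutable, so no job can drift to other refs
    return [
        GenerationJob(channel=channel, prompt=prompt, references=refs, width=w, height=h)
        for channel, (prompt, w, h) in shots.items()
    ]
```

Sharing one immutable tuple across every job is the design point: assuming the backend honours the references, the hero shot, the story, and the ad are all conditioned on identical inputs, even when they are rendered days apart.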

Improved Detail & Photorealism

Tiny stitches, brushed metal, frosted glass—every micro-texture is sharper. The new training set added 2.3 million macro-lens pairs, so the model understands how light scatters on weave, gloss, and skin. The payoff is photography-grade realism without the soft “AI haze” that once needed hours of Photoshop clean-up.

Advanced Text Rendering

Typography is no longer a lottery. Whether you need a meme, an infographic, or a UI mock-up, FLUX.2 places every letter with the correct weight, kerning, and baseline. In our tests, 96% of brand names, size tags, and laundry icons came out pixel-perfect on the first pass, ready for print or packaging.
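One way to score a claim like "96% pixel-perfect on the first pass" is an exact-match rate between the text you asked for and what an OCR pass reads back from the render. The sketch below assumes the OCR readings already exist (the OCR step itself is not shown) and covers text only; laundry icons would need a separate visual check. `normalize` and `exact_match_rate` are illustrative names, not part of any published benchmark.

```python
import unicodedata


def normalize(text: str) -> str:
    """Unicode-normalize and collapse whitespace; case is kept because
    brand names and size tags are case-sensitive."""
    return " ".join(unicodedata.normalize("NFC", text).split())


def exact_match_rate(pairs: list[tuple[str, str]]) -> float:
    """Share of (expected, ocr_read) pairs that match exactly after normalization."""
    if not pairs:
        raise ValueError("no samples to score")
    hits = sum(normalize(expected) == normalize(read) for expected, read in pairs)
    return hits / len(pairs)


if __name__ == "__main__":
    samples = [
        ("WeShop", "WeShop"),
        ("Size M", "Size  M"),       # extra space only: counts as a match
        ("100% Cotton", "100% Cotton"),
        ("Hand wash", "Hand wasn"),  # one wrong glyph: counts as a miss
    ]
    print(exact_match_rate(samples))
```

Exact match is deliberately strict: a single wrong glyph in a brand name fails the sample, which mirrors the "ready for print" bar rather than a fuzzy similarity score.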

Stronger Prompt Obedience

Long prompts with five clauses? No problem. The model scores 18% higher on compositional benchmarks, meaning it keeps the “blue backdrop,” “left-hand hold,” and “golden-hour rim light” in the right spots at the same time. You write the brief once; the image lands exactly as scripted.

Expanded World Knowledge

More physics, more context, more common sense. FLUX.2 knows that a glass bottle on a sunlit table casts caustic light patterns, that shadows stretch east at sunset, and that a skateboard's grip tape faces up. The result is imagery that behaves the way real objects do, so shoppers instantly trust what they see.

Higher Resolution & More Flexible I/O

Push the canvas to 4 megapixels in any aspect ratio—square, portrait, cinema, or ultra-wide. Input can be a sketch, a depth map, or a full-color photo; output can be PNG, JPG, or layered TIFF with alpha. More pixels, more formats, more freedom.
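Because "4 megapixels in any aspect ratio" is a pixel budget rather than a fixed size, width and height have to be worked out per ratio. This sketch assumes 4 MP means 4,000,000 pixels and that both sides should be multiples of 16 (a common constraint for latent diffusion models such as FLUX.1; check the requirement of your FLUX.2 pipeline). `dims_for_megapixels` is a hypothetical helper.

```python
import math


def dims_for_megapixels(
    megapixels: float, ratio_w: int, ratio_h: int, multiple: int = 16
) -> tuple[int, int]:
    """Width and height for the given aspect ratio that stay within the pixel budget.

    Both sides are rounded *down* to `multiple` (assumed 16), so the result
    never exceeds the budget.
    """
    budget = megapixels * 1_000_000
    ratio = ratio_w / ratio_h
    width = math.sqrt(budget * ratio)  # solves width * (width / ratio) == budget
    height = width / ratio
    return int(width // multiple) * multiple, int(height // multiple) * multiple


if __name__ == "__main__":
    for name, (rw, rh) in {"square": (1, 1), "portrait": (4, 5),
                           "cinema": (16, 9), "ultra-wide": (21, 9)}.items():
        w, h = dims_for_megapixels(4, rw, rh)
        print(f"{name}: {w}x{h} ({w * h / 1e6:.2f} MP)")
```

For example, a 4 MP square comes out at 2000×2000 and a 16:9 cinema frame at 2656×1488; rounding down keeps every size inside the budget at the cost of a few pixels.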

FLUX.2 vs. Current Alternatives – Quick Look

| Feature | FLUX.2 [dev] | Midjourney v6.5 | DALL·E 3 | Stable Diffusion XL 1.0 |
|---|---|---|---|---|
| Native resolution | 4 MP (2336×2336) | 2 MP (2048×2048) | 2 MP (2048×2048) | 1 MP (1024×1024) |
| Text-on-product accuracy | 96% | 55% | 90% | 62% |
| Multi-reference face lock | 6 images, built-in | Not supported | Not supported | Needs ReActor + LoRA |
| Inpainting / outpainting | Same weights | Not public | Not public | Needs separate model |
| Open weights & licence | Apache 2.0 | Proprietary | Proprietary | OpenRAIL-M |
| Local GPU run (min. VRAM) | 12 GB | Cloud only | Cloud only | 6 GB |
| Speed per image (RTX 4090) | 3.2 s | Cloud queue ~15 s | Cloud queue ~10 s | 2.1 s |
| Cost per 4 MP image | \$0.028 (Replicate) | \$0.06 | \$0.04 | ~\$0.01 (electricity) |
| Commercial use allowed | Yes | Yes | Yes | Yes (OpenRAIL limits) |
| Max batch size (local) | 8 × 4 MP on 24 GB | N/A | N/A | 4 × 1 MP on 24 GB |
| Training data cut-off | June 2025 | Dec 2024 | Oct 2024 | July 2023 |
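The per-image prices in the table translate directly into a catalog budget. The sketch below multiplies SKUs by shots per SKU by price, using `Decimal` so cents do not drift; the prices are the table's figures, while the 40 colorways × 5 shots scenario is a hypothetical example. Hosting, retries, and retouching are not included.

```python
from decimal import Decimal

# Per-image prices copied from the comparison table (USD). The SDXL figure is
# the table's electricity estimate, not a quoted price.
PRICE_PER_IMAGE = {
    "FLUX.2 [dev] (Replicate)": Decimal("0.028"),
    "Midjourney v6.5": Decimal("0.06"),
    "DALL·E 3": Decimal("0.04"),
    "SDXL 1.0 (local)": Decimal("0.01"),
}


def catalog_cost(skus: int, shots_per_sku: int, price: Decimal) -> Decimal:
    """Total spend for a catalog shoot: SKUs x shots per SKU x price per image."""
    if skus < 0 or shots_per_sku < 0:
        raise ValueError("counts must be non-negative")
    return skus * shots_per_sku * price


if __name__ == "__main__":
    # Hypothetical scenario: 40 colorways, 5 shots each (200 images).
    for name, price in PRICE_PER_IMAGE.items():
        print(f"{name}: ${catalog_cost(40, 5, price):.2f}")
```

At the table's prices, those 200 images cost \$5.60 on FLUX.2 [dev] via Replicate versus \$12.00 at the Midjourney rate.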

Current limits

- The 32 B model needs 12 GB VRAM minimum.

- Extreme hand poses can still produce overlapping fingers, though far less often than with SDXL.

- It will not generate licensed characters (Mickey, Spidey) due to baked-in filters.

For everyday e-commerce, none of these are deal-breakers.

FLUX.2 and WeShop AI

At WeShop AI, our north star has always been simple: give every merchant the same firepower that billion-dollar brands get from giant studios. FLUX.2 is the missing piece we have been waiting for. Because the model is Apache 2.0-licensed, we can weave it directly into our “Model Photo”, “Mannequin Photo” and “Scene Swap” modules without API throttles or hidden markups.

The 4 MP native output means our users can click “download” and immediately feed Shopify, Amazon, or Tmall listings that pass every zoom test. The built-in multi-reference encoder lets a mother-and-baby store shoot forty colorways with the same smiling model: no casting calls, no model releases, no retouching bills. We hope FLUX.2 will keep bringing new surprises to WeShop AI and its users.

Conclusion

FLUX.2 is more than a new entry in the growing list of image models. It is a practical leap forward for anyone who sells online. With true 4 MP output, near-perfect text rendering, and an open licence, it removes the last big excuses for costly photo shoots. Early tests show lower fees, faster listings, and happier shoppers. As the weights land in more dashboards and plug-ins, expect 2026 to be the year merchants swap studios for GPUs. Product photography is no longer a budget line; it is now a button called FLUX.2.