There is a strange truth about video: most of it is never watched all the way through.

A long webinar gets recorded and then buried in a shared drive. A customer interview is trimmed down to a few quotes. A product demo is searched for one promising moment, then scrubbed frame by frame until someone finally finds it. The value is in the clip, not the full recording. Yet the time cost is usually paid upfront, by a human, one drag on the timeline at a time.

That is the real opening for an AI video analyzer. Not to “understand video” in some abstract, futuristic sense, but to help people get to the right moment faster.

That small shift changes everything.

The problem is not too much video. It is too much searching.

People do not usually ask for an analyzer

People do not usually sit down and think, “I need an AI video analyzer.”

They think, “I know the answer is in here somewhere.”

The real pain is finding one second

The answer might be inside a 90-minute interview, a batch of training recordings, a sports highlight reel, a security archive, a classroom lecture, or a pile of user-generated clips. The challenge is rarely the one video platforms advertise. It is not storing video. It is finding the exact second that matters.

Video makes searching awkward

Text can be skimmed. Images can be scanned. Video demands time. Even with playback speed cranked up, the process still feels like rummaging through a drawer with one hand tied behind your back.

This is where modern AI tools have started to change the game. They do not just label a file. They turn moving images, sound, text on screen, and scene changes into something searchable. In practical terms, that means you can ask a question, spot a pattern, or jump straight to a relevant moment instead of scrubbing blindly.

What makes this useful is not magic. It is compression.

The best tools reduce the hunt

The most helpful AI systems do not try to replace human judgment. They compress the hunt.

A practical example from marketing

Think about a marketing team reviewing hours of customer testimonial footage. Nobody wants a tool that merely says, “This is a video of a person talking.” That is technically true and practically useless. What they want is something closer to this: the part where the customer mentions pricing, the moment where they describe a pain point, the sentence that sounds most quotable, the scene where the product finally makes sense.

Metadata is not the same as meaning

That is the difference between metadata and meaning.

A good analyzer helps with both. It can transcribe speech, detect objects, identify scenes, read on-screen text, and generate summaries. But the real value shows up when those pieces come together and answer a human question: where is the good stuff?
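To make "those pieces come together" concrete, here is a minimal sketch of the idea, not any real product's API. It assumes an analyzer has already produced timestamped annotations from speech, on-screen text, and object detection. The `Annotation` type, the source labels, the sample data, and the five-second merge gap are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    """One timestamped observation from a single signal (hypothetical shape)."""
    start: float  # seconds from the start of the video
    end: float
    source: str   # assumed labels: "speech", "ocr", "object"
    text: str


def find_moments(annotations, term, gap=5.0):
    """Return (start, end) windows where `term` appears in any signal.

    Hits that begin within `gap` seconds of the previous window's end
    are merged, so speech and on-screen text about the same topic
    collapse into a single moment.
    """
    term = term.lower()
    hits = sorted(
        (a for a in annotations if term in a.text.lower()),
        key=lambda a: a.start,
    )
    moments = []
    for a in hits:
        if moments and a.start - moments[-1][1] <= gap:
            # Extend the previous window instead of opening a new one.
            moments[-1] = (moments[-1][0], max(moments[-1][1], a.end))
        else:
            moments.append((a.start, a.end))
    return moments


# Made-up annotations for a customer testimonial.
annotations = [
    Annotation(12.0, 18.5, "speech", "We were worried about pricing at first"),
    Annotation(20.0, 24.0, "ocr", "Pricing: $29 per month"),
    Annotation(95.0, 101.0, "speech", "The onboarding was the painful part"),
    Annotation(300.0, 306.0, "speech", "Honestly the pricing is fair"),
]

moments = find_moments(annotations, "pricing")
print(moments)  # [(12.0, 24.0), (300.0, 306.0)]
```

The spoken mention and the on-screen price card land in one window because they sit close together on the timeline. That merge is the difference between a list of raw labels and a moment someone can jump to.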

That is why the best products in this space increasingly lean toward search, summarization, and moment retrieval rather than flashy “AI can watch anything” claims. In practice, users care less about the spectacle and more about speed.

The best use cases are deeply ordinary

Content teams want clips, not archives

A content team wants to repurpose one recording into five clips. A journalist needs the strongest quote from an hour-long interview. A teacher wants to find the exact segment where a concept is explained clearly.

Operations teams want answers, not playback

A support team wants to review a customer call without replaying the entire thing. A legal or compliance team wants to locate a specific visual event quickly. A creator wants to pull the most shareable minute from a long livestream.

None of that sounds futuristic. All of it is painfully real.

The bar is higher than it looks

And because the problems are so ordinary, the bar is high. The tool has to fit into a messy workflow, not a polished demo. It has to be fast enough to save time, accurate enough to trust, and simple enough that a non-technical person can use it without a training session.

That is a big reason why this topic is ripe for a stronger editorial angle. The most compelling story is not that AI video analysis exists. It is that it quietly removes one of the most annoying parts of modern work: searching through recorded time.

A better way to explain the category

Start with friction, not definition

If you are writing about this topic, avoid starting with a dictionary-style definition. That makes the article feel flat before it has even begun.

Instead, start with the friction.

Open with the moment someone gets stuck

Open with the moment someone is stuck inside a video, trying to find one detail. Describe the wasted minutes, the repeated scrubbing, the growing frustration. Then introduce the AI layer as a way to shorten that search.

Why this works better

That approach does two things at once. First, it makes the topic instantly understandable for readers who are not technical. Second, it gives experienced readers a concrete reason to keep reading, because they already feel the pain.

In other words, do not lead with “what it is.” Lead with “why it matters.”

What readers actually want to know

Questions that build trust

Once the hook is in place, the rest of the article should answer the questions people really have.

Can it find the right moment reliably? Can it handle noisy audio, accents, multiple speakers, or fast cuts? Does it work better on meetings, social clips, archived footage, or live recordings? Does it help users search by words, visuals, or both? Is it fast enough to be useful in a real workflow? Does it save time, or does it just move the work somewhere else?

Be honest about limits

A strong article should also be honest about limits. AI video analysis is impressive, but it is not a mind reader. It can miss context. It can misread scenes. It can struggle when audio is poor or the visual content is ambiguous. That does not make the category weak. It makes the article believable.

Readers trust you more when you admit that the tool is powerful but not perfect.

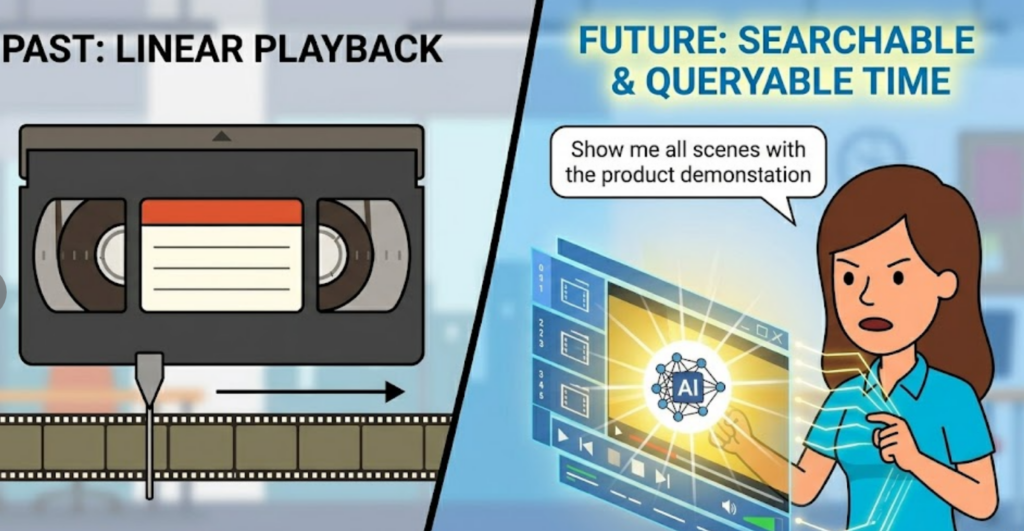

The most interesting shift is from playback to query

The old model was linear

For years, video software revolved around playback. You opened a file, pressed play, and moved through it manually.

The new model is searchable

AI changes the posture of the user.

Instead of asking, “How do I watch this faster?” people can ask, “How do I ask the video a question?” That is a more interesting product promise, and it opens up a much better article angle too.

You can search by topic. You can search by speaker. You can search by scene. You can search by keyword in the transcript or in text shown on screen. You can use the system to surface moments that would otherwise stay hidden.
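One way to picture a queryable timeline is as a filter over timestamped segments. The sketch below is an assumption-laden illustration, not how any particular tool works: the `Segment` fields, the `search` function, the `timestamp` helper, and the sample recording are all invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Segment:
    """A hypothetical searchable slice of a recording."""
    start: float            # seconds from the start of the video
    speaker: Optional[str]  # None when the text came from the screen
    source: str             # assumed labels: "transcript" or "on_screen"
    scene: str
    text: str


def search(segments, keyword=None, speaker=None, scene=None, source=None):
    """Return segments matching every filter that was supplied."""
    results = []
    for s in segments:
        if keyword is not None and keyword.lower() not in s.text.lower():
            continue
        if speaker is not None and s.speaker != speaker:
            continue
        if scene is not None and s.scene != scene:
            continue
        if source is not None and s.source != source:
            continue
        results.append(s)
    return results


def timestamp(seconds):
    """Format seconds as MM:SS for a jump-to link."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


# Made-up segments from a product webinar.
segments = [
    Segment(45.0, "Host", "transcript", "intro", "Welcome to the quarterly roadmap review"),
    Segment(312.0, "Dana", "transcript", "demo", "Here is the new export workflow"),
    Segment(318.0, None, "on_screen", "demo", "Export to CSV"),
    Segment(1504.0, "Dana", "transcript", "q_and_a", "Export limits depend on your plan"),
]

hits = search(segments, keyword="export", speaker="Dana")
print([timestamp(s.start) for s in hits])  # ['05:12', '25:04']
```

Each filter is optional, so the same call covers "everything Dana said about export" and "every time the word export appeared on screen." A real system would likely add ranking or semantic matching on top, but the posture is the same: ask first, then jump.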

Why this feels bigger than a tool

That is why the category feels bigger than a simple analyzer. It is edging toward a new kind of interface for recorded media: one where time becomes queryable.

Why this angle works for both insiders and outsiders

Experienced readers want nuance

If your readers already know the space, they will appreciate the nuance. They know the difference between basic transcription and real video understanding. They know that “AI” is not the product; the workflow is the product.

New readers want clarity

If your readers are newcomers, they will appreciate the clarity. They do not need a technical deep dive to understand why this matters. They just need to see the pain, the shortcut, and the payoff.

That is the sweet spot.

A good article in this category should feel like a smart person explaining something useful over coffee, not a product page trying too hard. It should move smoothly. It should sound human. It should make the reader think, “Yes, that is exactly the problem.”

Conclusion

The real value is speed

The most compelling story about an AI video analyzer is not that it “analyzes video.” That sounds broad, vague, and a little cold.

The real story is narrower and far more useful: it helps people find the right moment without wasting the whole afternoon.

A better promise for the category

That is a much better hook for a blog post, a much better promise for a product, and a much better way to explain why this category matters now.

Because in the end, video is not just something we watch. It is something we search.

And the tool that wins is the one that makes the search feel effortless.
