In most AI video launches, the pattern is already familiar: a teaser clip, a glossy landing page, a few polished demos, and then another tool disappears into the background. HappyHorse AI spread differently. It entered the conversation through benchmark chatter, creator comparisons, and a surprising amount of confusion about what was real, what was hype, and where people could actually try it. Alibaba’s public rollout says HappyHorse 1.0 is a 15B-parameter model built by the Future Life Lab inside Alibaba’s Taotian Group, with text, video, and audio processed in a single sequence; Alibaba Cloud also says it entered limited beta through the official website, Alibaba Cloud Model Studio, and Qwen App.

What Is HappyHorse AI, Really?

HappyHorse AI is best understood as a cinematic AI video model rather than a simple text-to-video toy. The official and partner descriptions emphasize multi-shot sequencing, audio-visual synchronization, instruction-following, and support for text-to-video, image-to-video, and subject-to-video workflows. In other words, the pitch is not just “it makes video,” but “it keeps the scene coherent while also generating sound in the same pass.” That is the feature set that made it stand out in 2026.

What makes that interesting is not the spec sheet alone, but the way the model has been framed in the market. Artificial Analysis currently lists HappyHorse-1.0 at the top of the text-to-video arena without audio and at the top of the image-to-video arena without audio, while it sits just behind Seedance 2.0 in the audio-enabled text-to-video and image-to-video rankings. That creates a useful nuance: HappyHorse is not just “good,” it is unusually visible across multiple benchmark modes, which is why people keep comparing it with Seedance, Kling, and Veo.

Why It Became a Conversation Instead of Just Another Tool

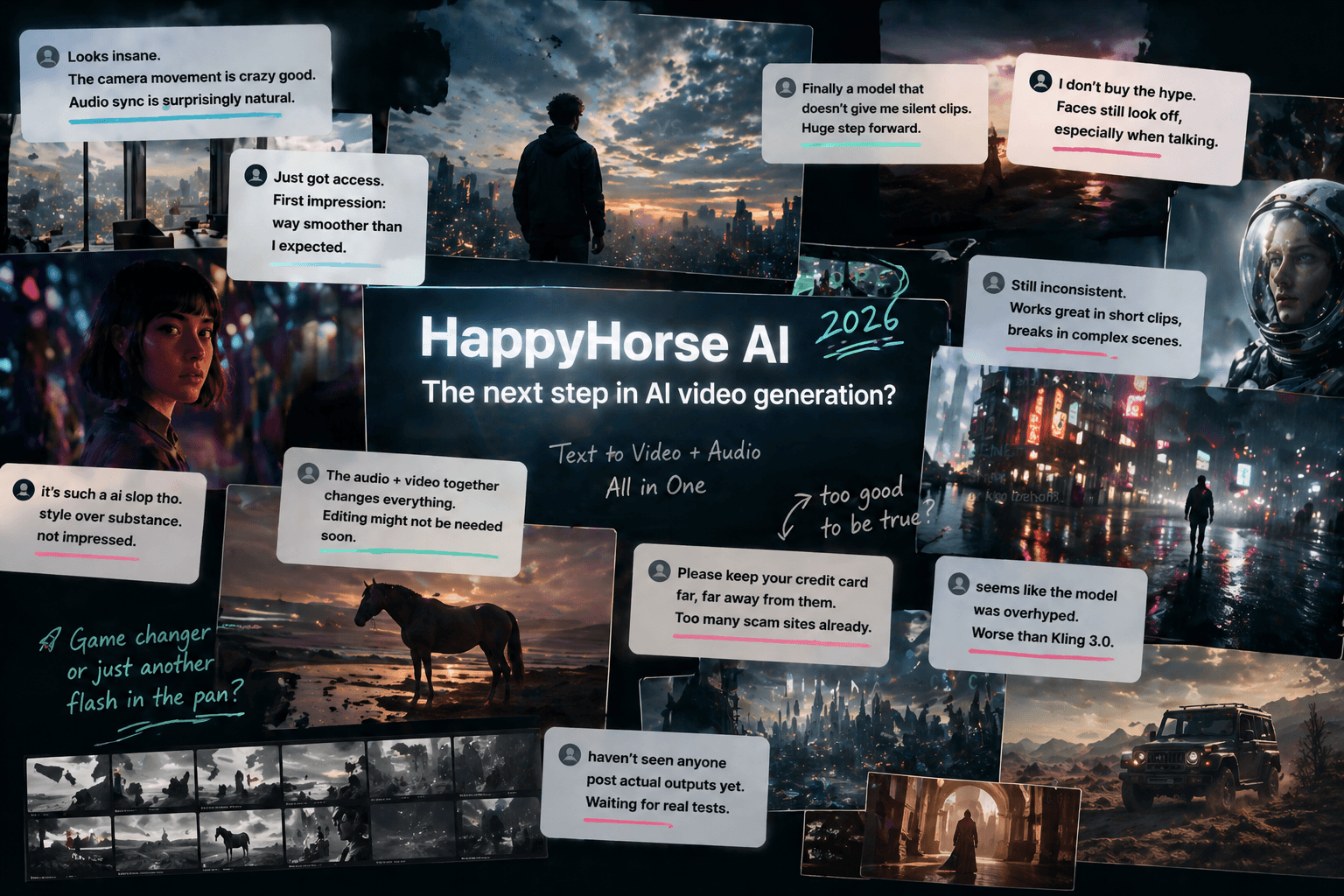

The real reason HappyHorse spread is that it did not behave like a normal launch. Before many people had hands-on access, it was already being discussed as a benchmark surprise, a stealth release, and a model with unclear access paths. One independent review noted that the only place they could test it was an unaffiliated third-party HappyHorse App and that they could not independently confirm the generations came from the underlying model. That ambiguity, fairly or not, became part of the story.

The second reason is social proof, but in a messy, internet-native way. Instead of neat testimonials, the conversation has been full of skepticism, hype, and rumor control. One Reddit user wrote, “there’s a lot of noise floating around,” which is a surprisingly accurate summary of the current information climate around the model.

Another commenter put the access confusion even more bluntly: “if you see a repo claiming to let you download the weights, run away.” That line matters because it shows the audience is not only excited; it is also actively trying to separate real access from opportunistic noise.

A third reaction captures the aesthetic side of the debate: “The horse’s hair and the lighting made my internal Ai detector go off. At the same time tho, the video itself looks realistic, everything seems consistent.” That is probably the most useful one-sentence summary of the model’s appeal: people are noticing both the polish and the artificiality at the same time.

What HappyHorse AI Actually Feels Like Compared with Other Models

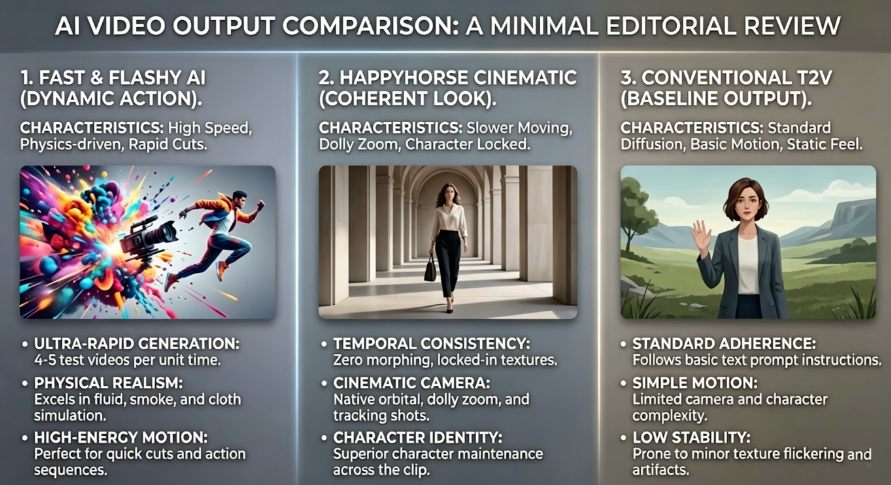

HappyHorse’s strongest advantage is not simply that it can generate video. Plenty of tools can do that. Its advantage is that the outputs appear to be organized around continuity: motion, camera movement, scene rhythm, and audio synchronization are all part of the same promise. The Alibaba Cloud post describes “audio-visual synchronization and multi-shot sequencing,” while fal’s model page describes native audio, prompt-based camera control, and 1080p generation from text or images. That combination makes HappyHorse feel less like a clip generator and more like a compact scene generator.

Still, the model is not magic, and a fair review should say so plainly. The more interesting angle is that HappyHorse can look almost too clean. Some of the strongest AI video systems now fail in a new way: they are technically impressive but emotionally flat. The sharpest criticism is not that the model is weak, but that its cinematic precision can sometimes feel more controlled than alive. That is an inference from the benchmark and access discussions rather than a hard technical claim, but it is the kind of judgment readers come to a review for.

A Simple Way to Frame the Comparison

The useful way to compare these models is not by dumping features but by creative intent.

| What the creator cares about most | HappyHorse | Seedance 2.0 | Kling 3.0 |
|---|---|---|---|
| Cinematic scene feel | Strong | Strong | Strong |
| Ranking momentum | Very strong | Very strong | Strong |
| Audio/video integration | Strong emphasis | Strong | Strong |
| Access clarity | Still a little messy | More familiar | More familiar |
| Best use case | Short, polished, story-like scenes | Broad creative testing | Flexible general video generation |

The point of the table is to help answer a practical question: which model feels closest to the job you are trying to do?

Access, Pricing, and the Practical Side

This part matters because hype means nothing if people cannot actually try the model. Alibaba Cloud says HappyHorse 1.0 is available through the official website, Alibaba Cloud Model Studio, and Qwen App. fal’s API pages also show a more developer-oriented route, with 1080p output, native audio, and a pricing example of $0.28 per second at 1080p, which works out to $2.80 for a 10-second clip on that listing. An independent review of the web app reported a much more expensive third-party experience, roughly $4 in credits for a 5-second Pro generation with audio, so access route clearly matters.
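To make the gap between access routes concrete, the short sketch below normalizes the two quoted prices to a per-second rate. It uses only the figures cited above; the function and variable names are illustrative, the third-party figure is approximate ("roughly $4"), and the two listings may not be like-for-like in quality tier, so treat the ratio as a rough indication rather than an exact comparison.

```python
def clip_cost(rate_usd_per_second: float, seconds: float) -> float:
    """Total cost of a clip billed at a flat per-second rate."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return rate_usd_per_second * seconds


def cost_per_second(total_usd: float, seconds: float) -> float:
    """Effective per-second price implied by a total charge."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return total_usd / seconds


# Figures quoted in the article (the third-party number is approximate).
FAL_RATE_1080P = 0.28        # USD per second, fal listing at 1080p
THIRD_PARTY_TOTAL = 4.00     # USD in credits, 5-second Pro generation with audio
THIRD_PARTY_SECONDS = 5

fal_10s = round(clip_cost(FAL_RATE_1080P, 10), 2)
third_party_rate = round(cost_per_second(THIRD_PARTY_TOTAL, THIRD_PARTY_SECONDS), 2)
ratio = round(third_party_rate / FAL_RATE_1080P, 1)

print(f"fal, 10-second clip: ${fal_10s:.2f}")
print(f"third-party effective rate: ${third_party_rate:.2f}/s")
print(f"third-party vs fal per-second ratio: about {ratio}x")
```

On these numbers the third-party route works out to about $0.80 per second, a little under three times the fal listing.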

That access detail is worth spelling out. Many AI readers now know that a model’s public narrative, API pricing, and third-party access experience can all be very different things, and HappyHorse is a clear example of that gap.

Final Verdict

HappyHorse AI matters less because it is perfect and more because it changed the conversation. It pushed AI video discussion away from “look, a cool clip” and toward continuity, motion control, and whether a generated sequence can actually feel directed. On current benchmark pages, it is clearly competitive across text-to-video and image-to-video, especially in no-audio settings, and it is close enough in audio-enabled rankings to stay in the center of the discussion. That is already enough to make it one of the most interesting AI video models of 2026.

The verdict, then, is a judgment rather than a sales pitch: HappyHorse is not just another model launch. It is a sign that AI video is becoming less about isolated spectacle and more about scene-making. That shift is what makes the whole story worth following.
