One of the strangest things about AI video right now is this: the models keep getting better, yet the rules around them keep getting tighter. xAI’s video system is built as a real creative pipeline, not a toy feature. Its docs describe text-to-video, image-to-video, video editing, and video extension, along with asynchronous processing and controls for duration, aspect ratio, and resolution. In other words, Grok video sits inside a production workflow rather than behind a one-click novelty button.

Why “Video Moderated” Matters More Than It Sounds

At first glance, “video moderated” sounds like a simple error message. In practice, it points to something bigger. xAI’s docs say a video request can return an invalid_argument error when moderation blocks the content, which means the system is not merely failing; it is actively enforcing boundaries.
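For anyone calling the API directly, the practical consequence is that a moderation block deserves its own branch in client code rather than being lumped in with generic failures. The sketch below is a minimal illustration under stated assumptions: the `JobStatus` shape, its `state` values, the `fetch_status` callable, and the `RequestRejected` exception are hypothetical names invented here, not xAI's SDK or response schema. The only detail taken from xAI's docs is the `invalid_argument` error code. Because that code may also cover malformed parameters, the sketch reports a rejection rather than claiming every such error is moderation.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class JobStatus:
    # Hypothetical shape of a polled video job; NOT xAI's actual response schema.
    state: str                        # assumed values: "pending", "done", "error"
    error_code: Optional[str] = None  # e.g. "invalid_argument", the code xAI's docs mention
    error_message: str = ""
    video_url: Optional[str] = None


class RequestRejected(Exception):
    """Hypothetical: the job ended with invalid_argument (moderation or other invalid input)."""


def wait_for_video(fetch_status: Callable[[], JobStatus],
                   poll_interval: float = 2.0,
                   max_polls: int = 150) -> str:
    """Poll an asynchronous job until it finishes, is rejected, or times out."""
    for _ in range(max_polls):
        status = fetch_status()
        # Finished with a result: hand back the video location.
        if status.state == "done" and status.video_url:
            return status.video_url
        if status.state == "error":
            # Per xAI's docs, a moderation block surfaces as invalid_argument.
            if status.error_code == "invalid_argument":
                raise RequestRejected(status.error_message or "invalid_argument")
            raise RuntimeError(f"generation failed: {status.error_code}")
        # Still pending (or done without a URL): wait, then poll again.
        time.sleep(poll_interval)
    raise TimeoutError("video job did not finish within max_polls")


if __name__ == "__main__":
    # Simulated job: two pending polls, then a moderation-style rejection.
    fake = iter([JobStatus("pending"), JobStatus("pending"),
                 JobStatus("error", "invalid_argument", "video moderated")])
    try:
        wait_for_video(lambda: next(fake), poll_interval=0.0)
    except RequestRejected as exc:
        print("rejected:", exc)
```

Keeping the polling loop separate from the transport (the `fetch_status` callable) means the late-stage rejection path can be tested with simulated statuses, as in the `__main__` block, without sending real requests.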

That matters because the message tells you where AI video is headed. We are no longer in a phase where the only question is whether a model can generate a clip. Now the bigger questions are who reviews the output, what the platform considers unacceptable, and how much creative ambiguity the system will tolerate. xAI’s Acceptable Use Policy makes that direction explicit: users must follow the law, avoid harmful use, and respect restrictions around privacy, copyright, sexual content involving people, and attempts to bypass safeguards.

The Real Story Behind the Restriction

Moderation Is Part of the Product, Not an Accident

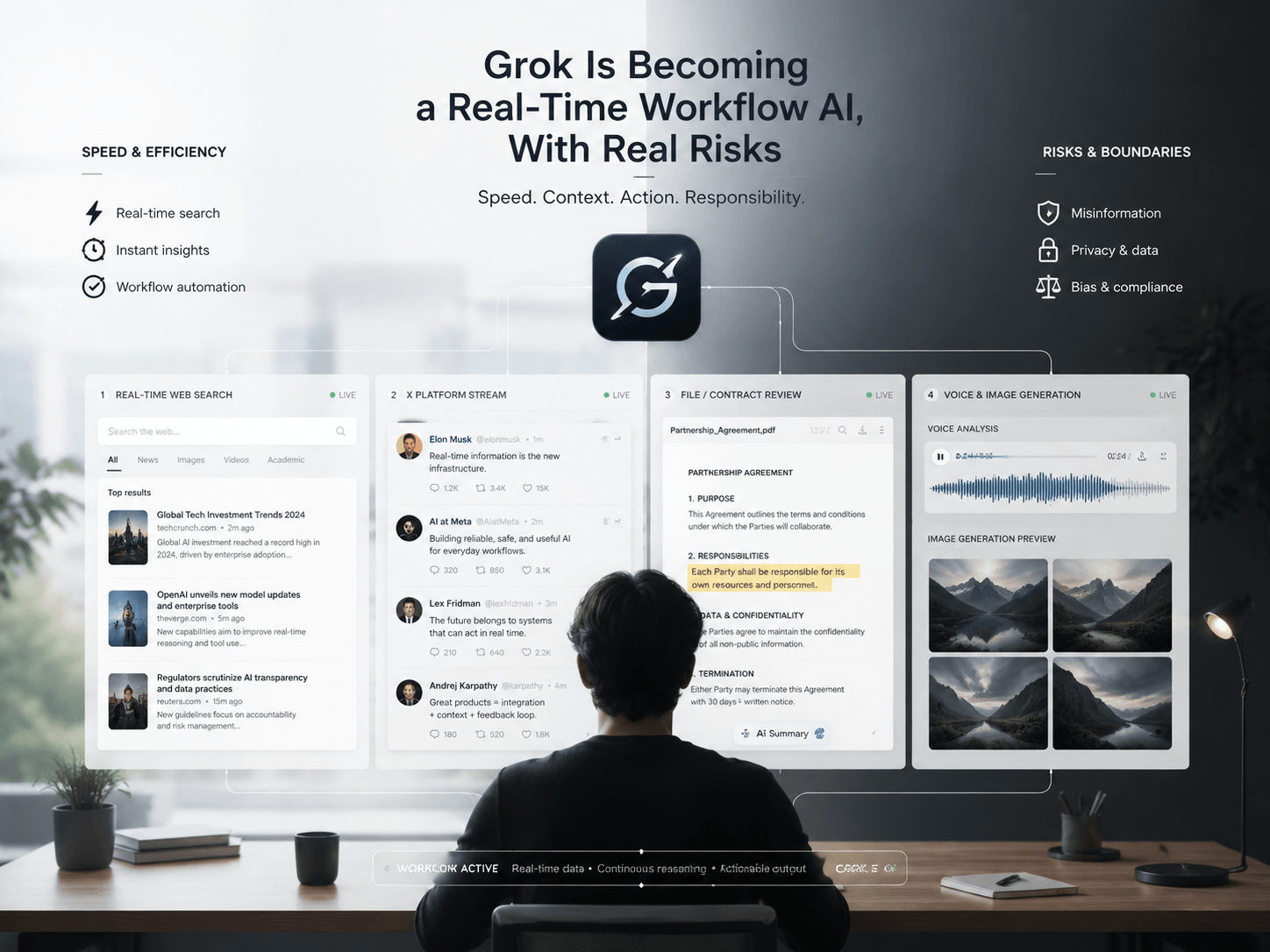

A lot of blog posts treat Grok video moderation like a support issue. That framing is too small. xAI presents Grok Imagine as an end-to-end creative stack with generation, editing, scene control, object control, restyling, and image-to-video workflows. Once a system becomes that integrated, moderation stops looking like a separate filter and starts looking like part of the product’s design language.

This is where the topic becomes interesting. When the platform rejects certain prompts, it does more than protect users; it also shapes the kind of visuals people learn to make. Over time, moderation can push output toward safer, cleaner, more generic aesthetics. That is an inference rather than a documented effect, but it follows from the fact that the platform applies policy constraints during generation instead of leaving every decision to the user.

Why Creators Feel the Friction

The most frustrating part is not always the rejection itself. It is the inconsistency. In community discussions on Reddit, users report prompts that run almost to completion before getting blocked, and they describe cases where similar prompts pass one moment and fail the next. Those are anecdotal reports, not official policy statements, but they capture the lived experience of moderation better than a generic FAQ ever could.

That inconsistency changes the emotional tone of the tool. A hard no feels simple. A late-stage rejection feels costly, because it wastes time and breaks momentum. As a result, the phrase “video moderated” starts to feel less like a technical message and more like a creative frustration. The system does not just block output; it interrupts the rhythm of making.

What This Says About the Future of AI Video

We Are Entering the Platform Era

The deeper shift is easy to miss. xAI now describes video as a structured, asynchronous workflow with multiple modes rather than a single prompt-and-go feature. That tells you where the category is moving: AI video is becoming platform software, and platform software always comes with rules, review layers, and policy trade-offs.

xAI’s image generation documentation likewise makes clear that outputs remain subject to policy review. That consistency across media types suggests a broader product philosophy: generation quality matters, but moderation and safety now sit inside the workflow itself.

Why This Keyword Deserves a Better Article

That is why “Grok video moderated” deserves more than a basic troubleshooting post. The phrase is small, but the implications are large. It reflects a new phase in AI video where the central issue is no longer just what the model can create. Instead, the real question is what the platform allows, how clearly it communicates that boundary, and how those rules reshape the creative process.

The best way to write about this topic is not to explain the message as if it were a bug report. Instead, treat it as a signal. It shows that AI video has moved beyond raw generation and into a world shaped by moderation, policy, and product design. That shift is much more interesting than the error itself.

Final Thought

The interesting thing about moderation is that it never stays technical for long. Once a creative tool starts deciding what it will not generate, it also starts shaping style, expectation, and workflow. That is the real story behind “Grok video moderated,” and it is exactly why this keyword deserves a strong editorial piece rather than another page of search filler.
