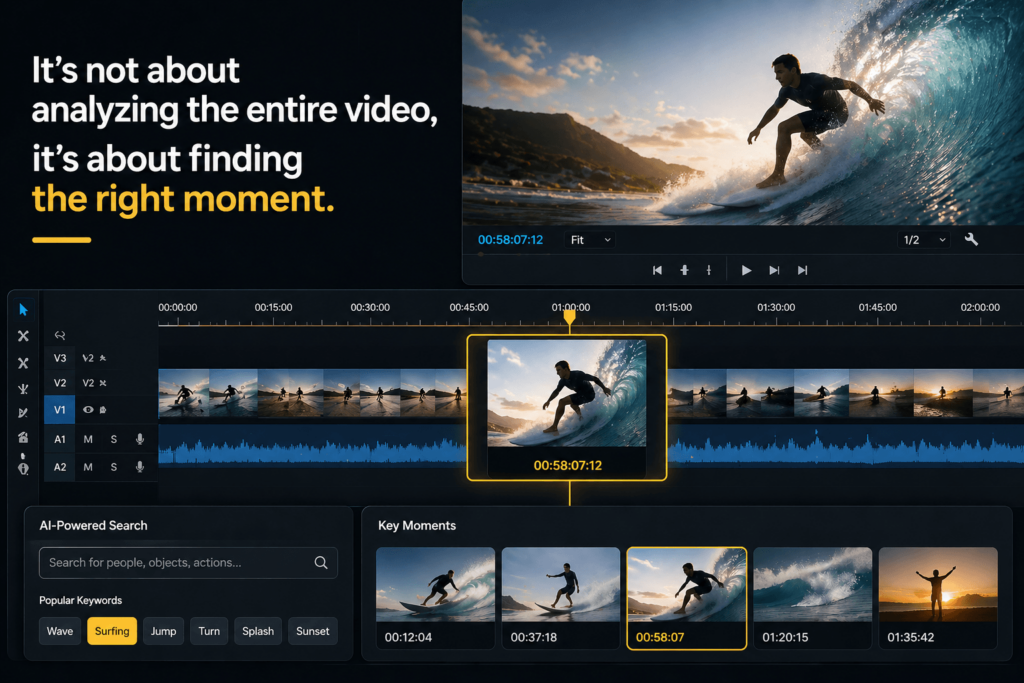

There is a version of the AI video analyzer story that sounds impressive and still misses the point.

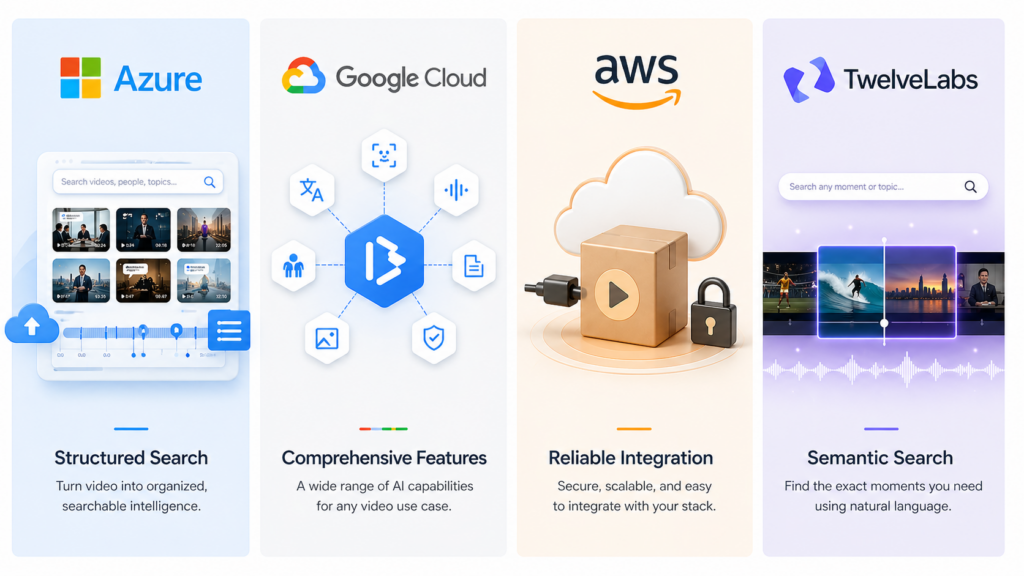

It says the tool can detect faces, transcribe speech, label objects, and summarize scenes. That is all true. But in practice, most people do not need AI to “watch” video for them. They need it to tell them where to look. That is the real job. Azure AI Video Indexer, Google Cloud Video Intelligence API, Amazon Rekognition Video, and TwelveLabs all sit in this space, but they approach the problem differently.

The market looks bigger than it is

At a glance, the category seems huge. In reality, it splits into two clear groups.

One group is classic cloud video intelligence: detect labels, track people, transcribe speech, find text, and turn video into metadata. That is the model behind Azure AI Video Indexer, Google Cloud Video Intelligence API, and Amazon Rekognition Video. The other group is moving closer to semantic video search, where the tool is less about counting objects and more about letting users ask questions in plain language. TwelveLabs fits that newer direction.
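To make "turn video into metadata" concrete, here is a minimal sketch of what the classic approach gives you to work with: a list of timestamped records you can filter. The `Segment` type, the `find_moments` helper, and the sample data are all hypothetical and not taken from any vendor's API. Real services return richer structures, but the idea is the same.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    kind: str     # e.g. "label", "speech", "text" (illustrative categories)
    value: str    # the detected label, transcript line, or on-screen text


def find_moments(segments, query):
    """Return (start, end) spans whose metadata mentions the query."""
    needle = query.lower()
    return [(s.start, s.end) for s in segments if needle in s.value.lower()]


# Made-up sample metadata for one video.
segments = [
    Segment(12.0, 15.5, "label", "dog"),
    Segment(40.2, 47.0, "speech", "welcome to the quarterly review"),
    Segment(300.0, 304.0, "text", "Quarterly Results"),
]

print(find_moments(segments, "quarterly"))
# → [(40.2, 47.0), (300.0, 304.0)]
```

This is plain keyword matching, which is roughly where metadata-first tools stop. Semantic search aims to answer the same "where should I look" question without requiring the exact word to appear in the metadata.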

What this means in practice

This difference matters because “video analysis” is not one thing. Sometimes the goal is compliance or archiving. Sometimes it is editing. Sometimes it is search. And sometimes it is just finding one good clip from hours of footage. The best tool depends on which of those problems you are actually trying to solve.

Azure AI Video Indexer feels built for teams, not hobby projects

Microsoft positions Azure AI Video Indexer as a cloud and edge video analytics service that extracts actionable insights from stored videos. It supports search across people, projects, visual text, spoken words, entities, and topics, and it is available through a web portal, widget, and REST API. That makes it feel like a product designed for workflows, not just demos.

What users like

On G2, one reviewer says, “Indexing the video is great and has scalable cloud enabled features.” That is a very specific kind of praise. It is not emotional. It is operational. It sounds like someone who actually needs the system to fit into a larger pipeline.

What users dislike

The same G2 page also shows a clear complaint: “The pricing is quite expensive, also its dependency on cloud requires stable internet.” That matters because it tells you exactly where the product starts to hurt. If your video workflow is cloud-friendly, Azure can feel strong. If you need something lighter, the cost and dependency can become the first thing people notice.

Google Cloud Video Intelligence API is broad, but speed shows up in the reviews

Google’s feature list is straightforward: explicit content detection, face detection, label detection, logo recognition, object tracking, person detection, shot change detection, speech transcription, and text detection. If you want a clean example of what a conventional AI video analyzer can do, Google gives you one of the widest official lists.

What users like

The review summary on G2 says users consistently praise the ease of use and the ability to quickly search through videos. That fits the product’s appeal pretty well. It is useful when the problem is organization and retrieval.

What users dislike

But the same page includes a much harsher line from a reviewer: “Extremely slow.” The reviewer adds that it is “too slow to use for professional projects” and says videos had to be split up and reviewed in parts. That kind of feedback changes the story. It suggests that a feature-rich analyzer is only useful if the workflow is fast enough to support real work.

Amazon Rekognition Video is the least flashy and maybe the easiest to trust

AWS documents Rekognition Video as a service that can detect labels in video and track people in stored video. It is asynchronous, API-driven, and clearly built for integration. That is not glamorous, but it is often exactly what teams need.
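Here is a rough sketch of what "asynchronous" means for an integration: you start a job, then check back until it is done. The helper below assumes `client` behaves like boto3's Rekognition client and exposes `get_label_detection(JobId=...)`. The function name `wait_for_labels` and its defaults are hypothetical, and result pagination is left out to keep it short.

```python
import time


def wait_for_labels(client, job_id, poll_seconds=5.0, max_polls=60):
    """Poll an asynchronous label-detection job until it finishes.

    Assumes the response is a dict with a "JobStatus" key and, on success,
    a "Labels" list whose items carry a millisecond "Timestamp" and a
    "Label" dict with a "Name".
    """
    for _ in range(max_polls):
        response = client.get_label_detection(JobId=job_id)
        status = response["JobStatus"]
        if status == "SUCCEEDED":
            # Convert milliseconds to seconds and keep only the label name.
            return [
                (item["Timestamp"] / 1000.0, item["Label"]["Name"])
                for item in response.get("Labels", [])
            ]
        if status == "FAILED":
            raise RuntimeError(response.get("StatusMessage", "job failed"))
        time.sleep(poll_seconds)
    raise TimeoutError(f"job {job_id} did not finish after {max_polls} polls")
```

With boto3, the job ID would come from `start_label_detection`, called with a `Video` argument that points at an S3 object. Polling is the simplest thing to show; a production setup would more likely rely on a completion notification than a loop.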

What users like

TrustRadius gives a good, plain-English snapshot. One reviewer says, “Easy to use framework, just make API calls.” The same review adds, “The accuracy is very good that we can rely on it.” That is the kind of feedback that helps readers trust the product for practical work, especially when the goal is dependable automation rather than novelty.

What users dislike

The same reviewer also notes that the cost is higher for small companies. That is useful to mention because many AI video tools look reasonable in a demo and expensive at scale. AWS feels sturdy here, but not necessarily cheap.

TwelveLabs points to where the category is going

TwelveLabs is interesting because it is not just trying to label video. Its docs focus on search, summaries, chapters, and highlights, which pushes the product closer to “find the moment” than “describe the frame.” That is a meaningful shift.

What users say

On Product Hunt, a reviewer says TwelveLabs is “seamlessly powering all the deep video analysis for complex editing.” There is only one visible review on the page right now, so the public sample is small. Even so, the wording is useful because it matches the company’s direction: less about static detection, more about useful retrieval and editing.

My take

TwelveLabs feels like the right example when you want to argue that the future of video analysis is not about replacing human judgment. It is about narrowing the search space fast enough that human judgment becomes practical again.

The real comparison is not features. It is friction.

That is the part most blog posts miss.

Azure feels strongest when video analysis is part of a larger content workflow. Google offers a broad feature set, but public users have complained about speed. AWS looks dependable and integration-friendly. TwelveLabs is the boldest attempt to make video behave more like searchable knowledge than raw media.

The simplest way to choose

If you need searchable metadata, Azure is easy to justify. If you need a wide detection toolkit, Google is a solid reference. If you want an API-first service inside an AWS stack, Rekognition is the safe bet. If you want semantic search over video, TwelveLabs is the most future-facing option.

A useful ending is not a big promise

AI video analysis is not valuable because it sounds advanced.

It is valuable when it helps someone find the exact second they need, without watching everything around it. That sounds small, but it is the difference between a feature people try once and a tool people keep using.
