What Happens When You Remove the Workflow

HappyHorse AI is not trying to improve video generation step by step. It is trying to remove the steps entirely.

Instead of generating a silent clip that needs editing, voiceover, and syncing, it produces video and audio together in a single pass. Dialogue, ambient sound, and motion are generated as one piece. The result feels less like raw material and more like something already assembled.

That shift sounds subtle, but it changes how you evaluate the output. You are no longer asking whether the visuals are good. You are asking whether the result is usable as-is.

Task 1: Can It Produce a Usable Video Ad?

I started with a simple test: generate a short product ad with voiceover and background sound.

The first impression was surprisingly strong. The clip arrived with sound already in place. There was no need to imagine the final version or layer additional elements. It felt complete in a way most AI video outputs still do not.

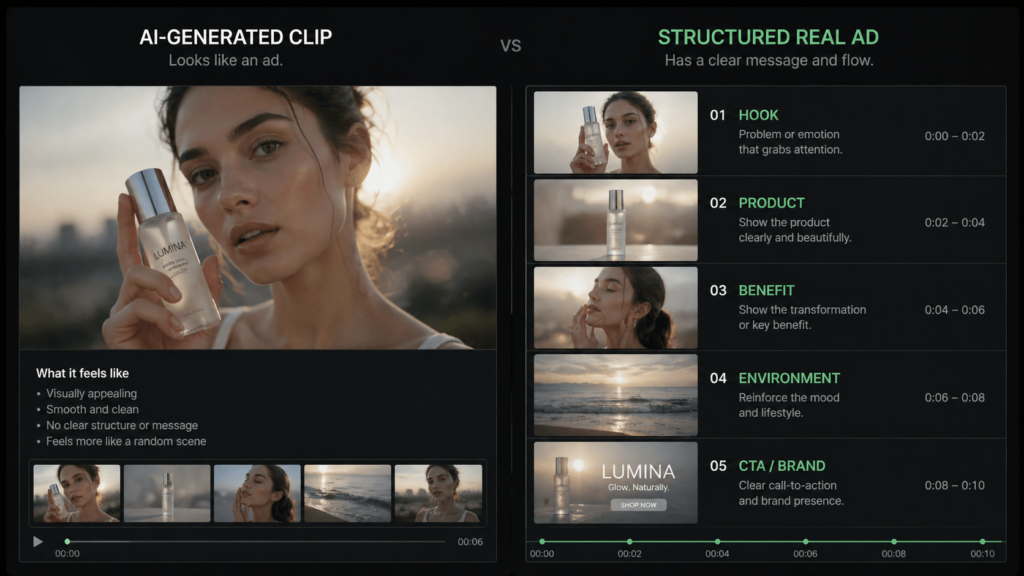

But that sense of completeness does not automatically translate into usability.

The pacing was slightly off, as if it missed the intended beat. The voiceover was present, but its tone did not fully match the visuals. More importantly, the clip lacked the structure of an actual ad. It looked like a scene that resembled advertising rather than a piece designed to persuade.

That gap shows up often with this model. It can generate something that looks finished, but it does not yet understand what the piece is supposed to achieve.

Task 2: Dialogue Feels Right—Until It Doesn’t

One of the headline features is synchronized speech and lip movement. On a technical level, it works. The timing aligns, the voice is present, and the output feels coherent at a glance.

But the longer you watch, the more something feels off.

The issue is not synchronization. It is expression. Lines are delivered correctly, but without enough emotional weight. The model can speak, but it does not always communicate.

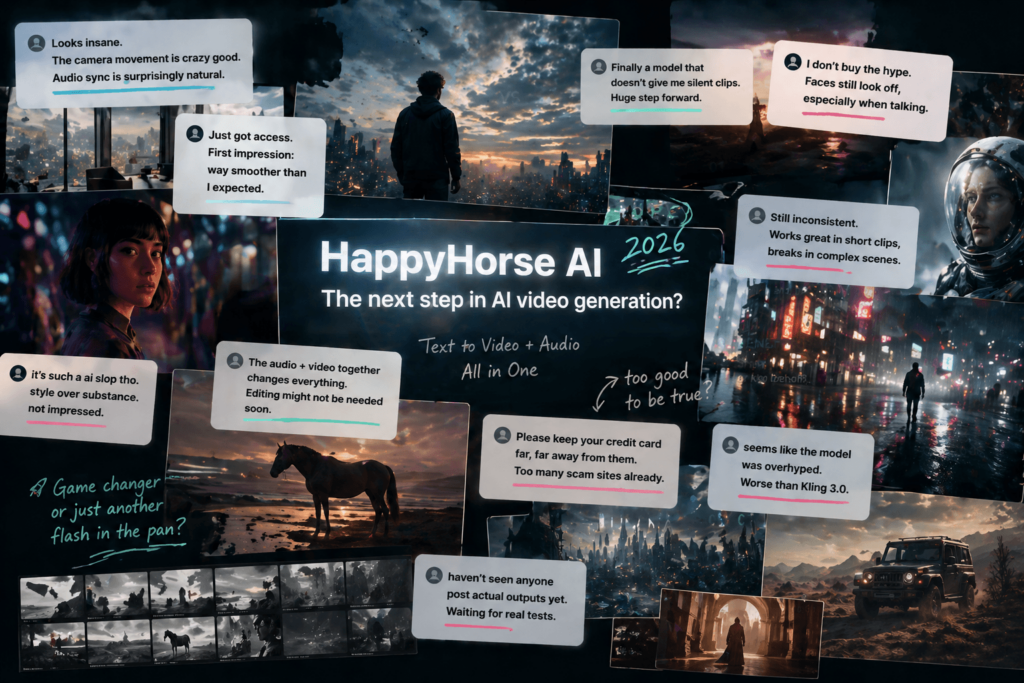

This is exactly where user reactions start to diverge.

Some early viewers describe the output as impressive at first glance. Others are much less convinced. One comment captures that skepticism bluntly:

“it’s such a ai slop tho.”

That contrast is important. The same clip can feel advanced and unconvincing at the same time, depending on what you are looking for.

Why Audio + Video Generation Changes More Than It Seems

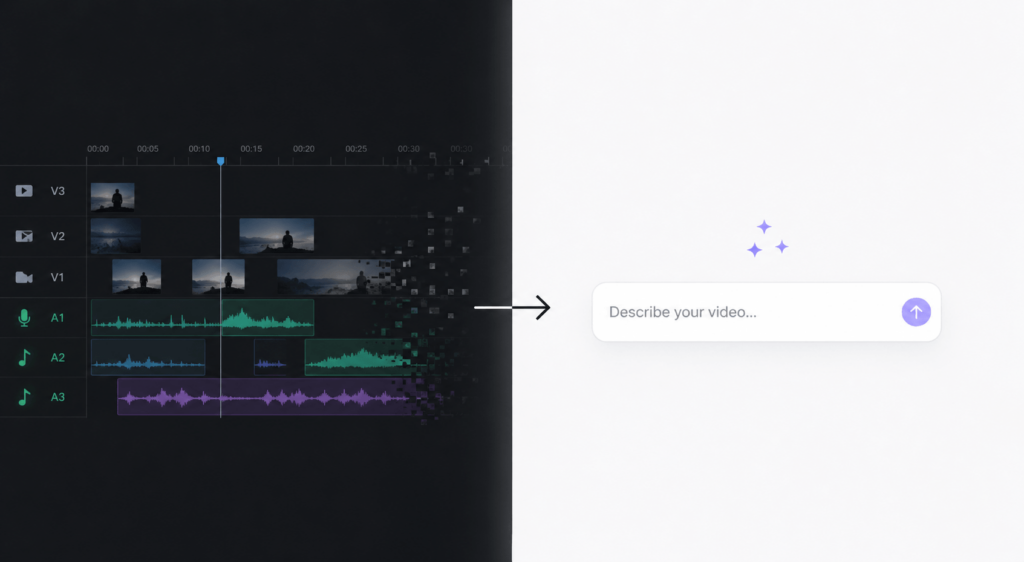

Most discussions treat audio-video generation as just another feature. It is more useful to see it as a shift in process.

Traditional workflows are layered. You generate visuals, then add sound, then adjust timing. Each step allows for intervention. Each step also adds friction.

HappyHorse compresses all of that into a single generation step.

That compression removes effort, but it also removes control. If something feels off, there is no clean way to fix just one part. You regenerate the entire clip.

This tradeoff is part of why reactions feel inconsistent. Some people value the speed. Others are frustrated by the lack of control.

In fact, part of the skepticism is not about output quality at all. It is about whether anyone outside the demos is actually using the model yet. As one user put it:

“haven’t seen anyone post actual outputs yet.”

That uncertainty shapes how the model is perceived just as much as the technology itself.

What Real Users Are Actually Reacting To

If you look across discussions, the split is not random. It follows a pattern.

On one side, there is genuine curiosity. People see a model that reduces friction and produces something closer to a finished clip. That alone makes it feel like progress.

On the other side, there is hesitation. Not just about quality, but about trust. Access is limited, and many claims come from demos rather than consistent hands-on use.

That leads to a different kind of concern altogether:

“Please keep your credit card far, far away from them.”

That comment is not about rendering quality. It is about the ecosystem forming around the model: third-party sites, unclear access points, and the risk of paying for something that is not fully available.

So the conversation shifts. It is no longer just “Is this model good?”

It becomes “Is this model even usable right now?”

Where It Still Falls Apart

Once you move beyond simple prompts, the limitations appear quickly.

Longer sequences lose consistency. Multi-character scenes become unstable. More detailed instructions tend to reduce reliability instead of improving it.

This is where expectations need to adjust. The model performs best with simple, controlled scenarios. It struggles when asked to handle layered intent.

Some users go further and question the overall positioning. One comment puts it directly:

“seems like the model was overhyped. Worse than Kling 3.0.”

Whether or not that comparison is fair, it reflects a real sentiment. Expectations rose quickly, and the current experience does not always match them.

Who This Is Actually For

At this stage, the value of HappyHorse depends heavily on how you plan to use it.

If your work benefits from speed and iteration, the model already has practical use. It can generate quick scenes, rough concepts, and short-form content without the usual setup.

If your work depends on precision and consistency, the current limitations will be difficult to work around. The lack of control becomes a bottleneck rather than a benefit.

If you are thinking about tools and workflows, this model is worth paying attention to regardless of its current flaws. It points clearly toward video creation that involves fewer steps.

Final Thought: This Is Not About Better Video

It is easy to evaluate HappyHorse AI in terms of quality. That misses what makes it interesting.

The real shift is not in how good the video looks. It is in how little process is required to produce it.

Right now, the model is not stable enough to replace existing workflows. It is not consistent enough for production use. And it is not accessible enough for most people to fully test.

But it introduces a different expectation.

If video can be generated as a single step, then the workflow around video starts to feel less necessary. Even in its current state, that idea is hard to ignore.
