The tester had been through this before: upload a garment photo, type a prompt, cross your fingers, hit generate, then spend twenty minutes regenerating because the model’s face drifted, the color shifted, or the garment morphed into something the designer wouldn’t recognize. This time, with ByteDance’s Doubao 4.0 model, the first generation landed. No lottery. No re-rolls. One prompt, one usable output. The consistency problem that has plagued AI virtual try-on tools for years looked, at least in this test, largely solved by a single model update.

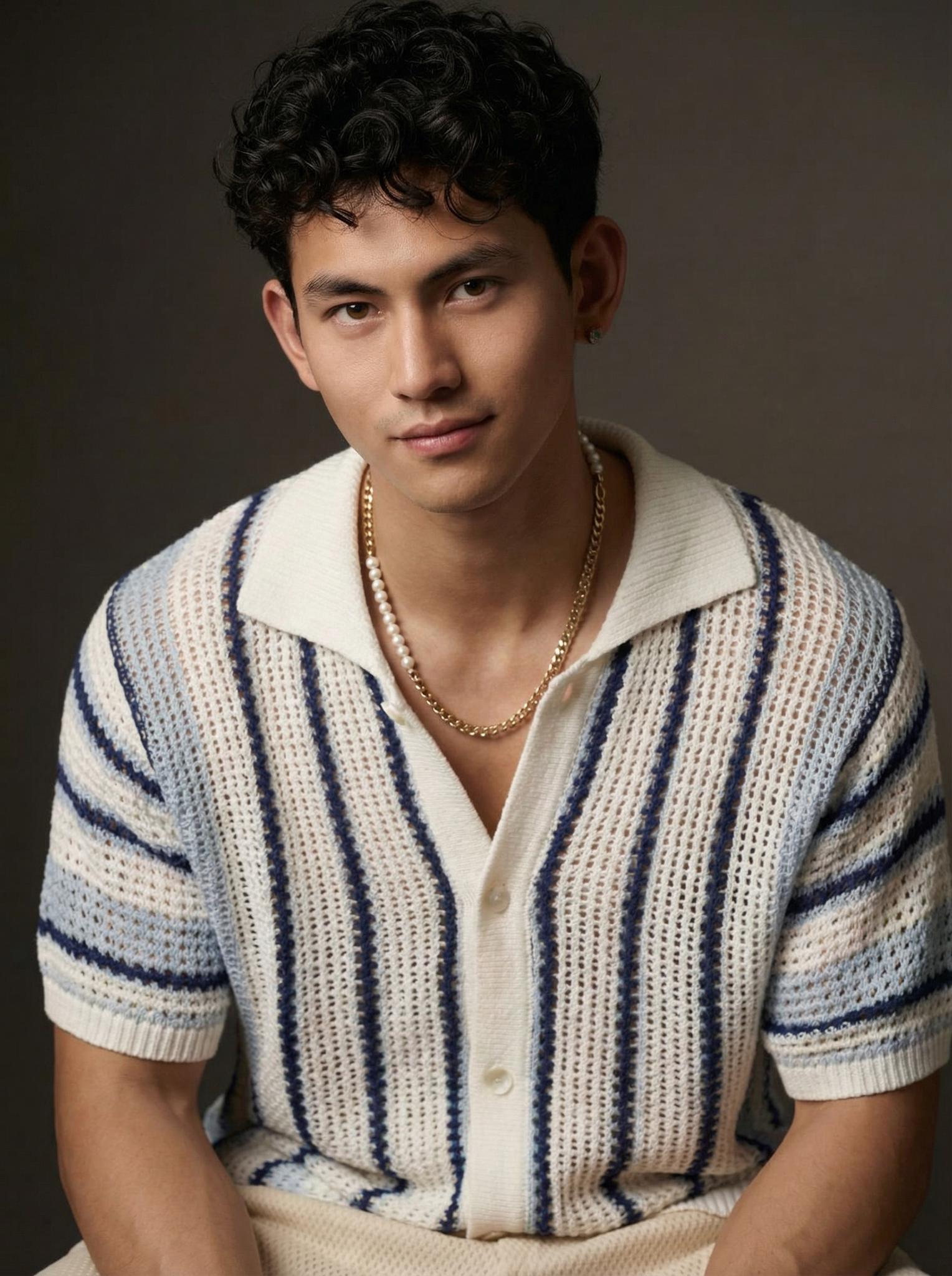

Left: Source garment | Right: AI-generated model shot — first attempt, no re-rolls

The Consistency Breakthrough: Why “First-Shot Accuracy” Changes Everything

In the world of AI image generation, there’s a metric that matters more than raw quality: first-shot accuracy. It’s the percentage of generations that are usable without regeneration. For early virtual try-on tools, this number hovered around 15-20% — meaning you’d generate 5-7 images to get one good one. For the current generation of specialized tools, it’s climbed to 60-70%. But the latest models — trained on massive fashion-specific datasets with consistency-focused loss functions — are pushing 90%+.

This isn’t just a convenience improvement. It fundamentally changes the economics of AI-generated fashion content. When 9 out of 10 generations are usable, you stop thinking of AI as a “slot machine” and start thinking of it as a “camera” — a reliable tool that produces predictable output. That mental shift is what drives adoption from experimental to production.

For e-commerce sellers processing hundreds of SKUs, the difference between 20% and 90% first-shot accuracy is the difference between AI being a curiosity and AI being a workflow replacement. At 20%, you still need a human reviewer spending significant time curating outputs. At 90%, the reviewer only has to catch the exceptions.
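To make the economics concrete, here is a rough sketch of the arithmetic. It assumes each generation is an independent attempt with the same success probability, so the expected number of attempts per usable image is 1 / accuracy. The catalog size (300 SKUs, 4 images each) and the function names are illustrative assumptions, not figures from any real tool.

```python
import math


def expected_generations(first_shot_accuracy: float) -> float:
    """Mean number of attempts until one usable image (geometric distribution)."""
    if not 0 < first_shot_accuracy <= 1:
        raise ValueError("first_shot_accuracy must be in (0, 1]")
    return 1.0 / first_shot_accuracy


def batch_cost(num_skus: int, images_per_sku: int, first_shot_accuracy: float) -> dict:
    """Expected total generations and rejects for a whole catalog."""
    usable_needed = num_skus * images_per_sku
    total = math.ceil(usable_needed * expected_generations(first_shot_accuracy))
    return {
        "usable_needed": usable_needed,
        "expected_generations": total,
        "expected_rejects": total - usable_needed,
    }


# Hypothetical catalog: 300 SKUs, 4 usable images each
for accuracy in (0.20, 0.65, 0.90):
    print(f"{accuracy:.0%}", batch_cost(300, 4, accuracy))
```

The rejects column is what a human reviewer has to sift through, which is why the jump from 20% to 90% changes the review workload and not just the generation bill.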

The Science Behind First-Shot Consistency: Architecture Innovations in 2026

Three architectural innovations converge to produce the consistency leap we’re seeing in 2026-generation models.

1. Identity-Preserving Attention (IPA)

Traditional diffusion models treat the garment and the model as separate conditioning signals. IPA introduces cross-reference attention layers that explicitly link garment regions to corresponding body regions throughout the entire denoising process. The collar of the input garment maintains a direct attention pathway to the collar area of the output, ensuring spatial correspondence that previous architectures achieved only probabilistically.
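The internals of IPA are not public in this article, so the following is only a minimal sketch of the generic mechanism it builds on: scaled dot-product cross-attention, where each output-image region queries the garment regions. The feature vectors and region names are toy values chosen for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross_attention(queries, keys, values):
    """Scaled dot-product cross-attention.

    queries: features of output-image regions
    keys/values: features of garment regions
    Returns (attended outputs, attention weights per query).
    """
    scale = math.sqrt(len(keys[0]))
    outputs, weights = [], []
    for q in queries:
        w = softmax([dot(q, k) / scale for k in keys])
        weights.append(w)
        outputs.append(
            [sum(wi * v[d] for wi, v in zip(w, values)) for d in range(len(values[0]))]
        )
    return outputs, weights


# Toy features (hypothetical): three garment regions, two output regions
garment_regions = ["collar", "sleeve", "hem"]
garment_feats = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]
output_queries = [
    [4.0, 0.0, 0.0],  # output collar area
    [0.0, 0.0, 4.0],  # output hem area
]

_, attn = cross_attention(output_queries, garment_feats, garment_feats)
for name, w in zip(["output collar", "output hem"], attn):
    strongest = garment_regions[w.index(max(w))]
    print(name, "->", strongest, [round(x, 3) for x in w])
```

In this toy setup the output collar attends almost entirely to the garment collar. A real model would learn these features and apply the attention at every denoising step.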

2. Consistency-Weighted Training Loss

Earlier models were trained to produce “good-looking” images, optimizing for image quality metrics like FID and LPIPS. Current models add an explicit consistency loss that penalizes deviations of the output garment’s color histogram, pattern frequency spectrum, and edge structure from those of the input garment. This dual optimization produces outputs that are both aesthetically pleasing and faithful to the source material.
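As a rough illustration of how such a combined objective could be assembled, the sketch below implements only the color histogram term and adds it to a placeholder perceptual loss. The bin count, the L1 distance, and the 0.5 weight are assumptions made for the example. The pattern-spectrum and edge terms are omitted, and nothing here reflects the actual training code of any named model.

```python
def color_histogram(pixels, bins_per_channel=4):
    """Normalized joint RGB histogram of (r, g, b) pixels with values 0..255."""
    step = 256 // bins_per_channel
    hist = [0.0] * (bins_per_channel ** 3)
    for r, g, b in pixels:
        idx = (
            (r // step) * bins_per_channel ** 2
            + (g // step) * bins_per_channel
            + (b // step)
        )
        hist[idx] += 1.0
    return [h / len(pixels) for h in hist]


def histogram_l1(source_pixels, output_pixels):
    """L1 distance between histograms: 0 means identical, 2 means disjoint."""
    hs = color_histogram(source_pixels)
    ho = color_histogram(output_pixels)
    return sum(abs(a - b) for a, b in zip(hs, ho))


def total_loss(perceptual_loss, consistency_terms, weight=0.5):
    """Perceptual loss plus a weighted sum of consistency penalties."""
    return perceptual_loss + weight * sum(consistency_terms)


# Toy garment crops (hypothetical pixel values)
source = [(20, 30, 90)] * 100     # navy source garment
faithful = [(25, 28, 95)] * 100   # slightly different navy, same bins
drifted = [(20, 30, 200)] * 100   # color shifted toward bright blue

print("faithful:", total_loss(0.3, [histogram_l1(source, faithful)]))
print("drifted: ", total_loss(0.3, [histogram_l1(source, drifted)]))
```

The drifted output pays a penalty even if its perceptual score is just as good, which is the point of adding the second term.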

3. Multi-Scene Generation via Shared Latent Anchors

The third innovation is the ability to generate the same garment on the same model across multiple scenes (different backgrounds, lighting, and poses) without the garment changing appearance between scenes. This is achieved through shared latent anchors: a fixed encoding of the garment that persists across all generations in a batch, ensuring that the red dress is the same shade of red whether the model is in a park or a studio.
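At the orchestration level, the idea amounts to "encode once, reuse everywhere." The sketch below shows only that pattern. The `GarmentAnchor` type, `encode_garment`, and `build_batch` are hypothetical names, and the SHA-256 digest is merely a deterministic stand-in for a learned latent encoder.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class GarmentAnchor:
    """Immutable garment encoding shared by every request in a batch."""
    garment_id: str
    encoding: tuple  # stand-in for a latent vector


def encode_garment(garment_id: str, image_bytes: bytes) -> GarmentAnchor:
    """Hypothetical encoder: a hash digest stands in for a learned embedding."""
    digest = hashlib.sha256(image_bytes).digest()
    return GarmentAnchor(garment_id, tuple(b / 255 for b in digest[:8]))


def build_batch(anchor: GarmentAnchor, scenes):
    """Attach the same anchor object to every scene request."""
    return [{"anchor": anchor, "scene": scene} for scene in scenes]


# The garment is encoded once, then reused for every scene
anchor = encode_garment("red-dress-001", b"placeholder image bytes")
batch = build_batch(anchor, ["park", "studio", "street at dusk"])

for request in batch:
    print(request["scene"], "->", request["anchor"].garment_id)
```

Because the anchor is frozen and shared, only the scene varies between requests. Re-encoding the garment per scene would reintroduce the variation the technique is meant to remove.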

Practical Impact: What Sellers Can Do Now That They Couldn’t Before

Multi-Scene Product Galleries in One Session

Generate 8-10 images of the same garment across different backgrounds and poses in a single batch. The consistency ensures the product looks identical across all images — critical for product listings where customers compare multiple views.

One-Prompt Outfit Changes

Describe the outfit change in natural language: “Same model, same location, swap the blue jacket for a red one.” The model understands the intent and produces a coherent swap. Previously, this required re-generating the entire image from scratch, often with inconsistent results.

Pose Variation Without Identity Drift

Generate the same model wearing the same garment in 5 different poses. The model’s face, body proportions, and the garment’s appearance remain consistent across all poses. This was the most-requested feature from professional users, and it’s now achievable with high reliability.

Across a pose variation series, the garment rendering stays consistent: the fabric weight, the hem position, and the way the material catches light all remain anchored to the same physical properties, as if photographed by a real camera rather than imagined by an algorithm.

Actionable Scene Guide: Maximizing First-Shot Accuracy

Prompt Engineering for Consistency

- Be specific about the garment: “navy blue cotton button-down shirt” beats “a shirt.” Material, color, and style keywords anchor the generation.

- Describe the model once, reuse: Create a detailed model description and paste it across all prompts for a batch. This locks identity.

- Avoid conflicting instructions: “Casual pose in a formal setting” confuses the model. Keep mood, pose, and setting aligned.
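The three tips above can be folded into a small prompt builder. This is a sketch only: the model description, the mood tags, and the prompt layout are all made up for illustration, and the right phrasing will depend on the tool you use.

```python
# Written once, reused verbatim in every prompt of the batch (hypothetical text)
MODEL_DESCRIPTION = (
    "woman in her late 20s, shoulder-length black hair, medium build, "
    "neutral expression"
)

# Hypothetical mood tags used to catch conflicting instructions
POSE_MOOD = {
    "hands in pockets, relaxed stance": "casual",
    "standing straight, arms at sides": "formal",
}
SETTING_MOOD = {
    "city park, afternoon light": "casual",
    "marble hotel lobby": "formal",
}


def build_prompt(garment, pose, setting, model_description=MODEL_DESCRIPTION):
    """Combine a fixed model description with a specific garment, pose, and setting."""
    pose_mood = POSE_MOOD.get(pose)
    setting_mood = SETTING_MOOD.get(setting)
    # Only flag a conflict when both parts carry a known, different mood tag
    if pose_mood and setting_mood and pose_mood != setting_mood:
        raise ValueError(f"conflict: {pose_mood} pose in a {setting_mood} setting")
    return f"{model_description}, wearing a {garment}, {pose}, {setting}"


print(
    build_prompt(
        "navy blue cotton button-down shirt",
        "hands in pockets, relaxed stance",
        "city park, afternoon light",
    )
)
```

Keeping the description in one constant removes the risk of small wording differences creeping in between prompts.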

Batch Processing for Consistency

- Generate all variations of one garment before moving to the next. This keeps the garment encoding in the model’s attention cache.

- Process similar garment categories together (all shirts, then all trousers). The model performs better within category than across categories.
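One simple way to apply both rules is to sort the job queue by category and then by SKU before submitting it. The job fields and SKU codes below are hypothetical.

```python
# Hypothetical job queue in arbitrary arrival order
jobs = [
    {"sku": "TR-07", "category": "trousers", "scene": "park"},
    {"sku": "SH-01", "category": "shirt", "scene": "studio"},
    {"sku": "SH-02", "category": "shirt", "scene": "studio"},
    {"sku": "TR-07", "category": "trousers", "scene": "studio"},
    {"sku": "SH-01", "category": "shirt", "scene": "park"},
]


def order_jobs(jobs):
    """Group by category, then by garment, so all variations of one garment run together.

    sorted() is stable, so scenes keep their original relative order within a SKU.
    """
    return sorted(jobs, key=lambda job: (job["category"], job["sku"]))


ordered = order_jobs(jobs)
for job in ordered:
    print(job["category"], job["sku"], job["scene"])
```

After sorting, every variation of a garment is contiguous, and categories are processed one after another.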

Quality Control Checklist

- ✅ Color match: Compare garment color in output vs. source (use eyedropper tool)

- ✅ Pattern integrity: Are stripes straight? Are prints recognizable?

- ✅ Silhouette accuracy: Does the neckline/hemline/sleeve length match?

- ✅ Physics plausibility: Does the fabric drape naturally for its weight?
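The color check is the easiest item to automate. The sketch below compares two eyedropper samples by Euclidean distance in RGB and merges the result with the manual checks. The threshold of 30 is an arbitrary assumption, and plain RGB distance is only a rough proxy for perceived color difference.

```python
import math


def color_distance(rgb_a, rgb_b):
    """Euclidean distance between two RGB samples (0..255 per channel)."""
    return math.dist(rgb_a, rgb_b)


def qc_report(source_rgb, output_rgb, manual_checks, max_color_distance=30.0):
    """Combine the automated color check with reviewer-entered checks."""
    results = {
        "color_match": color_distance(source_rgb, output_rgb) <= max_color_distance
    }
    results.update(manual_checks)
    results["passed"] = all(results.values())
    return results


# Reviewer-entered results for the remaining checklist items (hypothetical)
manual = {
    "pattern_integrity": True,
    "silhouette_accuracy": True,
    "physics_plausibility": True,
}

print(qc_report((20, 30, 90), (25, 28, 95), manual))   # close color sample
print(qc_report((20, 30, 90), (20, 30, 200), manual))  # shifted color sample
```

Sampling several points on the garment and averaging them would make the check less sensitive to shadows and highlights.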

Expert Consulting FAQ

Q1: How does Doubao 4.0’s virtual try-on compare to Kolors and Flux?

Doubao 4.0 excels at consistency — first-shot accuracy and cross-scene coherence. Kolors maintains an edge in artistic quality for editorial-style outputs. Flux offers the most control over generation parameters. For production e-commerce use, consistency matters most, giving Doubao 4.0 a practical advantage.

Q2: Can these new models handle text on garments — brand logos, graphic tees?

Improving but not solved. Simple text (1-2 words in large font) renders correctly about 60% of the time. Complex text, small fonts, and non-Latin scripts remain unreliable. For branded merchandise, this is still a significant limitation.

Q3: What hardware do I need to run these models locally?

Production-quality virtual try-on models require 16GB+ VRAM GPUs (RTX 4080 or better). Cloud-based solutions eliminate hardware requirements entirely — most professional tools run inference on their own GPU clusters, so you only need a web browser and an internet connection.

Q4: How quickly are these models improving? Should I wait for the next generation?

Major architecture updates arrive every 6-9 months. But waiting is a false economy — the content you produce today generates SEO value, sales, and brand presence that compounds over time. Use today’s tools now; upgrade to tomorrow’s tools when they arrive.

Q5: Are there intellectual property concerns with AI-generated fashion model photos?

AI-generated models don’t use real people’s likenesses (unless explicitly prompted), so model release forms aren’t required. The garment IP belongs to the brand/designer. The generated image’s copyright status varies by jurisdiction but is generally treated as the user’s work product when created through a commercial tool.